

July 31, 2017

NASA is currently on a mission to send humanity deeper into the Solar System than ever before. That effort includes completing programs such as the Orion capsule and the Space Launch System, which in turn requires new training and procedures for astronauts to learn.

It is important to find ways to reduce cost and schedule impacts while preserving the effectiveness of traditional astronaut training methods. This matters most for Mars exploration, where missions are expected to last months or years at a time. NASA engineers can use immersive environment technologies to make training feel as realistic as possible across a range of simulations.

In 2015, NASA founded the Hybrid Reality Lab to combine consumer virtual reality technology with tracked 3D objects (physical objects whose position in 3D space is located using object-tracking technology). The combination produces realistic visuals and tactile feedback, creating a much stronger sense of immersion. The lab uses off-the-shelf VR headsets, Unreal Engine 4 (a commercial game engine supporting advanced rendering, physics, and networking), and NASA-specific content to create training environments.

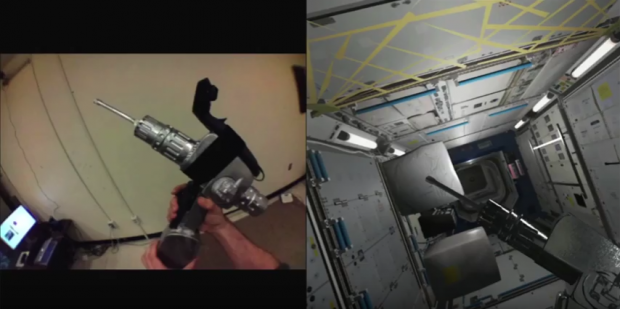

A hybrid reality simulation exercise for training astronauts to use a drill aboard the International Space Station. The drill was 3D scanned and placed into the hybrid reality environment. For safety reasons, moving parts such as the drill bit are animated only in hybrid reality. Haptic feedback vibrates the controller so the trainee feels as if they are actually drilling.

A major goal is to simulate reduced gravity and provide a sense of tactile feedback. A sister branch at NASA’s Johnson Space Center currently operates the Active Response Gravity Offload System (ARGOS).

“It is essentially a smart tether which attaches to your back, offloads your body weight and accounts for your momentum in the vertical and horizontal directions to make you feel like you are in Lunar gravity, Martian gravity, microgravity or anywhere in between,” says Matthew Noyes, Software Lead at NASA’s Hybrid Reality and Advanced Operational Concepts Lab.
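As a rough illustration of what "offloading body weight" means, consider only the static part of the calculation. To make a person of mass m feel a target gravity g_t while standing in Earth gravity g_E, a tether must carry an upward force of m(g_E − g_t). The Python sketch is a simplified, hypothetical model and not ARGOS's actual control software. It ignores the dynamic momentum compensation Noyes describes. The gravity values are approximate surface figures: about 1.62 m/s² for the Moon and 3.71 m/s² for Mars.

```python
# Hypothetical, static-only model of a gravity-offload tether (not ARGOS code).
# Standard Earth gravity and approximate surface gravities, in m/s^2.
G_EARTH = 9.80665
G_TARGET = {"lunar": 1.62, "martian": 3.71, "microgravity": 0.0}


def offload_force(mass_kg: float, target_g: float) -> float:
    """Upward force (N) the tether must supply so that the net downward
    force on a person of mass_kg equals mass_kg * target_g.
    Ignores acceleration and horizontal motion (static approximation)."""
    if not 0.0 <= target_g <= G_EARTH:
        raise ValueError("target gravity must be between 0 and Earth gravity")
    return mass_kg * (G_EARTH - target_g)


def offload_fraction(target_g: float) -> float:
    """Fraction of the person's Earth body weight carried by the tether."""
    return 1.0 - target_g / G_EARTH


if __name__ == "__main__":
    mass = 80.0  # hypothetical trainee mass in kg
    for name, g in G_TARGET.items():
        force = offload_force(mass, g)
        print(f"{name}: {force:.1f} N ({offload_fraction(g):.0%} of body weight)")
```

Under this simplification, the offload fraction does not depend on the trainee's mass. A real system must also react to the trainee's acceleration as they move, which is why the quote stresses that ARGOS accounts for momentum in both vertical and horizontal directions.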

The Hybrid Reality Lab environment has been merged with the ARGOS system. Users wearing a VR headset can therefore move through a virtual representation of the International Space Station by pulling themselves through the 3D world, and as they do, the virtual ISS appears to move around them.

A video-game-based simulation exercise that lets the trainee feel like a Mars astronaut. Here, the trainee performs a visual inspection of a vehicle before operating it.

The lab has created a series of applications to showcase the capabilities of hybrid reality. The first was the International Space Station, a dynamic simulation of most of the interior modules frequented by American astronauts.

Multiple users can train together inside virtual reality, so they can collaborate even from opposite sides of the world. It also opens up opportunities for more outreach. People at home with their own consumer headsets could log into a server and watch astronauts train in real time, in a far more exciting way than is possible with regular NASA TV.

A tool like this Olympus Delta X-ray fluorescence (XRF) metals and alloys analyzer is likely to be used on Mars in order to determine the mass ratio of different elements in samples of rock collected on the Martian surface.

NASA has many existing space assets, such as the Pistol Grip Tool (PGT), a power tool used to drive bolts. Many other tools are under development for future missions. One example is an X-ray fluorescence (XRF) tool, based on commercially available instruments, for determining the composition of rock and soil on Mars.

“Now, when collecting samples on the Martian surface you would most likely use a tool like this to tell you what the mass ratio of the different elements inside that sample is, and using 3D scanning we’re able to make this model look extremely realistic, so you can learn how to use the tool inside virtual reality,” says Matthew Noyes.

This tool and others were 3D scanned with the Artec Eva to produce highly realistic textured models. The models were then imported into a virtual environment for simulation training.

A 3D model of the Delta XRF analyzer made with Artec Space Spider 3D scanner. The model is used in NASA’s hybrid reality simulation exercises to train future astronauts how to handle tools and machines on Mars. In hybrid reality, the trainee can feel the device’s weight under different gravity conditions and get tactile feedback from the device when pressing buttons on it.

“Rather than painstakingly modeling these tools/assets from physical measurements and photographs, or working from textureless CAD files, we thought that using a 3D scanner to create millimeter-precise models from real objects was a much better solution,” says Matthew Noyes.

Along with the Eva scanner, the lab uses Artec Studio software to create point clouds, remove noisy elements, and texture the resulting mesh.

“The Hybrid Reality Lab uses the Artec Eva scanner due to its use of white LEDs for illumination, which makes it eye-safe,” says Frank Delgado, Project Lead at NASA’s Hybrid Reality and Advanced Operational Concepts Lab. “The Artec also met our accuracy requirements, is easy to use, and provided a very good solution for scanning small and medium-sized objects.”

In addition to 3D scanning the various tools and instruments, the lab recreated their digital interfaces and recorded their sound effects. The goal was to make each VR representation look and behave as much like the real object as possible.
