Latest News

May 31, 2009

By Bob Cramblitt

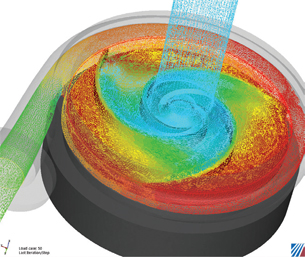

View of internal flow patterns of Cornell’s centrifugal pump modeled with CFdesign. It also highlights the liquid pressure.

For most engineers, performance claims for cluster computing have been like Bill Gates’ money or LeBron James’ basketball ability: fascinating, but at the outer limits of the imagination. But after years of extraordinary results in the experimental realm, high-performance computing (HPC) is becoming a reality for the everyday engineer. At least it has for Cornell Pump Co.

The more than 50-year-old company, based in Clackamas, OR, has seen a 100X performance increase in its upfront CFD simulations using real models. The performance is made possible by a combination of new HPC and motion modules available for CFdesign simulation software and cluster computing configured by R Systems on Windows HPC Server 2008.

“We now have the ability to go from 200 hours of compute time to generate a pump-curve simulation to only two hours,” says Andrew Enterline, design engineer at Cornell Pump.

Combined Expertise in Speed

The HPC project began when Cornell Pump got in touch with Brian Kucic, founder of R Systems, to find out how he might help speed up the pump curve simulations that Cornell uses to evaluate designs. Kucic contacted Blue Ridge Numerics, maker of CFdesign, to help optimize its software on R Systems’ HPC environment.

R Systems, a spin-off of the National Center for Supercomputing Applications (NCSA), is not the typical seller of server time. The company digs in deeper, partnering with clients and independent software vendors (ISVs) to determine the best configuration for maximizing the performance of their applications.

Blue Ridge Numerics is also in the business of speed, but from a different perspective. Its CFdesign software enables designers to put fluid flow and heat-transfer simulation up front in the product development process, cutting the time and expense associated with physical prototyping and testing, and enabling more time and resources for product innovation.

From 1.5X to 100X

It typically takes about 300 iterations to provide enough data for Cornell to evaluate a pump design. At about 1.5 iterations per hour before the HPC project, evaluation of a single design took around 200 hours.
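The baseline figure follows from simple throughput arithmetic. The short Python sketch below reproduces it from the numbers quoted in this article; the function names are illustrative only and are not part of CFdesign or any R Systems tooling.

```python
def hours_needed(iterations: float, iterations_per_hour: float) -> float:
    """Wall-clock hours to finish a run at a steady iteration rate."""
    if iterations_per_hour <= 0:
        raise ValueError("iterations_per_hour must be positive")
    return iterations / iterations_per_hour


def required_rate(iterations: float, target_hours: float) -> float:
    """Iterations per hour needed to finish within target_hours."""
    if target_hours <= 0:
        raise ValueError("target_hours must be positive")
    return iterations / target_hours


# Figures quoted in the article: about 300 iterations at about 1.5 per hour.
baseline_hours = hours_needed(300, 1.5)
# Rate implied by the two-hour pump-curve run Enterline describes.
target_rate = required_rate(300, 2)

print(f"Baseline: {baseline_hours:.0f} hours")
print(f"Rate implied by a two-hour run: {target_rate:.0f} iterations per hour")
```

Under these rounded inputs, a two-hour run implies roughly 150 iterations per hour, or 100 times the original rate.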

Blue Ridge engineers worked with Greg Keller, R Systems’ chief technology officer, to install CFdesign on an R Systems cluster, initially on a system with three nodes and 24 cores. Enterline then ran simulations for a centrifugal pump in development for the agricultural market. Initial results showed a 1.5 times speed-up. Enterline was happy with the results, as it would decrease computing time for each pump curve from about 200 hours to 136 hours. But Blue Ridge and R Systems had much loftier goals.

“In our testing with the new CFdesign HPC and motion modules, we saw very exciting performance gains,” says Ed Williams, Blue Ridge Numerics’ CEO.

After a few test jobs to verify performance and scalability, R Systems ran the simulations on eight nodes of its Microsoft Windows HPC 2008 cluster. The system included a Dell 1950 Intel 2.66GHz Harpertown Quad Core with 16GB of RAM per node, double data rate InfiniBand, 73GB of local disk space, and 1TB of global storage.

Test results showed that the 300 iterations typically needed to evaluate a design could be done in two hours with the combination of the new CFdesign HPC and motion modules and the R Systems cluster.

To an outsider, the 100X performance increase might sound suspect, but Williams knew what to expect.

“I told Cornell to expect about a 20X speed-up, but I thought that we would see more like 100X,” says Williams. “About 20X is coming from the new CFdesign algorithms in the motion module, a performance increase that customers would see on their desktop box as well. Cornell also got the 4-5X improvement ... combining the HPC module with R Systems’ HPC configuration, yielding the 100X improvement.”
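Williams' breakdown works because the two gains multiply rather than add. The sketch below checks that arithmetic in Python, on the assumption that the algorithmic and HPC speed-ups are independent factors; the helper name is hypothetical and the inputs are the rounded figures from the quote.

```python
from math import prod


def combined_speedup(*factors: float) -> float:
    """Overall speed-up when independent speed-up factors are stacked."""
    return prod(factors)


ALGORITHM_GAIN = 20        # new motion-module algorithms, per Williams
HPC_GAIN_RANGE = (4, 5)    # HPC module on the R Systems cluster, per Williams
BASELINE_HOURS = 200       # pre-project time for one pump curve

low = combined_speedup(ALGORITHM_GAIN, HPC_GAIN_RANGE[0])
high = combined_speedup(ALGORITHM_GAIN, HPC_GAIN_RANGE[1])

print(f"Combined speed-up: {low}X to {high}X")
print(f"Run time: {BASELINE_HOURS / high:.1f} to {BASELINE_HOURS / low:.1f} hours")
```

At the top of the range, 20X times 5X gives the 100X figure and brings the 200-hour baseline down to about two hours.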

HPC: No Longer a Big Cost

Although the dramatic results were aided greatly by R Systems’ expertise and hardware systems, Williams says that even small, inexpensive clusters can generate major performance improvements.

“HPC brings huge value and offers many configuration alternatives for companies of any size,” he says. “Even a modest two-computer Windows HPC mini-cluster with our HPC module creates enough horsepower to speed up solution times by 400 percent.”

More Time Designing

For Cornell Pump, the performance increases from HPC and new rotational algorithms for CFdesign will have major implications for development of new pumps. Beyond cutting computer simulation time from weeks to hours, the speed-up is expected to reduce the number of pump castings that must be made for physical testing.

A physical test for a Cornell pump typically requires five weeks to make a casting pattern, six or seven weeks of foundry time to make the parts, a couple of weeks to machine the parts, and a week or two for testing. Eliminating just one of the physical tests could save as much as three months of development time.
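Adding up the stages gives a sense of what one physical test cycle costs. The Python sketch below totals the durations quoted above; reading "a couple of weeks" as exactly two is an assumption made here for illustration.

```python
# (stage, minimum weeks, maximum weeks), taken from the figures in the article.
# "A couple of weeks" for machining is read as 2 weeks (assumption).
STAGES = [
    ("casting pattern", 5, 5),
    ("foundry time", 6, 7),
    ("machining", 2, 2),
    ("testing", 1, 2),
]


def total_weeks(stages):
    """Return the (shortest, longest) total duration in weeks."""
    shortest = sum(stage[1] for stage in stages)
    longest = sum(stage[2] for stage in stages)
    return shortest, longest


shortest, longest = total_weeks(STAGES)
print(f"One physical test cycle: {shortest} to {longest} weeks")
```

On these assumptions a full cycle runs 14 to 16 weeks, which is in line with the savings of about three months cited for eliminating a single test.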

“With much faster running speeds, we will be able to do many more simulation runs in less time,” says Enterline. “We’ll spend more time designing the product and less time and money in pattern rework, re-pouring castings, and physical development testing—helping us create more optimized product designs from the start.”

More Info

Blue Ridge Numerics

Charlottesville, VA

Cornell Pump Co.

Clackamas, OR

R Systems

Champaign, IL

Bob Cramblitt is a writer based in Cary, NC, who focuses on technologies that make major differences in the way companies design, engineer, and manufacture. Send e-mail about this article to [email protected].

