Choose the Right Workstation Hardware for Maximum Performance and Value

The HP Z1 was billed as the first all-in-one workstation when it was released in 2012. It features a custom line of NVIDIA Quadro GPUs designed especially for all-in-one workstations.

June 1, 2014

Editor's Note: This is the first part of Desktop Engineering's series on high-performance computing options.

Fighter pilots have a saying, “Speed is Life,” yet there is a lot more to speed than just going fast, something that engineers have found out when it comes to the design process. Today’s engineers are realizing that a whole host of technologies must be integrated to deliver productivity and are quickly discovering that effectively performing CAD/CAM and simulation work takes more than just clock cycles on a workstation. In other words, it takes more than just raw speed to maximize performance.

However, many engineers are still following the pied piper of advertising and selecting their workstations based upon marketing speeds and feeds, and not the actual components. While some of that ignorance can be attributed to vendors and manufacturers not providing enough actionable information about their technologies, most of the blame lies squarely with the individuals specifying systems and ultimately purchasing the wrong tool for the job.

Luckily, this issue can be solved by replacing ignorance with knowledge about workstation hardware and then performing the appropriate due diligence necessary, before signing off on a purchase order; a process that usually begins with the engineers seeking the best tools for the tasks at hand. Those “best tools” come in a variety of configurations, and selecting the appropriate configuration takes knowledge, research and validation. After all, the ideal solution should add up to more than just the sum of its parts and should deliver the desired performance level, but not forsake economy.

Workstations vs. High-Performance PCs

In the past, it was pretty easy to distinguish a workstation from a PC. Workstations tended to be power hungry processing appliances that delivered the raw power needed to do complex mathematical tasks and deliver engineering insight into complex problems. Those workstations often ran specialized operating systems, leveraged specialized hardware subsystems and proved to be very costly to own and operate.

On the other hand, PCs were thought of as business machines, used for simpler tasks, including data entry, word processing and perhaps even some mathematical analysis using software such as MS Excel or a desktop database. However, Moore’s law came into play and PCs became powerful tools in their own right, sporting high-performance CPUs, top-notch graphics cards, high-speed storage devices and oodles of fast memory. A high-performance PC began to look like a poor man’s workstation, blurring the line between a dedicated workstation and a power user’s PC.

“With processor technology and storage capacity on the rise, many of today’s high end PCs are able to effectively process the work loads of previous generation workstations,” says NVIDIA’s Senior Technical Marketing Manager, Sean Kilbride. “However, workstations tend to have several design advantages over high-powered PCs, the primary elements being reliability and expandability.”

Kilbride is referring to the fact that most workstations are designed to run 24/7 under heavy loads and incorporate higher quality hardware to increase reliability. Donald Maynard, senior product manager at Dell, explains how enhanced reliability differs on workstations: “Dell’s Precision Workstations incorporate Reliable Memory Technology, which automatically detects failures in DIMMs [dual in-line memory modules] and removes that section of memory from use, allowing engineers to continue to use the workstation in the event of a partial memory failure, where PCs would probably just become inoperative.”

Maynard adds, “Many of Dell’s Precision Workstations also support ease of expansion. For example, our dual socket machines can be shipped as single socket units, and later upgraded with an additional CPU. Dell’s design attaches memory to the CPU, so adding a second CPU also increases system memory.”

Maynard and Kilbride point out that while high-end PCs can do many of the routine chores engineers have come to expect from a workstation, workstations still offer advantages when it comes to working with engineering problems.

CPUs, GPUs and APUs

Just a short time ago, the CPU was considered to be the primary component for judging performance. However, CPUs have evolved, and other technologies have come into play, such as multiple cores, GPUs, APUs and larger caches, that help make CPUs more efficient and even shift much of the processing load away from the traditional CPU.

These CPUs, GPUs and APUs come from chip manufacturers such as Intel, AMD and NVIDIA, and each company offers what it feels is the ideal technology for maximum processing power. Choosing a CPU remains an important consideration, but determining how that CPU directly benefits the task at hand has become trickier. The focus has shifted to selecting the proper workstation for a dedicated task, as opposed to selecting processors that may offer the most versatility across multiple types of projects.

Intel’s Segment Manager for Workstations, Wes Shimanek, says, “Having a workstation with a lot of processing cores and memory is critical for simulation work; however, you must make sure that you have the bandwidth to feed the processors.”

Shimanek adds, “The Intel Xeon processor is designed to move data around, and when it comes to high-end processes, such as ray tracing and data visualization, those processes and their related algorithms are best run on a CPU-based system, as opposed to GPU systems.”
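Shimanek’s point about feeding the processors can be made concrete with a rough peak-bandwidth estimate. The sketch below relies on assumptions that do not come from the interviews: memory running at 1866 million transfers per second (matching the 1866MHz RAM cited for the Dell Precision T7610), four 64-bit memory channels per socket, and 12 cores per socket. The function names are illustrative, and real sustained bandwidth will be lower than this theoretical peak.

```python
def peak_memory_bandwidth_gbs(transfers_per_s, channels, bus_bytes=8):
    """Theoretical peak memory bandwidth in GB/s (1 GB = 1e9 bytes).

    transfers_per_s: memory transfer rate, e.g. 1866e6 for 1866 MT/s
    channels:        memory channels per socket (assumed, not from the article)
    bus_bytes:       bytes moved per transfer per channel (64-bit bus = 8 bytes)
    """
    return transfers_per_s * bus_bytes * channels / 1e9


def bandwidth_per_core_gbs(total_gbs, cores):
    """Share of the peak bandwidth available to each core if all are busy."""
    return total_gbs / cores


# Assumed configuration: 1866 MT/s, four channels, 12 cores per socket.
socket_gbs = peak_memory_bandwidth_gbs(1866e6, channels=4)
per_core = bandwidth_per_core_gbs(socket_gbs, cores=12)
print(f"Peak per socket: {socket_gbs:.1f} GB/s, per core: {per_core:.2f} GB/s")
```

Under these assumptions each core gets only a few gigabytes per second when every core is active, which is why adding cores without adding memory bandwidth can leave a simulation workload starved for data.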

Kilbride offers a different take on performance, “Our Maximus technology combines NVIDIA’s Quadro GPU technology with NVIDIA’s Tesla companion processor—a combination that allows engineers to perform graphics and compute intensive processing at the same time on their workstations.”

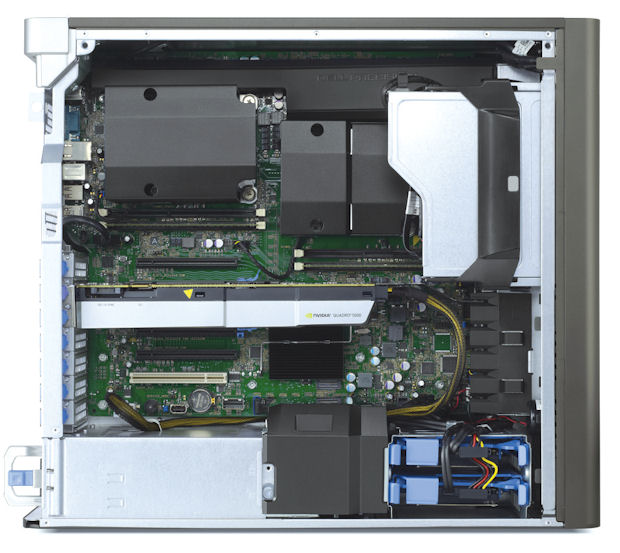

The Dell Precision T7610 Workstation can house up to two Intel Xeon Processor E5-2600 v2s with up to 24 cores (12 per processor), up to 512GB of 1866MHz ECC RAM and up to three graphic cards.

Kilbride added, “Maximus frees the system CPU to carry out all the functions that it needs to do, while Maximus accelerates rendering and other processes using GPU technology. The end result proves to be significant time savings and the ability to work with ever more complex engineering problems.”

NVIDIA claims that Maximus offers significant processing gains for applications that use the technology. For example, NVIDIA claims a 21x performance increase for Adobe Premiere and 39x increase for Adobe After Effects.

However, Intel and NVIDIA are not the only companies paving the path to high performance. AMD offers its APUs (Accelerated Processing Units) under its FirePro brand. AMD’s Demir Ali, senior field application engineer, says, “The AMD FirePro brand graphics cards are optimized and tuned to work with high end design applications and offer innovations such as the ability to work with six displays concurrently.”

Matthias Willecke, AMD senior business development manager, adds, “The FirePro GPUs can send data in both directions at full PCI Express 3.0 speed. Furthermore, the memory bandwidth of the AMD FirePro has increased from 64GB/s to 103GB/s, which allows large CAD models to be loaded faster. Also, the architecture supports performing computational tasks in parallel for graphics operations, which delivers significant advantages for simulations.”

Obviously, these different takes from primary vendors in the CPU/GPU/APU market space can create uncertainty as to which technology to choose. That said, decisions can be simplified by thoroughly researching what software works with what hardware and choosing appropriately. After all, you can purchase the fastest technology available, yet that technology becomes worthless if it is incompatible or not supported by the primary engineering applications you use.

Storage and Memory

There is more to workstation selection than just processing power, GPU performance and software vendor recommendations. A whole host of other components can add to or detract from the efficiency of processing complex computational tasks on workstations. Take, for example, the role system memory plays in computational operations, where both the quantity and the quality of RAM make a difference.

“A workstation is much more than the sum of its parts. Important configuration concerns should be addressed when selecting a workstation, such as the usage of ECC (Error Correction Code) RAM,” says Brett Newman, who is in HPC sales and marketing at Microway. “ECC RAM can detect and correct single bit errors, which prevents memory corruption and possible system failures.”

Because professionals use their computers more, they are more likely to be affected by RAM errors. Simply put, technologies such as ECC RAM improve reliability and can prevent computational errors. Many consumer-level computers don’t support ECC RAM, but professional workstations do.
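To show how a memory word can detect and correct a single flipped bit, here is a toy sketch using the classic Hamming(7,4) code. This is an illustration only: it protects 4 data bits with 3 parity bits, whereas ECC memory typically protects 64-bit words with wider codes, and nothing here reflects any specific vendor’s implementation. All function names are hypothetical.

```python
def hamming74_encode(data):
    """Encode 4 data bits into a 7-bit codeword.

    Codeword positions 1..7 hold: p1 p2 d1 p3 d2 d3 d4
    """
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4  # parity over positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # parity over positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_correct(codeword):
    """Return (corrected codeword, error position), position 0 = no error."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    position = s1 + 2 * s2 + 4 * s3  # syndrome names the flipped position
    if position:
        c[position - 1] ^= 1  # flip the faulty bit back
    return c, position


def hamming74_data(codeword):
    """Extract the 4 data bits from a 7-bit codeword."""
    return [codeword[2], codeword[4], codeword[5], codeword[6]]


# Simulate a single-bit memory error and recover from it.
stored = hamming74_encode([1, 0, 1, 1])
corrupted = list(stored)
corrupted[4] ^= 1  # flip the bit at position 5
repaired, where = hamming74_correct(corrupted)
print("error at position", where, "-> data", hamming74_data(repaired))
```

The extra parity bits are the reason ECC modules carry more memory chips than non-ECC modules of the same capacity: reliability is bought with redundancy.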

But how much RAM to specify? Here, the age-old axiom of “more is better” is not always true—especially when there are budget considerations. For most engineers, following the recommendation guidelines set forth by software manufacturers proves to be one of the best places to start.

For example, SolidWorks specifies a minimum of 1GB RAM, but suggests 6GB. Yet 6GB may not be the best choice, especially if other applications are going to be run. Considering how inexpensive memory has become, perhaps the best guideline is to buy a bit beyond the recommended amount to help future-proof the workstation.

However, one should always leave room for expansion. In other words, if a workstation comes with 32GB RAM, check to see that it can be upgraded to more at a later date. Adding fuel to the RAM purchasing fire, you also need to consider how an application is being used. Many factors can create the need for additional RAM and even more graphics processing horsepower. Working with large assemblies and complex parts (such as plant and routing design), rendering, and running first-pass finite-element and kinematic analyses can all have an impact on the amount of RAM or processing power needed.

Those considerations aside, there is still one more important configuration element to consider when selecting a workstation for advanced design work: storage. Until recently, solid state drives were thought of as too expensive and too small in capacity to be useful for professional workstations, where very large capacity, high-speed SAS (Serial Attached SCSI) HDDs ruled the day. However, SSDs have fallen in price and risen in capacity while achieving high levels of reliability. Still, engineers should not just rush out and buy any old SSD. There are significant differences between consumer-level and enterprise-level SSDs, namely performance and endurance.

Naturally, performance is important, but it is not just a single measurement of throughput. One has to take into account both sustained and random read/write speeds, which may differ across brands and models. However, there are limits to overall performance, as seen in the bottleneck presented by the SATA III interface, which tops out at 6Gbit/s (roughly 600MB/s).
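The 6Gbit/s and 600MB/s figures differ by a factor of 10 rather than 8 because of line encoding. The minimal sketch below assumes 8b/10b encoding, as used on SATA links, in which every 8 bits of data travel as 10 bits on the wire. The function name is illustrative, and protocol overhead beyond the encoding is ignored.

```python
def payload_mb_per_s(line_rate_gbit, data_bits=8, coded_bits=10):
    """Usable throughput in MB/s for a serial link with line encoding.

    line_rate_gbit: raw signaling rate in Gbit/s
    data_bits / coded_bits: encoding ratio (8b/10b assumed by default)
    """
    payload_bits_per_s = line_rate_gbit * 1e9 * data_bits / coded_bits
    return payload_bits_per_s / 8 / 1e6  # bits -> bytes -> megabytes


for rate in (1.5, 3.0, 6.0):
    print(f"{rate} Gbit/s link -> {payload_mb_per_s(rate):.0f} MB/s payload")
```

An SSD whose flash can stream data faster than this ceiling gains nothing from the extra speed on such a link, so the interface deserves as much attention as the drive’s own specifications.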

The importance of performance can be quickly overshadowed by the importance of reliability—where endurance is becoming exceedingly important for advanced computational workloads. One should expect the same level of reliable performance throughout the full life of the SSD and be confident that all the data is safe. Unfortunately, endurance is very hard to quantify, made harder by the fact that there is no industry measurement standard.

Drives are often rated in terms of Terabytes Written (TBW) or Program and Erase (P/E) cycles. The two can be linked by the Write Amplification Factor (WAF). Both ratings, however, prove to be almost meaningless in determining the true, expected lifespan of an SSD. Engineers should find some comfort in the five-year warranty offered on most professional SSDs, while the majority of mainstream consumer SSDs offer only a single year of protection.

What’s more, SSDs offer additional benefits over mechanical drives beyond raw read/write performance gains. The biggest of these comes in the form of heat output: SSDs generate very little heat and consume significantly less power, thanks to a design that has no moving parts.

SSDs are also exceptionally quiet and can significantly help in keeping work areas quieter and potentially more productive. Traditional HDDs are noisy by comparison, and drives running full time in a RAID array add to the overall noise of the workstation while increasing the heat load that the CPU, GPU and other cooling fans must handle.
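The link between the two ratings can be sketched with a commonly used approximation: total host writes equal drive capacity times rated P/E cycles, divided by the write amplification factor. Every number below is hypothetical and chosen only for illustration, and the function names are not from any vendor tool. Because WAF changes with the workload, the result is an estimate rather than a prediction.

```python
def estimated_tbw(capacity_gb, pe_cycles, waf):
    """Approximate host terabytes written before the flash wears out.

    capacity_gb: drive capacity in GB (1 GB = 1e9 bytes)
    pe_cycles:   rated program/erase cycles per flash cell
    waf:         write amplification factor (flash writes / host writes)
    """
    return capacity_gb * pe_cycles / waf / 1000  # GB -> TB


def estimated_years(tbw, host_writes_gb_per_day):
    """Years until the TBW budget is consumed at a steady daily write rate."""
    return tbw * 1000 / host_writes_gb_per_day / 365


# Hypothetical drive: 512 GB, 3,000 P/E cycles, same drive at two WAF values.
for waf in (1.5, 4.0):
    tbw = estimated_tbw(512, 3000, waf)
    years = estimated_years(tbw, host_writes_gb_per_day=100)
    print(f"WAF {waf}: about {tbw:.0f} TBW, {years:.1f} years at 100 GB/day")
```

The same hypothetical drive yields very different endurance figures depending on the WAF assumed, which helps explain why a single rating says so little about real-world lifespan.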

More Than its Parts

The plethora of technologies, applications and choices has made it difficult for engineers to pick the best workstation for their purposes. However, focusing on the critical elements and observing how a workstation performs as a whole should help to narrow down the selection process. For engineers, it all comes down to using the right tool for the job and resisting the economic pressure to buy inferior tools just to save a few dollars upfront.

About the Author

Frank Ohlhorst is chief analyst and freelance writer at Ohlhorst.net. Send e-mail about this article to [email protected].
