Cutting the Cord

Virtual and augmented reality tools are changing the human/computer interface. But will designers benefit?

December 1, 2010

By Brian Albright

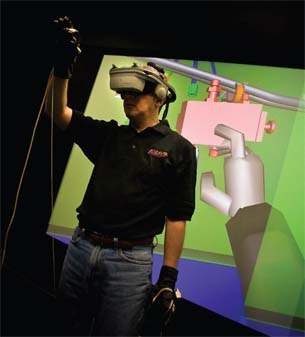

Virtual reality is already being used by engineers to help make better design decisions by interacting with their designs. Image courtesy of NIAR.

For years, technology companies have been moving us closer to a “Star Trek” vision of the human-computer interface, where computers respond to hand signals and voice commands, and people can interact with virtual objects.

Virtual reality (VR) has, well, been a reality in design and engineering for a number of years, often used in product development and design review. Users armed with sophisticated 3D glasses can interact with models on a PC, a powerwall or in a cave automatic virtual environment (CAVE). New advancements in ray tracing have made it possible to create highly realistic virtual prototypes, and major CAD and product lifecycle management (PLM) players offer visualization tools that give companies a more realistic way to review designs before investing in a physical prototype.

This evolution has been enabled by the increase in computational and graphics power of design applications, and the hardware on which they run.

“What used to take hours now takes under a minute, and it can be completely interactive,” says Phil Miller, director of product management for professional software at NVIDIA. “That’s changing what’s possible. Instead of waiting over lunch to see a rotation, you can play with the model and see the results interactively.”

But while the technology used to generate virtual prototypes has reached new levels of sophistication, the way designers and engineers interact with these models hasn’t changed much. New interface technologies have been developed in the VR space—haptic gloves, 3D headsets, motion control sensors—but for the most part, designers still work with a mouse and keyboard.

“The mouse and keyboard are quite powerful, that’s the thing,” says Austin O’Malley, executive vice president of R&D at Dassault Systèmes SolidWorks. “The entire office and desktop are designed around it. Most computer applications are not developed for use with other inputs, and they require a keyboard or mouse to augment those input devices if you need to input a lot of data or get a precise selection. They really don’t blend well with other devices, and that’s why other input devices don’t get the kind of usage you might think they would. They are not good at inputting text.”

That could be about to change. With gaming applications and advanced mobile phones like the iPhone and Android devices driving down the price of the technology, and processing speeds increasing, more designers may be able to cut the cord on their mouse—at least some of the time.

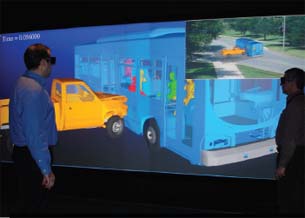

3D allows designers to see their designs in a different way. Image courtesy of NIAR.

With a more natural way to interact with models, designers could get a much better idea of how objects will look, feel and operate in the real world. They could also potentially spot design issues earlier in the process by seeing what impact an object will have on its real-world environment.

“Can we review the design together and more naturally interact with it, rather than constantly walking over to a keyboard?” O’Malley says. “Touch devices and 3D viewing devices are interesting examples of how people could collaborate in the not-too-distant future.”

While virtual reality and 3D are now used for visualizing and simulating design, future technologies may allow engineers to easily access virtual front-end design tools. Image courtesy of NIAR.

What will ultimately drive adoption, however, will be a combination of low cost and utility.

“You have to offer a magnitude of difference in terms of efficiency to move away from that paradigm,” says Sandy Joung, senior director, product marketing at PTC. “There has to be a compelling benefit, and from there it will come down to ease of use and interoperability.”

Out of the Lab

James Oliver, professor of mechanical engineering and director of the Virtual Reality Applications Center (VRAC) at Iowa State, says there is a significant opportunity for new interface technologies to improve the early stages of design work.

“There is an opportunity gap, from a researcher’s point of view,” he says. “Not a lot has gone into the front end of design, in particular conceptual design. We still have engineers using whiteboards and Excel spreadsheets and the backs of napkins.”

At the VRAC, researchers are already testing some of the more outré human-computer interface options, including haptics, touchscreens and motion tracking, sometimes using repurposed equipment from gaming applications. The U.S. Air Force, Boeing, Rockwell, John Deere and other companies leverage the VRAC for product development and manufacturing simulations.

A lot of this work is focused on simulations and prototyping, but Oliver says there is also a focus on that early part of design. Decisions made in the early stages of the process can lock companies into a lot of expense later in the process. At this stage, designers are usually more interested in whether option A is better than option B than in detailed analysis. But by allowing them to quickly manifest 3D renderings using a library of existing parts data, Oliver says designers “can keep more concepts alive in the pre-design process before they are cut into stone with a full CAD development.”

Fernando Toledo, manager of the Virtual Reality Center at the National Institute for Aviation Research (NIAR), says VR tools are helping manufacturers work through design reviews by reducing the number of physical models that must be created.

CyberGlove Systems’ products include support for a number of CAD applications.

“Using virtual reality, you can test different configurations of overhead bins, for example,” Toledo says. “You can see different shapes and styles. These things would take too long if you had to build physical models. But it took a while for engineers to see that it could help them to improve design decisions.”

Beyond the Keyboard

Many of the interface tools used in VR and augmented reality (AR) environments have become more sophisticated and less expensive. What used to require a $1 million investment in equipment can now be done 20 times faster for less than $20,000, thanks to PC clusters and other advancements.

“The gaming community drives the costs to a very reasonable level,” Oliver says. “Who would ever guess that we’d be using Wii remotes and balance boards for interfaces to do real work?”

In the design review stage, companies sometimes use powerwalls or fully immersive CAVE systems to see how an object will look in 3D, using head-mounted displays (HMDs). “You can have a multi-functional team in the same room,” Toledo says. “Car manufacturers and aircraft manufacturers like those environments, because they are very collaborative.”

Sidebar: Anytime, Anywhere Computing

Dubbed SixthSense, the system uses an off-the-shelf digital projector and a Webcam to project images onto any surface and follow the user’s gestures. Both units are built into a small, wearable device connected to a mobile computer. The entire system cost just $350 to build.

To take a digital photo, users snap their fingers. A drawing application allows users to draw on any surface by tracking their index fingers. The system also recognizes certain symbols to open applications. Drawing a magnifying glass in the air with your fingers will open the map application, for example, while drawing an @ symbol will open an e-mail program. SixthSense also supports multi-touch and multi-user interaction.

The system could potentially provide a higher degree of environmental interactivity for users. By “snapping a photo” of a book, for instance, users can search for reviews or price information online.

Although cost is still an obstacle, some lower-priced alternatives are now entering the market. Information can be transferred from a PC to an HDTV, for example, and viewed as a 3D image using stereoscopic glasses. Toledo says a company called RealD offers a product called the POD that can display images from a typical graphics card on an HDTV. Viewers can see the stereo 3D image by using the company’s glasses.

“You can use an HDTV for a fraction of the cost of a powerwall,” Toledo says. “You can have five or 10 people involved, and that lowers the cost and puts more companies in the game.”

Interacting with those models, however, is another matter. Most of these systems still rely on the mouse/keyboard combo—although Oliver notes that VRAC students are using iPhones as an interface in these full-scale, immersive environments.

And then there are touchscreen systems and motion-capture solutions that promise to provide greater interactivity at a slightly smaller scale.

Several years ago, Microsoft announced Surface, a multi-touch technology that allows users to manipulate content using gesture recognition. Because it uses cameras for input, users can utilize their hands as input devices, or even other non-digital objects like paint brushes on the tabletop’s acrylic display.

Surface failed to really take off, however, in part because it was expensive and bulky. Now, Microsoft has announced LightSpace, which takes the Surface concept further by projecting a display interface across an entire room, allowing users to “grab” a file or icon on one surface and drag it across the room to another area using their hands.

For design reviews or collaborative projects, technologies like LightSpace might provide less-costly alternatives to the CAVE or powerwall by combining vision, gesture and immersive computing.

As for commonly available touch interfaces, they can be used to manipulate existing CAD models and 3D prototypes, or to mark up drawings during review.

“You could use your finger or a stylus to create a geometry, and that may be faster than the keyboard/mouse combination,” Joung says. “We have tablets that you can ‘write’ on that way now, but we need to have interfaces in the software so that what you scribbled can be understood by a CAD application.”

But will designers use touch technology to develop digital models in the same way they use their hands to make clay models? Both touch and multi-touch have been demonstrated in a CAD environment. SpaceClaim, for example, announced multi-touch support for its 3D direct modeling software last year, adding gesture support that can replace toggle-key or keyboard shortcut commands. Last year, students at Cambridge University (working with SolidWorks) developed a touchscreen design application on the DiamondTouch table as part of a research project that allowed multi-user model construction without the benefit of a keyboard or mouse. But it remains to be seen whether designers will take their hands off the keyboard.

Sketching applications like Autodesk’s SketchBook Pro, which supports the iPad and iPhone, are already available. It seems, however, that touch may have more utility in the design review and simulation process. Siemens, for one, has demonstrated its NX product running on an iPad via a client/server model. “You can pull up the model, and drill into the [product lifecycle management] data,” says Paul Brown, director of NX marketing at Siemens.

There are also opportunities in the mobile space, where small, touch-based phones could provide access to models for other applications.

“Right now, the key is getting access to data and repurposing it for other uses,” O’Malley says. “If you can pair up the 3D output with a mobile device for people doing things like working on the shop floor, that would be quite useful.”

Accessorizing in a Virtual World

Virtual environments employ a number of alternative interface technologies that allow users to interact with models in interesting ways, and VR-based gaming technologies have helped make these systems more accessible, easier to use and less expensive.

HMDs that provide stereoscopic 3D viewing are now much more comfortable to wear, and have improved graphics capabilities. Combined with wired gloves, these systems can allow users to manipulate models in real time.

Sidebar: Computers that Know What’s on Your Mind

Over the summer, chipmaker Intel demonstrated software that uses brain scans to read minds. The software can determine with 90% accuracy which of two words a person is thinking by analyzing which parts of the brain are being activated while the subject concentrates on a specific word. Right now, the system can only distinguish a limited number of words, and relies on a massive (and expensive) MRI scan to accomplish the task. The hope is that the technology can evolve and be used to help disabled people communicate, for example.

Intel joins Honda and the U.S. Department of Defense in the quest to develop thought-controlled computing technology. Honda’s Brain Machine Interface (BMI) is a helmet that uses electroencephalography (EEG) technology and near-infrared spectroscopy to control robots using thought alone. Users concentrate on moving one of four pre-determined body parts, and the BMI system measures changes in their brain waves and cerebral blood flow. This information then triggers one of Honda’s ASIMO robots to make the corresponding movement, such as raising an arm or a leg.

The Army, meanwhile, awarded a $4 million contract to researchers at the University of California at Irvine, Carnegie Mellon and the University of Maryland to help develop a helmet that would allow soldiers to communicate with each other using their minds. Called “synthetic telepathy,” the process would rely on a network of sensors that would allow direct mental control of military systems. Initially, the Army hopes to be able to capture brain waves that can be translated into audible radio messages, providing a silent method for troops to communicate with each other.

As is often the case, though, the first neurotech-based interface has come from the gaming community. NeuroSky released an EEG-based controller called MindSet in 2007, and is following up with a device called MindWave; OCZ Technology entered the market with a Neural Impulse Actuator in 2008, and Emotiv has also released EPOC, an EEG-based gaming headset the company claims will make it possible for users to control video games using their brain waves and facial expressions.

“You can sense depth in the model and see what’s there, instead of an abstract overlay or a 2D representation,” Miller says. “That extra level of depth gives you insight into the model that normally would take multiple views. You can see it more quickly, and it’s a more natural way to work.”

Canon has done a significant amount of work on HMDs for virtual and augmented reality (in Canon’s lexicon, mixed reality) applications, and showed off its headgear at the Canon EXPO in September. Canon’s equipment is in use in Japan, where the National Museum of Nature and Science has launched a virtual dinosaur exhibit. The company also uses the technology in its own design processes.

“They’ve been quite focused on this idea of being able to mix virtual design with the physical environment to see how it all works,” Brown says.

Haptics is another emerging (but somewhat expensive) interface possibility. At the VRAC, researchers are using haptics to simulate the manual assembly processes used by many large manufacturers. “It’s a major effort to lay out a new line, so by assessing the ergonomics and feasibility of doing a particular process virtually, there are many issues that can be resolved digitally in the manufacturing process,” Oliver says.

A number of other companies offer gloves for motion-capture applications and gaming, but haptic gloves that provide tactile and force feedback are also available. CyberGlove Systems, for example, offers exoskeleton-style equipment that can provide force and tactile feedback either as an add-on to its motion-capture gloves, or as complete armatures. The company supports Autodesk applications, and recently formed a partnership with haptic hardware and software provider HAPTION to provide real-time physics calculations for manipulation within a VR or CAD environment. HAPTION’s support will enable the use of the CyberGlove with Dassault Systèmes’ CATIA, Delmia, Virtools and SolidWorks systems.

VR has greatly improved the ability to evaluate and simulate objects and real-world environments, but augmented reality may prove to have even more utility for manufacturers and designers. In VR scenarios, users typically strap on a head-mounted display to see the virtual environment. “The problem with that is you essentially disassociate the users from the rest of their body,” the VRAC’s Oliver says.

With augmented reality, virtual elements are added to a real environment, typically by adding virtual objects to a photo or adding a 3D image into a real space via an HMD. In design, that could include adding a CAD model to a real piece of equipment to see exactly how it would fit into a natural environment. One manufacturer the VRAC is working with, for example, wants to use a display on the assembly line showing a video feed of the employees’ hands and the components they are working with. A “ghost” of the next part to be added can be placed in the image of the actual part, replacing the written workflow instructions currently used on the line.

InfiniteZ offers a visualization system called zSpace that allows users to interact with digital objects using natural movements. The system consists of head-tracking software, a stylus, glasses and a stereoscopic projector. Canon’s mixed-reality project also falls into this category.

“There is a lot of opportunity with large structural work,” Brown says. “Things like ships or planes—large infrastructure where there is a lot of piping and tubing, and you’re trying to visualize how a new system would fit in amongst all of the existing infrastructure. How do you maintain the new equipment and remove it? Being able to do that without having to have special lighting conditions will be key.”

Barriers to Adoption Remain

Other research is under way that could incorporate gaming-style motion control and eye tracking into business applications. Even further out is the work being done on computers that could potentially respond to human brainwaves (see the sidebar “Computers that Know What’s on Your Mind”).

Oliver says there is also work under way to improve navigation within virtual environments like CAVEs.

“One of the hardest things to simulate is ambulatory capability, the idea of walking around a facility,” he says. “If you are in a CAVE, you can walk in a square, but if you’re designing a building, you want to keep going to the next room.”

There are methods being developed to allow that type of motion—using omni-directional treadmills, for example.

But even for the technology currently available, hardware complexity and cost have kept many new interfaces out of the mainstream.

“The visualization systems are still costly,” the NIAR’s Toledo says. “That cost has dropped in the past few years, but it’s still high enough to be a barrier.”

The most likely technologies to gain ground in design are touchscreen/multi-touch systems, as well as more streamlined HMDs and other VR tools that can be used for prototyping and simulations.

The bottom line is that for any new interface to gain acceptance, there has to be a clear productivity benefit, the ability to enable new applications, or both.

“The ability to use design data in other contexts is a great thing,” DS SolidWorks’ O’Malley says. “People who buy CAD systems spend more money on their data than on their CAD software. If that data can be accessed through other devices, then rather than being locked up for the designers, it could be used for other things like marketing or assembly.”

Of course, without a clear business case, some exotic new interface devices will remain on the toy shelf rather than in the digital toolbox.

“Some things look really cool, but they are not financially viable,” Brown adds. “They may look great, but they’re a solution waiting for a problem.”

More Info:

Canon

CyberGlove Systems

NIAR Virtual Reality Center

NVIDIA

PTC

RealD

Siemens

SolidWorks

VRAC

Brian Albright is a freelance journalist based in Columbus, OH. He is the former managing editor of Frontline Solutions magazine, and has been writing about technology topics for 14 years.

About the Author

Brian Albright is the editorial director of Digital Engineering.