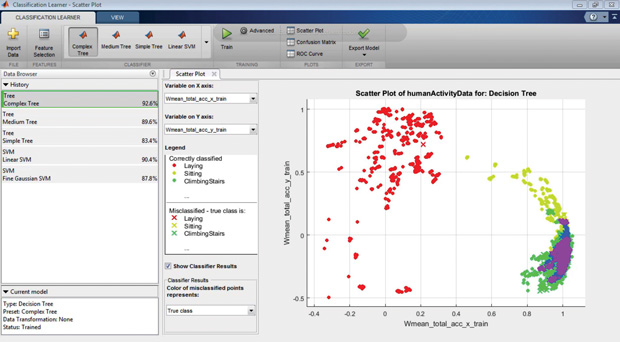

The Classification Learner app makes it easy to train models using supervised machine learning, and to export classification models to the MATLAB workspace. Image courtesy of MathWorks.

December 1, 2016

As the Internet of Things (IoT) makes its way from talk to action, the emphasis is shifting away from connectivity to data analytics, giving rise to new design paradigms and workflows that seem outside the scope of mainstream engineering.

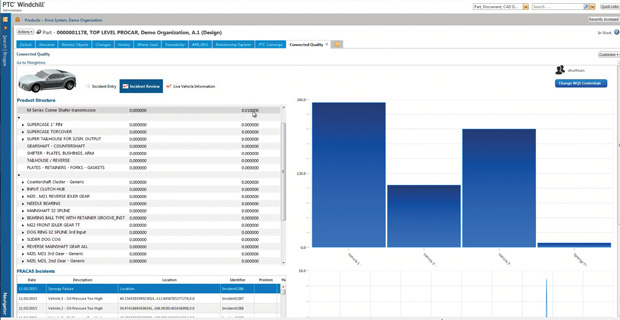

By way of an embedded ThingWorx mashup, design engineers can tap into data on incident and part failure rates on a fleet of cars to improve the quality of future vehicles. Image courtesy of PTC.

IoT, coupled with the general trend toward digital business transformation, is spawning a new data economy where make-or-break success is based on the ability to spin a data deluge into golden insights that will direct decision-making throughout all areas of the business. This so-called Big Data wave is empowering companies in a variety of ways, from targeting the right product to the right audience at the exact right moment to drive sales, to feeding supply chains with real-time intelligence that alters logistics or sourcing strategies for operational and cost efficiencies.

The data economy’s latest target is product development, where it promises to open up a treasure trove of insights that, if channeled properly, can help companies more effectively design, manufacture and service products. While engineers have long had access to simulation data and customer requirements specific to an evolving product design, they’ve lacked real, quantitative information about how a product is used and how it performs in the field, beyond anecdotal feedback or, in some cases, information gleaned from the warranty process.

“The whole idea behind smart, connected products is before you were blind and now you’re not,” explains Michael Risse, vice president and CMO at Seeq, a platform for industrial process analytics. “The goal is to inform the design engineer so they can make better products based on actual customer data.”

Analytics Early Days

The emergence of IoT analytics capabilities, coupled with the concept of a digital twin, seeks to give engineers insights that go beyond marketing input or ad hoc customer feedback to affect the outcome and design of future products. It’s still early days, however. According to a Forrester Research study commissioned by Xively, 51% of companies are actively collecting data from connected products, but only 33% are currently using it to create actionable insights.

The most often cited use case for IoT analytics is predicting part failure and, eventually, supporting prescriptive maintenance practices that help avoid potential problems and product downtime in the first place. PTC is one of the foremost champions of IoT and predictive service applications with its ThingWorx platform, which collects data from products and industrial plant floor equipment and applies analytics and machine learning to distinguish regular behavior from a worrisome anomaly, explains Graham Birch, senior director of Solution Management at PTC.
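To make the idea of separating regular behavior from an anomaly concrete, here is a minimal Python sketch. It is not how ThingWorx works internally; it simply assumes a set of baseline readings taken during normal operation and flags any new reading that sits more than a chosen number of standard deviations from the baseline mean. The function name, the flow-rate values and the threshold are all hypothetical.

```python
from statistics import mean, stdev


def flag_anomalies(baseline, readings, threshold=3.0):
    """Return (index, value) pairs from `readings` that deviate from the
    baseline mean by more than `threshold` baseline standard deviations."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        raise ValueError("baseline has no variation; z-scores are undefined")
    return [
        (i, x)
        for i, x in enumerate(readings)
        if abs(x - mu) / sigma > threshold
    ]


# Hypothetical pump flow rates (liters per minute) during normal operation
baseline_flow = [50.1, 49.8, 50.3, 50.0, 49.9, 50.2, 49.7, 50.0]
# Hypothetical new field readings; 42.5 stands in for a developing valve problem
new_flow = [50.1, 49.9, 42.5, 50.2]

anomalies = flag_anomalies(baseline_flow, new_flow)
print(anomalies)
```

A fixed threshold on a z-score is the simplest possible rule. Production systems typically learn what "normal" looks like across many signals at once, but the underlying question is the same: how far is this reading from expected behavior?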

While service professionals can leverage those insights to correct a flow problem with an industrial pump valve before it occurs, for example, that same anomaly detection has real ramifications for design engineers in the context of a digital twin, he explains. Correlating that information with data on operating temperature or rotational speeds and presenting it in the context of a digital twin could lead to a design change that heads off the maintenance issue altogether.

“The correlation between failure of certain subsystems and particular operating conditions is something a design engineer never got before,” Birch says. “Typically, they’d have to wait until something breaks and then figure out the design failure.”
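The kind of correlation between failures and operating conditions described here can be illustrated with a short Python sketch. It assumes one record per fielded unit, with an average operating temperature and a failure count; the data and names are invented for illustration, and Pearson correlation is just one simple way to quantify the relationship.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)


# Hypothetical per-unit data: average operating temperature (deg C)
# and number of subsystem failures recorded over the same period
avg_temperature = [60, 65, 70, 75, 80, 85]
failure_count = [0, 1, 1, 2, 4, 5]

r = pearson(avg_temperature, failure_count)
print(f"temperature vs. failures: r = {r:.2f}")
```

A coefficient near 1 would suggest that hotter-running units fail more often, which is a prompt for further investigation rather than proof of cause.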

Similarly, understanding product usage as captured by a digital twin can affect design in other ways. Take the example of a washing machine designed with 25 cycles, for which the digital twin shows that only five cycles are regularly used in the real world. With direct feedback on how the product is operating, engineers could redesign the user experience in a subsequent model with a simpler way to switch between the most often-used cycles. In another example, an antenna with a high failure rate is found to be in close proximity to a motor, and it turns out the vibrations are causing a problem. This insight would enable the engineering team to make a simple correction, changing the placement of the antenna, instead of launching into a full-scale redesign, which is costly and time-consuming.
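The washing machine scenario boils down to counting which cycles customers actually run. The Python sketch below assumes a flat log of cycle names reported by connected machines and returns the smallest set of most-used cycles that accounts for a target share of all runs. The cycle names, counts and the 85% target are made up for illustration.

```python
from collections import Counter


def cycles_covering(usage_log, share=0.9):
    """Return the most-used cycles, in descending order of use, that
    together account for at least `share` of all logged runs."""
    counts = Counter(usage_log)
    total = sum(counts.values())
    if total == 0:
        return []
    selected = []
    covered = 0
    for cycle, runs in counts.most_common():
        selected.append(cycle)
        covered += runs
        if covered / total >= share:
            break
    return selected


# Hypothetical field log: one entry per wash run across a fleet of machines
usage_log = (
    ["normal"] * 40
    + ["quick"] * 25
    + ["delicate"] * 15
    + ["heavy"] * 10
    + ["rinse"] * 6
    + ["wool"] * 2
    + ["sanitize"] * 2
)

core_cycles = cycles_covering(usage_log, share=0.85)
print(core_cycles)
```

A result like this gives the design team a data-backed shortlist of cycles to put front and center in the next model's interface.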

“Typically, engineers make assumptions about how a product will operate, but when you actually get measurements back from the field, you find it isn’t meeting the original operating criteria,” says Francois Lamy, PTC’s vice president of Solution Management. “If you understand product usage, you might change the design and save money by paring down a part or not building for such extreme conditions. You could also reduce costs by redesigning a product for manufacturing.”

Understanding potential failure modes not only helps direct future product features, but it can aid in designing a product for prescriptive maintenance. Through use of a digital twin, for example, an engineering team could be clued into a usage pattern that degrades performance over time, but doesn’t necessarily equate to high failure rates. “Having deeper insight into when and why this is happening would enable engineers to explore the impact of different maintenance schedules like changing a lubricant every six months instead of annually,” says Ravi Shankar, director for Product Marketing in the simulation and test business segment at Siemens PLM Software.

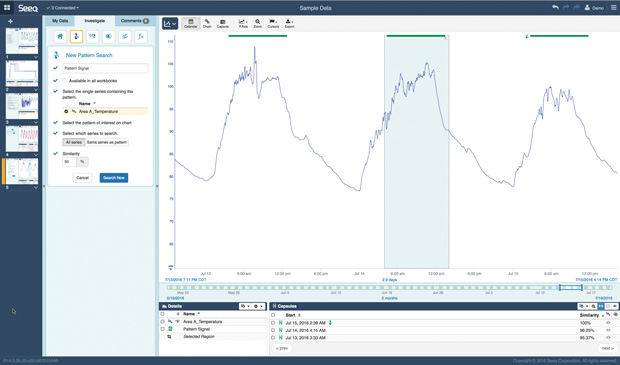

With Seeq, design engineers can monitor data coming from plant assets and combine it with other data like customer or quality information to practice prescriptive maintenance and maximize uptime. Image courtesy of Seeq.

IoT data can also play a key role in modifying manufacturing practices to garner efficiencies and boost quality. Consider Airbus’ Factory of the Future vision, in which it’s creating a series of smart tools that understand operator actions and make automatic adjustments aimed at simplifying the task. Using National Instruments’ System On Module (SOM) and the CompactRIO real-time embedded industrial controller, Airbus is creating new drilling, measurement, and tightening tools designed to eliminate manual processes and human error. For instance, its smart tightening tool will understand which task an operator is performing and will automatically adjust to the required torque and record the outcome in a central database as part of automated tracking.

“This helps eliminate human error, reduce time and provides more data on the structure and how it was built,” notes Brett Burger, principal marketing manager, Monitoring Solutions at National Instruments.

Going further, data captured on how long it takes to install the different fasteners or if a particular type of fastener regularly encounters problems can be funneled back to the design environment and eventually be evaluated for consideration in subsequent designs. To facilitate the capture of this kind of data, NI is partnering with IBM and SparkCognition on the Condition Monitoring and Predictive Maintenance Testbed to help identify machine failures and reduce maintenance costs for heavy machinery, power generation and process manufacturing assets.

The Tools You Know

Design tool vendors acknowledge that getting engineers to feel comfortable with IoT data analytics is a big task. That’s why most are evolving existing CAD, simulation, PLM (product lifecycle management) and related tools to address some level of IoT data analytics without necessarily requiring engineers to learn a new environment or reinvent themselves as data scientists.

MathWorks is positioning MATLAB in that way, enhancing its toolset to help engineers apply capabilities such as machine learning as part of an IoT analytics effort. MATLAB’s apps and functions, including the Classification Learner, are designed to guide engineers through the process of applying machine learning, and the latest release, R2016b, expands capabilities and simplifies working with Big Data. It adds support for tall arrays, which let engineers work with out-of-memory data using familiar MATLAB functions and syntax instead of having to learn new big data tools. “We’re trying to demystify this and make it easy to get up and running within minutes with pretty sophisticated tools,” says Paul Pilotte, MathWorks’ technical marketing manager.
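The general idea behind working with out-of-memory data is to process it in pieces and keep only running summaries. The Python sketch below is not MATLAB code and does not represent how tall arrays are implemented; it is a generic illustration that uses Welford's online algorithm to compute a mean and standard deviation over chunks of sensor readings. The helper names and the sample values are hypothetical.

```python
from math import sqrt


def read_in_chunks(values, size):
    """Yield successive chunks of `values`; stands in for reading a large
    file or database one block at a time."""
    for start in range(0, len(values), size):
        yield values[start:start + size]


def running_mean_std(chunks):
    """Compute count, mean and sample standard deviation over an iterable
    of chunks using Welford's online algorithm, without holding all of the
    data in memory at once."""
    n = 0
    mean = 0.0
    m2 = 0.0  # running sum of squared deviations from the current mean
    for chunk in chunks:
        for x in chunk:
            n += 1
            delta = x - mean
            mean += delta / n
            m2 += delta * (x - mean)
    if n < 2:
        raise ValueError("need at least two values")
    return n, mean, sqrt(m2 / (n - 1))


# Hypothetical sensor readings, processed three at a time
readings = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0, 9.0]
count, avg, std = running_mean_std(read_in_chunks(readings, size=3))
print(count, avg, round(std, 4))
```

In a real deployment the chunk reader would pull from disk or a data service, and the analysis code would stay the same regardless of how large the underlying data set grows.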

SOLIDWORKS plans to bring analytics capabilities to its CAD and simulation toolset by leveraging parent company Dassault Systèmes’ technologies like EXALEAD and Netvibes, as well as by working with an ecosystem of third-party providers, according to Lou Feinstein, the firm’s senior manager of portfolio management. At the same time, it’s working with customers to revamp its existing tools so that data collected by sensor-equipped products in the field can be looped back into simulations to pinpoint abnormalities and provide more in-depth analysis of failure modes, he says.

The challenge is not to have feast or famine with these new data-driven design workflows. “You really have to understand the business process and the design so you can properly deploy the right set of sensors so you don’t end up with a Big Data problem,” Feinstein says. “Getting huge amounts of data can become a real problem in terms of how to manipulate the design so it can be impactful to the business.”

Beyond understanding how to instrument products for IoT analytics, engineering organizations will also have to adopt more of the agile practices associated with software development if they want to fully capitalize on data-driven design and keep pace with rapid change, experts say. In the new world of smart, connected products, engineers need to be able to act quickly, pushing products out the door even when every feature is not complete and issuing updates and improvements based on the real-time insights captured via software, not hardware rebuilds, says Ryan Lester, director of IoT Strategy for Xively, an IoT platform provider.

“In the hardware world, it’s all about getting product to market so people can buy it and use it,” Lester says. “Now that it’s connected, it’s about deploying new features via firmware,” he explains, citing car company Tesla as an example, which uses such an approach to upgrade its cars with new features.

As engineering organizations develop greater ease with IoT and analytics, the real value will come when the digital twin concept evolves past the representation of a single asset into an amalgamation of fleet data that can improve next-generation designs, notes Marc-Thomas Schmidt, chief architect of GE Digital’s Predix cloud platform and the former chief architect of IBM Watson. As an example, he cites a wind farm composed of 100 wind turbines, all manufactured the same way with the same materials but sited in different locations, so they capture the wind differently and achieve different results.

“Design engineers can glean some interesting clues from observing the behavior of actual things designed in the real world and comparing the behavior of many of them,” notes Schmidt. “If you take that data and mix in weather information and geospatial data, it can help engineers spot patterns of behavior that would be impossible to do if you were just simulating in a lab.”

About the Author

Beth Stackpole is a contributing editor to Digital Engineering.
