GPU Splitting Leads to Lighter, Nimbler VDI

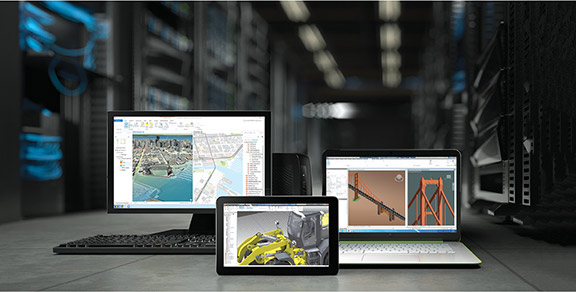

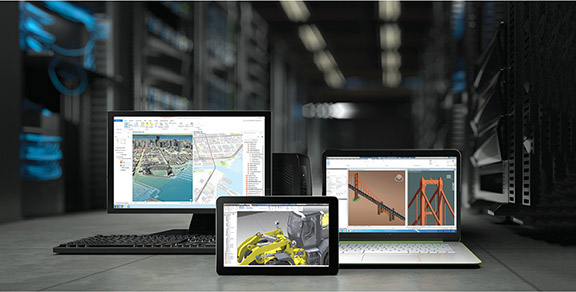

NVIDIA’s GPU-accelerated VDI allows engineers to access virtual workstations configured to match their physical workstations, but they can use lightweight devices as access points.


July 1, 2017

Masashi Okubo, assistant chief engineer of Honda R&D’s Computer Integrated Systems (CIS) department, estimated he would need 112 server racks to support the roughly 1,000 virtual workstations his engineers required.

“What would the facility manager say if I ask him to prepare a data center with 112 server racks?” he asks rhetorically, while recounting the project at the recent NVIDIA GPU Technology Conference (GTC, May 2017, San Jose, CA). “Do you think he would say: ‘Sure, it’ll be my pleasure’? Actually, he would say: ‘Are you serious?’”

Between 2013 and 2015, Honda R&D gradually moved its workforce from physical engineering workstations to virtual ones as part of its migration to virtual desktop infrastructure (VDI). That was Phase I. But by 2016, resource flexibility had become an issue. For the next phase of its VDI journey, Honda R&D needed a lighter, nimbler kind of VDI.

“How do we expand the VDI to a greater variety of people while maintaining efficiency? That was the beginning of our challenge for Phase II,” says Okubo.

Phase I: Moving Away from Physical Workstations

At the heart of Honda R&D’s design workflow are its engineering workstations. They play an important role in the carmaker’s styling and computer graphics rendering, and in running graphics-intensive computer-aided design and engineering applications.

The first incarnation of Honda R&D’s VDI was deployed on VMware’s platform. The setup assigned one physical GPU to each virtual machine (VM). In other words, the data center needed to host the same number of GPUs as the number of VMs it was supporting.

“In that setup, it was easy to modify the CPU and memory allocation, but not possible to do that with the GPU,” says Yuma Takahashi, Honda R&D’s CAD administrator for CIS.

With the introduction of GPU virtualization in its GRID technology, NVIDIA made it possible for multiple users to share a single GPU’s processing power.

The one-GPU-per-VM setup ensures that each VM has full access to the graphics processing capacity of a dedicated GPU. However, an internal study of the engineers’ computer resource usage revealed that this setup was not necessarily the best approach.

Studying the logged CPU, GPU and memory usages of the VM user pool, the team identified three distinct user types:

1. light users, who use the VMs mostly for CAD viewing;

2. power users, who use the VMs for CAD modeling; and

3. high-end users, who use the VMs for digital prototyping and CAE analysis.

Each class of users has different CPU, GPU and memory needs. In the one-GPU-per-VM setup, many light users have more resources than they need, whereas some power users and many high-end users struggle with the resources given. Takahashi and her team came to realize the solution may be GPU virtualization, which makes it possible to reassign those resources on the fly.

“With GPU virtualization, we can make more flexible configuration changes to the VMs,” Takahashi says. “So, if there are unused resources, we can choose to reassign them to those who are short of resources.”

GPU Virtualization

GPU virtualization is possible with some GPU-powered VDIs, such as NVIDIA GRID. The GPU maker first publicly revealed this possibility during its 2012 GTC, when it introduced the Kepler GPU architecture. GPU virtualization became a regular feature of subsequent NVIDIA GPU architectures, like Maxwell, Pascal and Volta. With NVIDIA GRID, the vGPU (virtual GPU) manager sits between the server hardware and the hypervisor that distributes resources to the VMs.

In a VDI with GPU pass-through, the power of the dedicated GPU is simply passed on to the VM; therefore, the GPU capacity distribution is fixed. In a VDI with virtual GPUs, the computing power of 2 or 4 GPUs may be redistributed among 8 or 16 VMs, for instance; therefore, the GPU resource allocation is much more flexible. “NVIDIA GRID lets up to 16 users share each physical GPU,” according to NVIDIA.
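The difference between the two models comes down to simple arithmetic. The following Python sketch is illustrative only: the function names are hypothetical, and the sharing ratio of four VMs per GPU is an assumption chosen to reproduce the "2 or 4 GPUs among 8 or 16 VMs" example above. The only figure taken from NVIDIA is the ceiling of 16 users per physical GPU.

```python
def passthrough_vm_capacity(physical_gpus: int) -> int:
    """GPU pass-through: each VM gets one whole GPU, so capacity is fixed."""
    return physical_gpus


def vgpu_vm_capacity(physical_gpus: int, vms_per_gpu: int,
                     max_per_gpu: int = 16) -> int:
    """Virtual GPUs: several VMs share each physical GPU.

    max_per_gpu defaults to the 16-user ceiling quoted by NVIDIA;
    vms_per_gpu is whatever sharing ratio the administrator picks.
    """
    if not 1 <= vms_per_gpu <= max_per_gpu:
        raise ValueError(f"vms_per_gpu must be between 1 and {max_per_gpu}")
    return physical_gpus * vms_per_gpu


# Assumed sharing ratio of 4 VMs per GPU, for illustration only.
for gpus in (2, 4):
    print(gpus, "GPUs:",
          passthrough_vm_capacity(gpus), "VMs with pass-through,",
          vgpu_vm_capacity(gpus, vms_per_gpu=4), "VMs with vGPU")
```

With pass-through, adding users always means adding GPUs. With virtual GPUs, the sharing ratio becomes a setting that administrators can change per host.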

Honda R&D uses Dassault Systèmes CATIA software in its design workflow. Part of Dassault Systèmes’ 3DEXPERIENCE platform, the CATIA software suite includes a range of CAD modeling, collaboration, viewing and high-end visualization features. Although some functions like viewing can be accomplished with minimal CPU and GPU resources, real-time rendering features can be compute- and graphics-intensive. Therefore, the ability to split and reassign a physical GPU’s power among multiple users gives IT managers the option to assign resources to match the users’ behaviors and needs.

Phase II: GPU Redistribution

Based on the usage paradigms they saw in their internal study, Okubo and his colleagues decided on three classes of VMs.

- For light users, VMs with 2 virtual CPUs, 12GB RAM, M60-1Q vGPU Profile (a maximum of 16 users per host)

- For power users, VMs with 4 virtual CPUs, 24GB RAM, M60-2Q vGPU Profile (a maximum of 8 users per host)

- For high-end users, VMs with 8 virtual CPUs, 48GB RAM, M60-4Q vGPU Profile (a maximum of 4 users per host)

Honda R&D uses HP Enterprise’s Apollo r2200 servers as hosts. Each host is equipped with two physical NVIDIA Tesla M60 GPUs.
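The three VM classes follow a doubling pattern: each step up doubles the virtual CPUs and RAM while halving the per-host maximum. A minimal Python sketch, using only the figures listed above, makes the pattern explicit. The class and function names are illustrative, not anything Honda R&D uses, and the calculation assumes a host filled with a single class and ignores hypervisor overhead.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class VMClass:
    name: str
    vcpus: int          # virtual CPUs per VM
    ram_gb: int         # RAM per VM, in GB
    vgpu_profile: str   # NVIDIA vGPU profile name from the article
    max_per_host: int   # maximum users per host from the article


# Figures taken from the three VM classes listed in the article.
VM_CLASSES = [
    VMClass("light", 2, 12, "M60-1Q", 16),
    VMClass("power", 4, 24, "M60-2Q", 8),
    VMClass("high-end", 8, 48, "M60-4Q", 4),
]


def host_totals(vm: VMClass) -> Tuple[int, int]:
    """Return (total vCPUs, total RAM in GB) for a host full of one class."""
    return vm.vcpus * vm.max_per_host, vm.ram_gb * vm.max_per_host


for vm in VM_CLASSES:
    vcpus, ram = host_totals(vm)
    print(f"{vm.name:9s} {vm.vgpu_profile}: {vm.max_per_host:2d} VMs "
          f"-> {vcpus} vCPUs, {ram} GB RAM")
```

By these figures, a fully loaded host commits 32 virtual CPUs and 192GB of RAM whichever class it serves, which suggests the classes were sized as interchangeable slices of the same host.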

“We asked CAD operators for feedback about the performance of the VMs compared to their physical workstations,” says Okubo. “Some operators commented that, with certain operations, the VDI performance was vastly superior, depending on the generation of the workstation involved.”

Expansion Plan

In addition to eliminating the problems of noise, heat and maintenance, NVIDIA GRID and optimization technology made it possible to improve resource efficiency by 20% to 40%, provide optimal resources per user application, and speed up R&D. Okubo, of course, did not need to request a data center with 100+ server racks from the facility manager. With NVIDIA GRID VDI, only seven racks were needed, a 94% reduction in bare metal hardware.
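The 94% figure can be checked against the rack counts quoted in the article. The short Python sketch below does so; the function name is hypothetical, and the per-rack density uses the article's rough figure of 1,000 virtual workstations, so it is an approximation.

```python
def rack_reduction_percent(before: int, after: int) -> float:
    """Percentage reduction in rack count going from `before` to `after`."""
    return (before - after) / before * 100


ESTIMATED_RACKS = 112   # Okubo's original estimate
ACTUAL_RACKS = 7        # racks needed with NVIDIA GRID VDI
WORKSTATIONS = 1000     # "roughly 1,000", so an approximation

reduction = rack_reduction_percent(ESTIMATED_RACKS, ACTUAL_RACKS)
print(f"Rack reduction: {reduction:.2f}%")
print(f"Workstations per rack, estimated: {WORKSTATIONS / ESTIMATED_RACKS:.1f}")
print(f"Workstations per rack, actual: {WORKSTATIONS / ACTUAL_RACKS:.1f}")
```

The exact reduction is 93.75%, which rounds to the 94% cited.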

With each VM configured to match a workstation’s performance, Honda R&D engineers don’t need to be at their desk. They can remotely access their workspace from lightweight, mobile devices. “For endpoint access, we are using the laptops the users have been using for emails and Office work,” says Hiroshi Konno, Honda R&D’s assistant project leader, VDI development.

In the coming years, Honda plans to expand its VDI setup from its headquarters in Japan to its R&D centers worldwide, spanning Europe, China, India, America and Brazil.


About the Author

Kenneth Wong is Digital Engineering’s resident blogger and senior editor. Email him at [email protected] or share your thoughts on this article at digitaleng.news/facebook.
