Hyperacuity Systems Uses LabVIEW for Vision

Computing that Will Change the World, Second Place: Enabling moving robots and other devices to see and understand their environment is a highly difficult problem for which Hyperacuity Systems has an innovative solution.

December 1, 2009

By Peter Varhol

Professor Michael Wilcox, owner and principal of Hyperacuity Systems, has always been interested in vision and visual systems. A biologist and biophysicist by education, his early work in ophthalmology resulted in the development of a medical device that allows glaucoma to be treated without drugs.

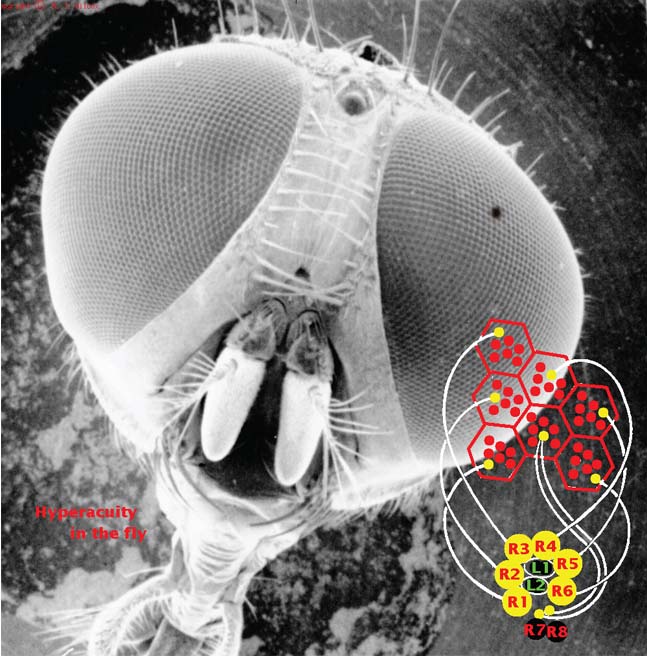

Studying the eyes of flies inspired Wilcox.

Wilcox has applied his expertise in vision systems to designing and developing cutting-edge technology in that area. His company, Hyperacuity Systems, has developed a camera with instantaneous sub-pixel resolution that records images in real time. It is the fastest known camera in the world. The camera, which is aimed at robotics and vision systems, was inspired by studying the eyes of flies.

Wilcox noted that most computer vision systems today sample the visual space using cinematography; in other words, digital film. These devices use high spatial resolution at high frame rates to enable machines to “see” and interpret moving images.

But it’s not that easy. Most existing approaches require a great deal of computational horsepower and still produce a view of motion that is not smooth and continuous. By the time a given image in the sequence has been interpreted, it may be too late for the machine to respond appropriately.

Because Wilcox has a deep understanding of human and animal vision systems, he has been able to apply some of their principles to an innovative machine vision system. Unlike the monolithic pixel profiles of a camera, animal photoreceptors use an optical, nonlinear Gaussian response. This lets animals encode subpixel detail, so the brain can interpret images at higher resolution while handling less data.
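To make the subpixel idea concrete, here is a minimal numerical sketch, not the Hyperacuity Systems design. It assumes two neighboring receptors with overlapping Gaussian sensitivity profiles, a noise-free point source, and known values for the receptor spacing and width. The function names and the numbers are hypothetical and chosen only for illustration.

```python
import math

def receptor_response(source_x, center, sigma):
    """Response of one receptor with a Gaussian sensitivity profile (assumed model)."""
    return math.exp(-((source_x - center) ** 2) / (2.0 * sigma ** 2))

def estimate_position(r_left, r_right, c_left, spacing, sigma):
    """Recover the source position from the ratio of two neighboring responses.

    For Gaussian profiles centered at c_left and c_left + spacing, the log of
    the response ratio is linear in the source position, so it can be inverted
    in closed form.
    """
    log_ratio = math.log(r_right / r_left)
    return c_left + spacing / 2.0 + (sigma ** 2 / spacing) * log_ratio

# Hypothetical setup: receptors one "pixel" apart, source between them.
true_x = 3.27
sigma = 0.8
spacing = 1.0

r3 = receptor_response(true_x, 3.0, sigma)
r4 = receptor_response(true_x, 4.0, sigma)
estimate = estimate_position(r3, r4, 3.0, spacing, sigma)

print(f"true position: {true_x}, estimated position: {estimate:.4f}")
```

In this idealized case the two responses locate the source far more finely than the receptor spacing. A real sensor would have noise and extended objects, so the achievable accuracy would be lower than this sketch suggests.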

The Hyperacuity Systems solution consists of modular elements with analog processing to extract a feature set from objects in an image, as well as a comparator mesh that connects the dots between elements. The result is an instantaneous vector output of both object position and motion from each element in the array.

The implementation is a chip that can detect both position and displacement with hyperacuity, meaning accuracy better than one-tenth of the pixel size or spacing. In other words, the chip extracts and interprets more information from fewer pixels. To assist in the design, Wilcox and his team simulated larger arrays with National Instruments’ LabVIEW, and the simulations demonstrated faster processing.

The analog circuitry uses a logarithmic compression algorithm to achieve a high dynamic range and a variable working range, enabling machines to see more as animals or humans do. The new approach provides better parallax and stereo imagery with reduced power consumption and faster processing, which results in better image interpretation and a faster response by the machine. It also works well in low light and at low contrast, because of low-voltage differential sensing from photoreceptor elements in the same cartridge.
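One reason logarithmic compression pairs well with differential sensing can be shown with a short numerical sketch. The article does not describe the actual circuit, so this only illustrates a general property of log encoding: the difference between two log-compressed signals depends on their ratio (the contrast), not on the absolute light level. The function names, the `floor` parameter, and the intensity values are hypothetical.

```python
import math

def log_compress(intensity, floor=1e-6):
    """Logarithmically compress an intensity; `floor` avoids log(0) (assumed value)."""
    return math.log(max(intensity, floor))

def differential_signal(intensity_a, intensity_b):
    """Difference of two log-compressed receptor signals."""
    return log_compress(intensity_a) - log_compress(intensity_b)

# The same 2:1 contrast under dim and bright illumination (arbitrary units).
dim = differential_signal(0.02, 0.01)
bright = differential_signal(2000.0, 1000.0)

print(f"dim scene:    {dim:.6f}")
print(f"bright scene: {bright:.6f}")
```

Both scenes yield essentially the same differential signal even though the absolute intensities differ by a factor of 100,000, which is one way a log-encoded sensor can cover a wide dynamic range.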

Wilcox hopes that this type of camera system can be used in high-speed manufacturing environments where fast product inspection is critical, as well as in new automotive applications for collision detection and avoidance. Because of its speed of interpretation, the system can likely be used in any device that must detect and interpret moving images. The camera and machine vision system is still in development, with release expected in 2011.

More Info:

National Instruments

Contributing Editor Peter Varhol covers the HPC and IT beat for DE. His expertise is software development, math systems, and systems management. You can reach him at [email protected].
