ISC Conference: Fast Growth in High-Performance Computing Despite Challenges

July 9, 2018

The recent ISC Supercomputing Conference in Frankfurt presented attendees with a two-sided coin. Business is good for the high-performance computing (HPC) industry; costs continue to drop and new software tools increase HPC versatility. But there are some dark clouds on the near-term horizon. Hardware constraints and talent shortages threaten to slow the pace of computational growth. The leading vendors and research institutions are working to return to the days of ever-increasing computational power at ever-cheaper rates.

The Good News

HPC business is good because new software technologies like deep learning and artificial intelligence are finding answers to difficult problems faster than ever before. From fighting disease to designing more efficient aircraft and perfecting extreme weather forecasting, HPC continues to make a difference.

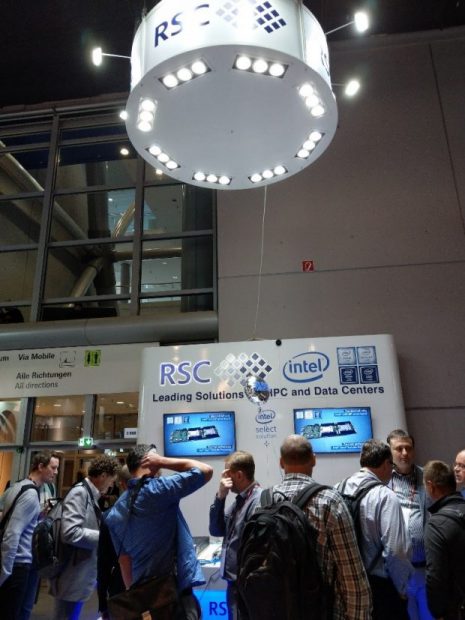

The RSC booth was busy throughout the ISC Supercomputing conference in Frankfurt, thanks to the release of their new HPC solution. The latest model in the RSC Tornado line of HPC modules uses new SSD storage and Optane memory drive technology. Image courtesy of RSC.

The HPC market hit $24.3 billion in 2017, according to Hyperion Research. Strong growth will continue in the industrial HPC sector, Hyperion says, driven by notable increases in real-time results, the ability to frame more intelligent questions, and the explosion of data available. “2018 will be known as the year of machine learning for bounded problems” in manufacturing, says Hyperion’s Steve Conway.

The biggest challenge in the use of deep learning for manufacturing is the need for more algorithms. An example would be sorting by eye color. One algorithm can learn to recognize blue eyes, but another one is required to recognize brown eyes. The eye-specific algorithms are about bounded problems, while “industry wants unbounded questions,” says Conway. “This is not a hardware or software problem, it is an algorithm problem.” Having the right questions is not enough: “the data needed for deep learning is not always available,” which means industry will likely increase IoT spending to gather more data for machine learning analysis, and will pay top dollar for programmers who can create more versatile algorithms. Rod McAllister of Penguin Computing echoes the need for more programmers who specialize in data analysis problems. “The biggest need in this industry is for data scientists, and the biggest competitor for talent is financial services.”

In general, there is a trend toward increased reliance on deep learning to solve the big problems. “In the past a job like jet engine airflow would start with an assumption, then iterate toward a solution,” notes Dr. Steve Scott, CTO of Cray. “Now they use deep neural net computing to get an approximation of the solution, then improve on it. It is a much faster path.”

This year Hyperion Research initiated coverage of quantum computing, based on the increase in client demand for more information. In 2018 Hyperion will “be looking for the interesting questions” by reaching out to the “limited expert pool” available. Conway says there might be only 1,000 quantum computing experts in the world. “We are seeking the elevator speeches of quantum computing.” Conway notes that quantum computing “is still a science challenge, and not yet an engineering challenge.” Hyperion listed a set of initial use cases for quantum computing; design and manufacturing did not appear on the list.

HPC clouds are rapidly becoming an acceptable option, says Hyperion’s Bob Sorensen. Historically HPC has been strictly an on-premises technology, but new compute models are rapidly emerging. Private clouds are the fastest-growing HPC deployment model, followed by hybrid public/private clouds and public-only clouds. Virtualized HPC clouds are starting to appear this year. Sixty-four percent of all HPC installations, across all application types, are running “something” in a public cloud, Sorensen says, while 7% of HPC users rely exclusively on public cloud. Any constraint in the marketplace is on the server side, Sorensen says; most public cloud providers have limited availability of HPC-specific resources. Hyperion believes that by 2020, 20% of HPC use will be exclusively in public cloud.

Reaching for Exascale

The race to exascale supercomputing is currently driving HPC R&D. Hyperion says the USA will spend $2 billion on exascale R&D in 2018, and estimates China will spend $1 billion. Exascale computers may not be in general availability until 2020, despite earlier predictions they would arrive in 2019. Intel CPUs are not increasing in throughput as fast as expected, and increased electricity use and the resulting increase in heat are slowing commercial exascale development.

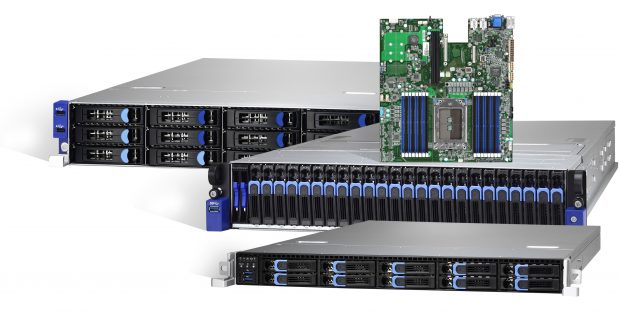

Tyan was one of several HPC hardware vendors to launch new server and storage solutions at ISC Supercomputing 2018 based on the AMD Epyc CPU. Image courtesy of Tyan.

Hyperion announced the key results from a new U.S. Department of Energy study it was commissioned to run, showing companies investing in HPC are generating substantial return on investment (ROI). Across all industries using HPC, an average of $463 is generated in additional revenue for every $1 invested in HPC. Profits or cost savings increase by $44 for every $1 of spending on HPC. The second-highest rated “primary innovation” from those using HPC was “better products,” exceeded only by “created new approach.”

New CPU Vendors Gain Market Share

For years Intel was the only player in x86-based CPUs for HPC. But in the past year AMD’s new Epyc CPU for servers has been spec’d in a variety of HPC cluster environments. AMD estimates it now has 2% of the HPC market. Vendors using AMD Epyc for ready-to-deploy HPC platforms include AMAX, Exxact, Inventec and Supermicro. AMD has poured considerable R&D effort into libraries for HPC applications and deep learning frameworks, including TensorFlow and Caffe. AMD also announced a major win for its HPC technology with the National Institute for Nuclear Physics in Italy, which will use the AMD Epyc 7351 processor to power a new high-performance computing cluster for theoretical and experimental research in subnuclear, nuclear and astroparticle physics.

ARM-based CPUs are also making an impact in HPC. “We are seeing growing interest from our customers, resellers and the HPC market in general for 64-bit ARM-based clusters,” says Bill Wagner, CEO of Bright Computing, which provides cluster management software. At ISC, Bright Computing announced a partnership with Cavium to ship Bright’s Cluster Manager with Cavium’s ThunderX2 ARM platform for HPC.

Cray’s Scott sees “anxiety and concern about CMOS technology.” Big users of HPC are starting to look at alternatives like increased use of GPU computing and ARM CPUs. “Many startups are looking for a Cambrian explosion of specialization at the processor/hardware level,” says Scott. “The hardware side of HPC is not keeping up; transistor size can’t be made much smaller,” and power requirements are increasing rapidly. AMD is gaining notice because its new Epyc CPU will soon be built using 7nm transistors and is rated for lower power consumption (resulting in less heat generation) than competing models from Intel.

About the Author

Randall S. Newton is principal analyst at Consilia Vektor, covering engineering technology. He has been part of the computer graphics industry in a variety of roles since 1985.