Latest News

July 1, 2011

By Barbara G. Goode

The most exciting development in sensing for autonomous service robots, which are arguably the most exciting frontier in robotics, is a sensor that has only begun to be used in robotics and, in fact, was developed for a completely unrelated application. It is Microsoft’s Kinect for Xbox 360, which the computer giant describes as a “controller-free” interface for its video game platform (see Figure 1).

Figure 1: Just days after Microsoft released Kinect, the sensor was hacked by code developers wanting to enable control through a PC rather than just the Xbox.

The Kinect enables control of the Xbox through “natural interaction,” a term trademarked by PrimeSense (Tel Aviv, Israel), which developed Kinect’s underlying optical sensing and recognition technology that translates body motion into control commands. As Microsoft explains, “the Kinect sensor has a 3D camera and a built-in microphone that tracks your full-body movements and responds to your voice.” What makes the sensor captivating is its price tag: $149.99.

“It’s the most exciting sensor to be released in a very long time,” says Bill Kennedy, co-founder of Amherst, NH-based MobileRobots, Inc., the maker of autonomous robot cores, bases and accessories that was acquired in 2010 by industrial automation and robotics multinational Adept Technology, Pleasanton, CA.

Fred Nikgohar, CEO of RoboDynamics, Santa Monica, CA, agrees.

“Kinect is a great example of what is possible now with sensors and embedded systems,” he says. “It’s not that we couldn’t do 10 years ago what Kinect does, but at $150 it is a radical game changer.”

That’s certainly true for service robots, defined by the International Federation of Robotics as those that operate semi- or fully autonomously to perform services useful to humans and equipment, excluding manufacturing. It is likely true for other fields as well.

Getting ‘Kinect-ed’

Industry watchers were surprised that Microsoft was seemingly unaware of Kinect’s potential for robotics applications, potential evidenced by the fact that days after Kinect’s release, it was hacked by code developers wanting to enable control of the sensor through a PC rather than just the Xbox. In response, Microsoft announced plans for a software development kit (SDK) that will allow exploration of Kinect for other applications. A non-commercial starter kit for Windows, which should be available by the time you read this, promises:

- Robust, high-performance capabilities for skeletal tracking of one or two persons moving within the Kinect field of view.

- Advanced audio capabilities provided by a four-element microphone array, with sophisticated noise and echo cancellation, beam formation to identify a sound source, and integration with the Windows speech recognition application programming interface (SAPI).

- Access to a standard color camera stream, as well as depth data to indicate distance of an object from the Kinect camera, to enable development of novel interfaces.
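The depth stream described above gives, for each pixel, a distance from the camera. A common first step in robotics is to turn such a pixel into a 3D point using the standard pinhole camera model. The sketch below assumes the depth value is the distance along the camera’s optical axis (Z) in meters and that the intrinsic parameters (`fx`, `fy`, `cx`, `cy`) come from your own calibration; the numbers used are hypothetical placeholders, not Kinect’s published calibration, and the function and class names are illustrative, not part of Microsoft’s SDK.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Intrinsics:
    """Pinhole camera intrinsics in pixels (example values only, not Kinect's calibration)."""
    fx: float  # focal length along x, in pixels
    fy: float  # focal length along y, in pixels
    cx: float  # principal point x, in pixels
    cy: float  # principal point y, in pixels


def depth_pixel_to_point(u: int, v: int, depth_m: float, k: Intrinsics) -> Tuple[float, float, float]:
    """Back-project pixel (u, v) with depth Z (meters along the optical axis) to camera-frame (X, Y, Z)."""
    if depth_m <= 0:
        # This sketch treats zero or negative depth as "no valid reading".
        raise ValueError("depth must be positive")
    x = (u - k.cx) * depth_m / k.fx
    y = (v - k.cy) * depth_m / k.fy
    return (x, y, depth_m)


if __name__ == "__main__":
    k = Intrinsics(fx=580.0, fy=580.0, cx=320.0, cy=240.0)  # hypothetical values
    print(depth_pixel_to_point(610, 240, 2.0, k))  # (1.0, 0.0, 2.0)
```

With these hypothetical intrinsics, an object seen 290 pixels right of center at 2 m depth sits 1 m to the side of the camera’s axis.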

At an unspecified date, Microsoft also plans to release a commercial version of the SDK that will include support and confer intellectual property rights, which the non-commercial version will not. Microsoft is releasing no further details at this time.

Meanwhile, however, a number of others are working on Kinect-like products, says Conard Holton, editor-in-chief of Vision Systems Design. Among them is the Interface Ecology Lab at Texas A&M University, whose ZeroTouch sensor was covered recently by Time, among other publications.

Complex and Evolving

Robots employ a number of sensor types, including GPS navigation, radar, sonar and inertial guidance. However, “the majority of service robots require some sort of vision system,” says the Vision for Service Robots Report. According to the report, these range from simple 2D CMOS sensors to complex subsystems capable of 3D imaging and pattern recognition. The vision sensor technologies most often used in service robots are structured light and two-camera stereo systems, time-of-flight sensors, LIDAR, and single-lens camera systems.

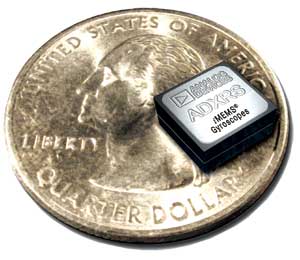

Figure 2: Even with the sophistication offered by components such as Analog Devices’ high-performance, low-power iMEMS gyroscopes, dealing with the complexity of autonomous robotics is a challenge.

While some of those options still run in the multiple thousands of dollars, MobileRobots’ Kennedy says that, on the whole, vision sensor pricing has dropped dramatically, and the sensors themselves can be relatively easy to put together.

However, that doesn’t make mobile autonomous movement a simple endeavor.

“The vision software needed to understand these images and provide robotic feedback is complex and evolving rapidly,” notes the Vision for Service Robots Report. Kennedy explains that most of today’s autonomous mobile robots operate under constrained conditions. For instance, those that ferry supplies in a factory typically follow lines installed in the floor, and will stop if their paths become blocked. The lighting must be just right, too: Shadows will throw the robots off, as will overly bright light.
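The constrained behavior Kennedy describes (follow a floor line, halt when the path is blocked, fail when lighting hides the line) can be illustrated with a minimal proportional line-follower. This is a hypothetical sketch, not any vendor’s controller: it assumes a line sensor that reports the line’s lateral offset from the robot’s centerline (or `None` when shadows or glare make the line unreadable) and a range sensor for obstacles ahead, and all constants are made-up example values.

```python
from typing import Optional, Tuple

STOP_DISTANCE_M = 0.5   # hypothetical: halt if an obstacle is closer than this
CRUISE_SPEED_MPS = 0.3  # hypothetical forward speed, m/s
STEER_GAIN = 2.0        # hypothetical proportional gain, (rad/s) per meter of offset


def line_follow_command(line_offset_m: Optional[float],
                        obstacle_range_m: float) -> Tuple[float, float]:
    """Return (forward speed in m/s, turn rate in rad/s, positive = turn left).

    line_offset_m: lateral offset of the floor line (positive = line is to the left),
    or None if the line sensor cannot see the line.
    """
    if obstacle_range_m < STOP_DISTANCE_M:
        return (0.0, 0.0)  # path blocked: stop and wait
    if line_offset_m is None:
        return (0.0, 0.0)  # line lost (e.g., shadow or glare): stop rather than guess
    # Steer toward the line in proportion to how far off it the robot is.
    return (CRUISE_SPEED_MPS, STEER_GAIN * line_offset_m)
```

Stopping on a lost line is the conservative choice that makes such robots dependable in controlled settings, and also why they struggle once conditions stop being “just right.”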

Gating Factors

“Designing navigation and stability systems requires extensive hardware and software knowledge,” maintains Howard Wisniowski, marketing manager for the MEMS/Sensors Technology Group at Analog Devices (ADI), Norwood, MA. Today’s inertial measurement units (IMUs) are sophisticated enough to cover many bases. They may include a multi-axis (X, Y, Z) accelerometer, a multi-axis (X, Y, Z) gyroscope, and a multi-axis magnetometer (compass). See Figure 2. And yet, dealing simultaneously with multiple axes, as well as angular (pitch, roll and yaw) and linear (up, down, sideways) motions, while determining position, velocity, and heading in free space “is complex and has many constantly changing variables that need to be interpreted correctly,” says Wisniowski. Those variables must be understood in the context of others, such as temperature compensation, offset and hermeticity.
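One widely used way to start taming the gyroscope/accelerometer interplay Wisniowski describes is a complementary filter: integrate the gyroscope rate for short-term accuracy, and nudge the result toward the tilt implied by the accelerometer’s gravity reading to cancel gyro drift. The sketch below handles a single axis (pitch) only, ignores the magnetometer, temperature compensation and offset calibration, and is a generic textbook technique, not ADI’s method. It assumes x points forward and z up, with the accelerometer reading about +1 g on z when level and nose-up pitch counted positive; the blend factor `alpha = 0.98` is a hypothetical choice.

```python
import math


def accel_pitch(ax: float, ay: float, az: float) -> float:
    """Pitch in radians (nose-up positive) implied by the accelerometer's gravity reading.

    Assumes x forward and z up, reading about +1 g on z when level.
    Only trustworthy when the robot is not otherwise accelerating.
    """
    return math.atan2(ax, math.hypot(ay, az))


def complementary_pitch(prev_pitch: float, gyro_rate: float,
                        ax: float, ay: float, az: float,
                        dt: float, alpha: float = 0.98) -> float:
    """One filter update: blend the integrated gyro rate (smooth but drifting)
    with the accelerometer pitch (noisy but drift-free)."""
    gyro_estimate = prev_pitch + gyro_rate * dt  # rad/s integrated over dt seconds
    return alpha * gyro_estimate + (1.0 - alpha) * accel_pitch(ax, ay, az)
```

Called once per IMU sample, a larger `alpha` trusts the gyro more. For a stationary, level robot that starts with a wrong pitch estimate, each update shrinks the error by a factor of `alpha`, so the estimate converges toward the accelerometer’s answer.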

Figure 3: RoboDynamics’ new Luna (shown here with company CEO Fred Nikgohar) is a 5-ft., 2-in. open-standard hardware platform featuring a native App Store, standard PC architecture, an open Linux-based operating system, touchscreen display, Wi-Fi and multiple USB ports for expandability.

Kennedy says, “Even the simplest of tasks, like driving from one place to another in a cluttered, dynamic, human-occupied environment, requires a lot of robotic sophistication: lots of sensors and lots of processing for the very sophisticated fast-acting software. Only when the price of all that hardware comes way, way down will robots become more ubiquitously deployed around people.”

The Kinect is a step in the right direction, though the field has a long way to go, and Kennedy points out a major stumbling block: liability. Just as commercial airplanes must be far safer than cars, robots that work among people must meet a very high safety standard, and that implies a cost that is “much more than people are willing to pay to have a robot in their midst.”

Help Wanted

Wisniowski explains that while design tools such as evaluation boards and software development platforms are available through component suppliers and third-party specialists, they are not simple to use, and developing algorithms that the microprocessor can use and that fit the application is another challenge. Indeed, the difficulty of creating practical algorithms for interpreting sensor readings is a major factor holding back the growth of robotics, says Teddy Yap, Jr. of Washington State University, whose doctoral dissertation was titled “Mobile Robot Navigation with Low-Cost Sensors.”

In addition to less expensive, more sophisticated sensors and processors, Kennedy’s list of what design engineers need to take consumer robotics from niche to mainstream includes better tools for non-programmers, and training. He notes that MobileRobots offers training, but demand for it is limited.

Freescale provides training via downloadable PDFs on its website to support the 9-in.-tall bipedal sensor robot and development kit it launched in May for $199. The walking robot has four degrees of freedom, a 32-bit “brain,” and a three-axis accelerometer for balance, and it offers a choice of easy-to-use scripting languages.


Platform Approaches

RoboDynamics CEO Fred Nikgohar points to what he sees as three fundamental problems that hamper the robotics industry:

1. Fragmentation. Nikgohar says that every company works independently, without standards, unification or collaboration, which prevents the development of economies of scale.

2. A dearth of affordable, useful platforms. This severely limits the market incentives required for innovation and, by extension, for the creation of a diverse ecosystem of adopters and innovators.

3. A lack of designs that engage people emotionally.

On May 11, 2011, RoboDynamics made a move intended to overcome those limitations: the company’s new Luna is a sleek, 5-ft., 2-in. open-standard hardware platform (with an 8-in. touchscreen LCD for a face) designed to enable robotics innovation (see Figure 3). “We’ve made the proper tradeoffs to get it to a consumer-level price,” explains Nikgohar, adding that 1,000 limited-edition Luna robots will begin shipping during the fourth quarter of 2011 for $3,000 each. Luna will feature a native App Store, standard PC architecture, an open Linux-based operating system, touchscreen display, Wi-Fi and multiple USB ports for expandability.

“Our objective is to aggressively remove cost and complexity, thereby facilitating widespread consumer adoption while simultaneously providing a unique ground-floor opportunity for the developer community to bring innovative ideas to a financially viable robotics ecosystem,” says Nikgohar.

Other developers, including Menlo Park, CA-based Willow Garage and MIT’s Bilibot Project, are also providing affordable, open-source robotics platforms to encourage innovation. In fact, the MIT team is currently running a competition that offers a rebate of up to $350 on the purchase of a Bilibot (which, by the way, is built on Kinect) for creating and sharing an open-source application. If you’ve been thinking about trying your hand at robotics, this might provide just the incentive to start.

Starting now is probably necessary if Nikgohar is to achieve his goal of “a robot in every home in 10 years.”

Barbara G. Goode served as editor-in-chief for Sensors magazine for nine years, and currently holds the same position at BioOptics World, which covers optics and photonics for life science applications. Contact her via [email protected].

For more information:

Adept Technology

Analog Devices, Inc.

Freescale

International Federation of Robotics

Microsoft

MIT’s Bilibot Project

MobileRobots, Inc.

PrimeSense

RoboDynamics

Texas A&M University’s Interface Ecology Lab

SICK USA

Vision Systems Design

Willow Garage


About the Author

DE’s editors contribute news and new product announcements to Digital Engineering.

Press releases may be sent to them via [email protected].