Latest News

April 10, 2013

In 1961, when John F. Kennedy publicly vowed to land a man on the moon and return him safely to the Earth within a decade, he spurred an era of space exploration. The Soviet Union’s launch of the Sputnik 1 satellite four years earlier probably also lit a fire under the American scientific community. In 1969, six years after Kennedy’s death, Neil Armstrong and Buzz Aldrin stepped out of Apollo 11, becoming the first humans to set foot on the moon.

Today, the $30 million Google Lunar X Prize (GLXP) is fueling a new era of space exploration, characterized by friendly competition among teams around the world. Past space endeavors were primarily funded by governments and national institutions. The GLXP, on the other hand, is designed to inspire privately funded groups. To win the prize, a team must safely land a robot on the surface of the moon, have that robot travel 500 meters over the lunar surface, and send video, images and data back to the Earth. Launched in 2007, GLXP started with 33 teams. Since then, some teams merged and others withdrew, reducing the pool to its current 23.

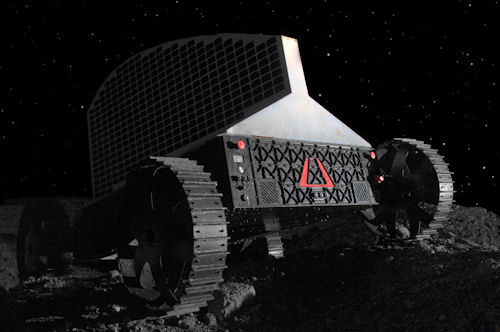

A rendering of Astrobotic’s lunar rover robot, named Polaris. The robot’s surface operations are carefully preplanned to maintain unobstructed views of the sun for power and Earth for communication. Astrobotic uses software-driven simulation to test out Polaris’s autonomous electromechanical operations. Image courtesy of Astrobotic

Among the remaining teams is Pittsburgh-based Astrobotic, an offshoot of Carnegie Mellon University’s Robotics Institute. The firm relies heavily on software-driven simulation to perfect the electromechanical behaviors of its space robots. Though more cost-effective than physical testing, digital simulation is also a time-consuming process, especially when driven by slower, older systems. Furthermore, when a workstation is engaged in digital simulation, the intense computation hogs its memory and CPU resources. The system inevitably turns into what Astrobotic’s CIO Jason Calaiaro calls a “dead node”—no longer available for anyone.

To win the GLXP, the remaining teams are racing against one another to be the first to pull off the mission. The contest, therefore, is also a race against time. For Astrobotic, speeding up simulation is the best strategy. But what else could the engineers possibly do when simulation programs were routinely exhausting the CPU power in their systems? The answer turned out to be GPU acceleration.

Go to the Moon for Less


A government-led moon landing endeavor today would cost about $800 million, estimates Calaiaro. “Our objective is to do that for an order of magnitude less,” he reveals. “We’ve developed breakthrough technologies to do that.”

The Astrobotic GLXP team is led by William “Red” Whittaker, Ph.D., CEO of the company. Whittaker’s got quite a number of robotic missions under his belt. His past projects include Dante’s volcano descent, Nomad’s desert trek, Ambler’s traverse of rock fields, Hyperion’s sun synchronous navigation, and the Antarctic meteorite search.

Astrobotic envisions executing the lunar mission with two robots: Lander and Rover. “Our Lander delivers 500 lb. to the surface of the moon,” explains Calaiaro. “Our Rover departs our Lander once it touches the ground, and then explores that environment in high-definition videos and sends them back to Earth, just about in real-time.” The first mission is set for 2015.

Astrobotic has constructed physical prototypes of its Lander and Rover robots. They are deployed from time to time to conduct physical tests. But to test the entire launch-to-landing process in the physical world—and repeat it many times until it’s flawless—is impractical because of the time, cost and effort required.

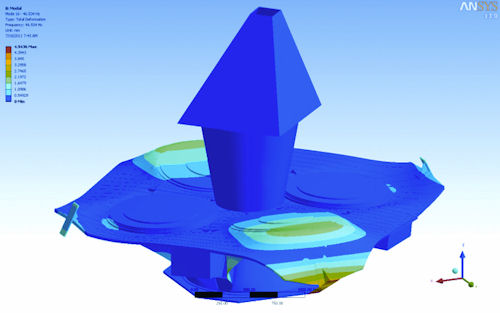

As an alternative, the company relies primarily on software-driven simulation. It involves subjecting CAD models of the robots created in SolidWorks’ mechanical design program to the anticipated stresses and loads in a virtual environment, using ANSYS and MathWorks’ MATLAB software.

Going to the Moon from a Computer

Calaiaro calls end-to-end, hardware-in-the-loop (HIL) simulation “the Holy Grail of simulation.”

“We’ll simulate every detail of the spacecraft’s environment, from the moment it departs the rocket to the moment it touches down on the surface of the moon,” he adds. “The environmental part of that simulation—where the spacecraft is pointing, the location of the stars, where it is in a particular orbit around the moon, what the cameras are looking at—we’ll simulate all of that. All of these are running either on engineers’ machines or on a server.”

The trouble was, when a simulation was running at full speed, the three-year-old workstations at Astrobotic were completely consumed by the intensity of the job. Engineers and designers could no longer use these workstations for any other tasks, not even something as trivial as composing documents, checking email or surfing the web. Some jobs could take as long as 10 hours, essentially the equivalent of an engineer’s entire workday.

So Calaiaro and his team chose two methods to deal with the dead-node problem. Whenever possible, they simplified the simulation exercise to keep the processing time short. This was usually done by reducing the mesh count, or degrees of freedom (DOF) in the model, to half a million or less. They also resorted to scheduling jobs to occur after hours or over the weekend so they wouldn’t interfere with the staff’s day-to-day computing workload.

Both fixes came with drawbacks. Simplifying the job meant settling for an approximation rather than an accurate answer. Scheduling jobs to run overnight meant that, if a job had been incorrectly set up, the staff wouldn’t find out until the next morning.

Then Astrobotic was introduced to GPU acceleration, courtesy of HP and NVIDIA. The company used an HP Z800 workstation, equipped with NVIDIA’s dual-GPU Maximus architecture. With 12 Intel Xeon CPU cores, an NVIDIA Quadro GPU, and an NVIDIA Tesla GPU, the workstation had far more computing and visualization horsepower than Calaiaro and his engineers were accustomed to. Perhaps most important, the system had sufficient power to run a CAD program and a simulation program at the same time. The system didn’t slow to a crawl when it was engaged in ANSYS simulation jobs; it remained available for other tasks. That was the beginning of the end of dead nodes.

GPU-Acceleration of Simulation

Standard workstations are equipped with a single GPU. The HP Z800 workstation with NVIDIA Maximus technology, on the other hand, has an additional GPU, an NVIDIA Tesla unit. The extra GPU becomes a dedicated parallel processor for compute-heavy simulation jobs.

To take advantage of GPU acceleration, the software must be written to parallelize computing jobs on the GPU. As it happens, Astrobotic’s primary simulation software, ANSYS, is written to take advantage of GPU acceleration. When it announced GPU support for the first time in September 2010, ANSYS stated, “Performance benchmarks demonstrate that using the latest NVIDIA Tesla GPUs in conjunction with a quad-core processor can cut overall turnaround time in half on typical workloads, when compared to running solely on the quad-core processor. GPUs contain hundreds of cores and high potential for computational throughput, which can be leveraged to deliver significant speedups.”
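One rough way to reason about a claim like "cut overall turnaround time in half" is Amdahl's law: only the part of a job that the software offloads to the GPU gets faster, so the overall gain depends on how large that part is. The sketch below is illustrative only. The accelerated fraction (0.75) and the GPU speedup on that fraction (3x) are hypothetical numbers chosen to reproduce a 2x overall result; they are not figures from ANSYS or Astrobotic.

```python
def overall_speedup(accelerated_fraction: float, accelerator_speedup: float) -> float:
    """Amdahl's law: overall speedup when only part of a job is accelerated.

    accelerated_fraction: share of the original runtime that runs on the GPU (0..1).
    accelerator_speedup:  how many times faster that share runs on the GPU.
    Both inputs are assumptions supplied by the caller, not measured values.
    """
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if accelerator_speedup <= 0.0:
        raise ValueError("accelerator_speedup must be positive")
    serial_fraction = 1.0 - accelerated_fraction
    return 1.0 / (serial_fraction + accelerated_fraction / accelerator_speedup)


def turnaround_hours(baseline_hours: float,
                     accelerated_fraction: float,
                     accelerator_speedup: float) -> float:
    """Estimated wall-clock time after acceleration."""
    return baseline_hours / overall_speedup(accelerated_fraction, accelerator_speedup)


if __name__ == "__main__":
    # Hypothetical inputs: 75% of the solve is GPU-friendly and runs 3x faster there.
    speedup = overall_speedup(0.75, 3.0)
    hours = turnaround_hours(10.0, 0.75, 3.0)
    print(f"overall speedup: {speedup:.2f}x")
    print(f"a 10-hour job would take about {hours:.1f} hours")
```

Under these assumed inputs, a job the length of the 10-hour runs mentioned earlier would finish in roughly half the time. The same formula also shows the ceiling: with 75% of the work accelerated, the overall speedup can never reach 4x, however fast the GPU is.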

Simulation of Astrobotic’s robots occurs primarily in a software-driven environment. Shown here are ANSYS simulation results of a module. With an enhanced GPU-powered HP Z800 workstation, Astrobotic expects to do something previously unthinkable: simulating the entire launch-to-landing sequence from a desktop system. Image courtesy of Astrobotic

“The NVIDIA Maximus-powered system is like getting three people’s worth of use on a single machine,” observes Calaiaro. “This system is a beast. We haven’t yet found anything it can’t handle—even simultaneous CAD, analysis and additional number-crunching in remote rendering jobs.”

With the new HP workstation, Calaiaro saw significant speedup when running jobs involving 1.5 million DOF or more. “Now we can do complete analyses on our lander that runs 2 million to 3 million DOF, which means we can refine and test our models more completely and in less time,” he notes.

To gain an even greater power boost, Astrobotic is also refining its own in-house software code to support GPU acceleration. In addition, Calaiaro is planning to augment his current Maximus setup with one more NVIDIA Tesla GPU. This would give the already-powerful workstation not one, but two NVIDIA Tesla GPUs dedicated to simulation jobs. The setup would allow Calaiaro to do something previously unthinkable: simulating the entire launch-to-landing sequence from a desktop system.

Design and Simulation, Side by Side

Previously, Astrobotic engineers employed a workflow that kept design and simulation in separate cycles because compute-intense simulation runs interfered with normal design work on workstations. But the new Maximus architecture is prompting engineers to rethink such a strategy.

“Analysis informs design,” Calaiaro points out. “The ability to run analysis at will, not having to wait to do it at night, not having to schedule it for a particular time—that means you’ll end up with a more informed design.”

A workflow that facilitates design and simulation in parallel may not exactly be a new idea, but it certainly is “the way we should work,” he adds.

More Info

For more information on this topic, visit deskeng.com.


About the Author

Kenneth Wong is Digital Engineering’s resident blogger and senior editor. Email him at [email protected] or share your thoughts on this article at digitaleng.news/facebook.
