On-Demand Computing with Altair’s PBS Works

Tapping into private and public high performance computing systems.


October 1, 2010

By Kenneth Wong

Network computing was much simpler in the ’90s, when most computers (desktops, laptops, and servers) came with single-core processors. Each node, usually a single physical box on the network, typically had one computing core. Now that multi-core processors have become the norm in both the consumer and professional markets, a single node may contain as many as 12 computing cores.

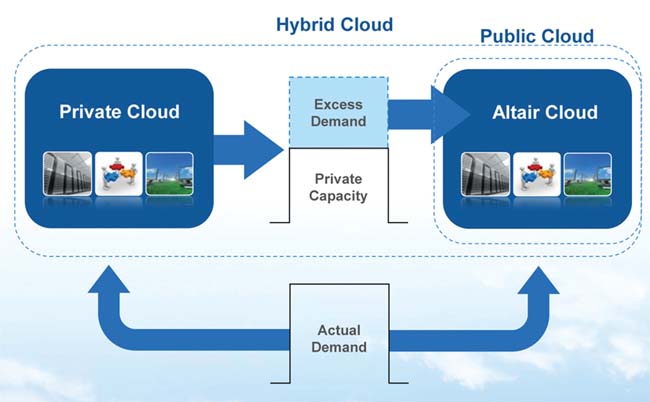

As this diagram shows, when users find their local resources insufficient, they can tap into Altair’s cloud-hosted HPC environment to run the job.

If multi-core CPUs add complexity, they also open up new opportunities. One is to manage and prioritize your computing demands strategically using job schedulers. Another is to bundle your existing multi-core machines into a virtual cluster that can take on rendering, animation, analysis, and other heavy-duty tasks during off hours and on weekends. These approaches enable what global engineering firm Altair and many others call desktop cycle harvesting, a way to get more out of your hardware investment.

HPC Management for All Sizes

PBS Works, Altair’s Portable Batch System software suite for distributed computing, can set up and manage high-performance computing (HPC) systems ranging from as few as four to eight cores to as many as 120,000 cores.

PBS Works used to be one behemoth application encompassing workload management, cluster setup, and cloud-computing access. It has been refashioned into three complementary components:

- PBS Professional (commercial grade HPC workload management)

- PBS Analytics (usage monitor, reports, and planning)

- PBS Catalyst (job submission application)

The drag-and-drop job management interface, PBS Catalyst, is available as a desktop client or as a browser-based web client. The client serves as a portal to the processing power available on your local machine or your company’s internal network. If internal computing capacity is not sufficient for your jobs, you can use the same client to reach into Altair’s on-demand computing environment. Altair offers PBS Catalyst as a free download for managing up to four local cores.
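The fallback order described above (local cores first, then the internal network, then Altair’s on-demand environment) can be pictured with a small Python sketch. The tier names, core counts, and the `choose_target` function are hypothetical; this is not how PBS Catalyst makes the decision internally, only an illustration of the overflow idea.

```python
from dataclasses import dataclass


@dataclass
class Tier:
    """A pool of computing resources (hypothetical model, not a PBS API)."""
    name: str
    free_cores: int


def choose_target(cores_needed: int, tiers: list[Tier]) -> str:
    """Return the first tier, in preference order, with enough free cores.

    Tiers are assumed to be ordered from most to least preferred:
    local machine, internal network, then a hosted on-demand environment.
    """
    for tier in tiers:
        if tier.free_cores >= cores_needed:
            return tier.name
    raise RuntimeError(f"no tier can supply {cores_needed} cores")


tiers = [
    Tier("local", 4),             # a workstation's own cores
    Tier("internal", 64),         # company cluster (illustrative size)
    Tier("altair-cloud", 1024),   # on-demand capacity (illustrative size)
]

print(choose_target(2, tiers))    # local
print(choose_target(32, tiers))   # internal
print(choose_target(500, tiers))  # altair-cloud
```

A real scheduler also weighs cost, data transfer, and queue wait times; the sketch only checks free cores.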

“We’re taking the technology that was once exclusive to large organizations and bringing it to the smaller ones that don’t have all the necessary expertise,” says Robert Walsh, Altair’s director of business development for PBS GridWorks products.

PBS Works consists of a server, a scheduler, and machine-oriented mini-servers (MOMs). The server creates, monitors, and tracks batch jobs. The scheduler holds the policies (administrative rights, credentials, access permissions, and so on).

The MOMs (mothers of all execution jobs) monitor the execution nodes’ native resources (CPUs, disk, etc.) and custom resources (for instance, tagging a node as having Altair’s Radioss FEA program tells the scheduler to route Radioss jobs to that node). They also monitor jobs in progress and help clean up the nodes where jobs have run, so the next jobs can run on those nodes without competing with leftover job remnants.
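The routing idea behind custom resources can be sketched as a simple matching rule: a job is eligible for a node only if the node has enough CPUs and carries every custom tag the job asks for. The `Node`, `Job`, `eligible_nodes`, and `clean_up` names below are hypothetical and model the concept only; they are not PBS Professional’s configuration syntax or internals.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    ncpus: int
    custom: set[str] = field(default_factory=set)     # custom resource tags, e.g. "radioss"
    scratch: list[str] = field(default_factory=list)  # leftover files from earlier jobs


@dataclass
class Job:
    name: str
    ncpus: int
    needs: set[str] = field(default_factory=set)      # custom resources the job requires


def eligible_nodes(job: Job, nodes: list[Node]) -> list[str]:
    """Names of nodes with enough CPUs and every custom resource the job requests."""
    return [n.name for n in nodes if n.ncpus >= job.ncpus and job.needs <= n.custom]


def clean_up(node: Node) -> None:
    """Remove leftovers so the next job starts on a clean node."""
    node.scratch.clear()


nodes = [
    Node("n1", 8),
    Node("n2", 12, {"radioss"}),
    Node("n3", 4, {"radioss"}),
]
print(eligible_nodes(Job("crash-sim", 8, {"radioss"}), nodes))  # ['n2']
print(eligible_nodes(Job("post-process", 4), nodes))            # ['n1', 'n2', 'n3']
```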

Drag and Drop Job Scheduler

The tabbed interface of PBS Catalyst shows a summary of the jobs you have generated, along with a list of the local and remote computing resources (HPC nodes) at your disposal. You can configure the client to query the backend systems at regular intervals for updated lists of resources and jobs. Through the client interface, you can choose the specific HPC system to use and the priority level your job gets in the queue.
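Priority levels in a queue are commonly modeled with a priority heap. The following minimal Python sketch, assuming a hypothetical `JobQueue` class, runs higher-priority jobs first and keeps submission order among jobs of equal priority. It illustrates the general technique, not PBS Professional’s actual scheduling algorithm, which also applies site policies.

```python
import heapq
import itertools


class JobQueue:
    """Toy priority queue: higher priority runs first; ties keep submission order."""

    def __init__(self) -> None:
        self._heap: list[tuple[int, int, str]] = []
        self._counter = itertools.count()

    def submit(self, job_name: str, priority: int) -> None:
        # heapq is a min-heap, so negate the priority to pop the highest first;
        # the increasing counter breaks ties in first-in, first-out order.
        heapq.heappush(self._heap, (-priority, next(self._counter), job_name))

    def next_job(self) -> str:
        _, _, job_name = heapq.heappop(self._heap)
        return job_name

    def __len__(self) -> int:
        return len(self._heap)


q = JobQueue()
q.submit("overnight-render", 1)
q.submit("urgent-fea", 10)
q.submit("cfd-run", 5)
q.submit("second-urgent", 10)
print([q.next_job() for _ in range(len(q))])
# ['urgent-fea', 'second-urgent', 'cfd-run', 'overnight-render']
```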

While the PBS Catalyst client lets you customize and manage your own local resources, you can’t do the same with the backend distributed networks (the company’s internal network and the remote cloud network provided by Altair). You can, however, customize your jobs and submit them to those systems within the boundaries set by IT administrators.

“The reasoning behind this approach is, we’re giving engineers the ability to manage their own local resources, but the backend resources are shared resources managed by IT staff, so users won’t have the ability to do whatever they want to those systems,” explains Walsh. Configuring those systems is left to network administrators and IT managers who have the credentials to do so through PBS Professional.
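One way to picture “within the boundaries set by IT administrators” is a request check against limits an administrator has fixed for a shared queue. The `QueueLimits` fields and the `validate_request` function are hypothetical illustrations, not PBS Professional settings; the point is that users choose their own values while the limits themselves are not theirs to change.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: users cannot modify the admin-set limits
class QueueLimits:
    """Bounds an administrator sets on a shared queue (hypothetical fields)."""
    max_cores: int
    max_walltime_hours: float
    max_priority: int


def validate_request(cores: int, walltime_hours: float, priority: int,
                     limits: QueueLimits) -> list[str]:
    """Return a list of violations; an empty list means the request is accepted."""
    problems = []
    if cores > limits.max_cores:
        problems.append(f"cores {cores} exceeds limit {limits.max_cores}")
    if walltime_hours > limits.max_walltime_hours:
        problems.append(f"walltime {walltime_hours}h exceeds limit {limits.max_walltime_hours}h")
    if priority > limits.max_priority:
        problems.append(f"priority {priority} exceeds limit {limits.max_priority}")
    return problems


shared = QueueLimits(max_cores=256, max_walltime_hours=24, max_priority=5)
print(validate_request(128, 12, 3, shared))  # []
print(validate_request(512, 12, 9, shared))
# ['cores 512 exceeds limit 256', 'priority 9 exceeds limit 5']
```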

If you run a certain job repeatedly, you may save the job setup with preferred parameters in your local profile. The next time you need to run the job, you can simply drag and drop it—with all your saved settings—into the appropriate queue.
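Saving a job setup for reuse amounts to storing named parameter sets and recalling them later, optionally with a tweak or two. This Python sketch keeps hypothetical profiles in a local JSON file; the file layout, parameter names, and functions are assumptions for illustration and do not reflect how PBS Catalyst stores profiles.

```python
import json
import tempfile
from pathlib import Path


def save_profile(path: Path, name: str, settings: dict) -> None:
    """Store a named job setup in a local JSON profile file."""
    profiles = json.loads(path.read_text()) if path.exists() else {}
    profiles[name] = settings
    path.write_text(json.dumps(profiles, indent=2))


def load_profile(path: Path, name: str, **overrides) -> dict:
    """Recall a saved setup, optionally overriding individual parameters."""
    settings = json.loads(path.read_text())[name]
    return {**settings, **overrides}


with tempfile.TemporaryDirectory() as d:
    p = Path(d) / "profiles.json"
    save_profile(p, "radioss-crash", {"solver": "radioss", "cores": 16, "queue": "internal"})
    print(load_profile(p, "radioss-crash", cores=32))
    # {'solver': 'radioss', 'cores': 32, 'queue': 'internal'}
```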

Insight from History

The purpose of PBS Analytics is to let users monitor and study job queues, resources accessed, time requested, and other historical patterns to help develop enterprise-wide HPC strategies. Walsh says PBS Analytics can help you answer questions such as: Are you short on licenses or computing cores? How many jobs are you running? Who’s running most of the failed jobs? Do they need additional training?
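Questions like “How many jobs are you running?” and “Who’s running most of the failed jobs?” boil down to aggregating job-history records. The records below are made-up sample data, and the `summarize` function is a hypothetical illustration of the kind of report involved, not PBS Analytics itself.

```python
from collections import Counter

# Made-up job-history records; a real deployment would read scheduler accounting logs.
history = [
    {"user": "ana", "app": "radioss", "cores": 16, "status": "ok"},
    {"user": "ben", "app": "nastran", "cores": 8, "status": "failed"},
    {"user": "ben", "app": "nastran", "cores": 8, "status": "failed"},
    {"user": "ana", "app": "abaqus", "cores": 32, "status": "ok"},
    {"user": "cho", "app": "ansys", "cores": 8, "status": "failed"},
]


def summarize(records):
    """Return total job count, failures per user, and cores requested per application."""
    total_jobs = len(records)
    failures_by_user = Counter(r["user"] for r in records if r["status"] == "failed")
    cores_by_app = Counter()
    for r in records:
        cores_by_app[r["app"]] += r["cores"]
    return total_jobs, failures_by_user, cores_by_app


total, failures, cores = summarize(history)
print(total)                    # 5
print(failures.most_common(1))  # [('ben', 2)]
print(cores.most_common(1))     # [('abaqus', 32)]
```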

In the future, it may also play a role in the PBS Works R&D team’s efforts to tackle one of the most challenging problems in distributed computing: estimating the time required to complete jobs based on user history.
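To show why history-based estimation is hard, here is a deliberately naive baseline in Python: take the median core-hours of a user’s past runs of the same application and divide by the cores requested. This is not Altair’s approach (the article describes that work as future R&D); the function and record fields are hypothetical, and the perfect-scaling assumption is exactly what real solvers tend to violate.

```python
from statistics import median


def estimate_runtime(history, user, app, cores):
    """Naive estimate in hours: median past core-hours for (user, app) divided by cores.

    Assumes runtime scales perfectly with core count, which real solvers rarely do.
    Returns None when there is no matching history.
    """
    core_hours = [r["hours"] * r["cores"] for r in history
                  if r["user"] == user and r["app"] == app]
    if not core_hours:
        return None
    return median(core_hours) / cores


runs = [
    {"user": "ana", "app": "radioss", "cores": 16, "hours": 2.0},  # 32 core-hours
    {"user": "ana", "app": "radioss", "cores": 8, "hours": 5.0},   # 40 core-hours
    {"user": "ana", "app": "radioss", "cores": 32, "hours": 1.5},  # 48 core-hours
]
print(estimate_runtime(runs, "ana", "radioss", 20))  # 2.0
print(estimate_runtime(runs, "ben", "radioss", 20))  # None
```

Note how the three sample runs already disagree on core-hours; data volume, model size, and parallel efficiency all matter, which is why a useful estimator needs far more than this.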

Open Architecture

Altair develops and markets its own computer-aided engineering (CAE) solution, HyperWorks, but PBS Works is designed with an open architecture that lets users schedule jobs for third-party software as well. “Even though I’m at a CAE company, I work with all the solvers from others, from Abaqus (from Dassault Systèmes) and MSC (Nastran, Patran, etc.) to ANSYS. They’re all our partners,” says Walsh.

FAQs for HPC

Fielding daily inquiries from business prospects, Robert Walsh, Altair’s director of business development for the PBS GridWorks product line, has noticed that certain issues tend to be on most HPC buyers’ minds. Here are a few, with the responses he usually gives.

Q: If I want to set up my own private HPC system, do I need to acquire machines with the same type of CPUs? Or can I put together a system with a mix of CPUs? (For example, CPUs with varying numbers of cores and speeds.)

Q: Can PBS Works give me an estimated amount of time a certain job will take to complete based on the number of cores designated and the volume of data involved?

Q: How do I get billed for using on-demand computing?

Q: Can I use PBS Works to schedule jobs on GPU clusters?

More Info:

PBS Works

Kenneth Wong writes about technology, its innovative use, and its implications. One of DE’s MCAD/PLM experts, he has written for technology magazines and writes DE’s Virtual Desktop blog at deskeng.com/virtual_desktop/. You can follow him on Twitter at KennethwongSF, or send e-mail to [email protected].


About the Author

Kenneth Wong is Digital Engineering’s resident blogger and senior editor. Email him at [email protected] or share your thoughts on this article at digitaleng.news/facebook.
