Optimize Workstations for Faster Simulations

Nearly every workstation can benefit from upgrades and enhancements when it comes to maximizing performance for simulations.

December 1, 2012

By Frank J. Ohlhorst

The old adage “time is money” proves to be sage advice for anyone in the realm of engineering. Nowhere is this truer than with complex simulations, where cutting time to completion by a few hours, or even a few minutes, can noticeably affect a project’s outcome.

Take, for example, complex engineering scenarios such as flow simulations, molecular dynamics and weather modeling, each of which requires significant computational power to complete in an acceptable amount of time. That raises a vague but important question: What is an acceptable amount of time?

That question is not always easy to answer, but some common-sense rules apply. For example, a weather simulation that takes two days to forecast tomorrow’s weather would be far from acceptable.

Still, most workstations share a common set of challenges when it comes to maximizing performance for simulations. Those challenges are dictated by the installed hardware and by the software used for a given simulation. For most simulation chores, performance comes down to the CPUs, GPUs, RAM and storage. Each of those elements has a dramatic effect on the efficiency of the workstation and on how well it performs in almost any situation. Let’s look at each of these elements and where maximum performance can be gained.

CPUs Take the Lead

Arguably, the most important element of any workstation is the CPU, which has a direct impact on overall performance. The CPU is usually also the most difficult component to upgrade, because the motherboard, BIOS and several other elements are designed to work with specific CPUs. What’s more, CPU upgrades tend to be expensive, especially when moving from one CPU generation to another. In most cases, it makes more financial sense to buy a new workstation than to deal with the hassles of a CPU upgrade, which may deliver disappointing results.

There is, however, one exception to this rule. Many workstations are designed to support multiple CPUs but are usually ordered with only one CPU socket occupied. In that case, adding a second CPU (typically the same model as the first) can be economical and can deliver an impressive performance increase, provided the simulation software can spread its work across the additional cores.
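How much a second CPU actually helps depends on how much of the simulation runs in parallel. Amdahl's law (a standard rule of thumb, not something the article itself cites) gives a rough estimate: if a fraction p of the runtime scales across processors, doubling the processing resources yields a speedup of 1 / ((1 - p) + p / 2). The sketch below assumes the added CPU matches the first, so filling the second socket doubles the core count; the parallel fractions used are illustrative, not measurements of any real solver.

```python
def amdahl_speedup(parallel_fraction: float, processors: int) -> float:
    """Ideal speedup from Amdahl's law.

    parallel_fraction: share of the runtime (0 to 1) that scales across processors.
    processors: factor by which processing resources grow (2 = a second matching CPU).
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if processors < 1:
        raise ValueError("processors must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / processors)


if __name__ == "__main__":
    # Illustrative parallel fractions, not measurements of any real solver.
    for p in (0.50, 0.90, 0.99):
        print(f"{p:.0%} parallel: one CPU -> two CPUs = {amdahl_speedup(p, 2):.2f}x")
```

With these inputs the loop reports 1.33x, 1.82x and 1.98x: the closer the code is to fully parallel, the closer a second CPU gets to doubling throughput. The formula ignores communication and memory overheads, so treat its output as an upper bound.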

Upgrading System Memory

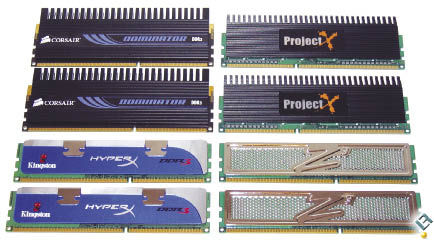

Adding RAM to a workstation has long been one of the easiest ways to increase performance. But in the realm of simulations, there may be some caveats to consider.

Take, for example, the base RAM already installed in a workstation. Most workstations come well equipped with RAM, meaning that adding more may not make much of a difference in performance. Other concerns include how much RAM the OS allows access to, and how much RAM the simulation application can effectively use.

For example, 32-bit operating systems can address at most 4GB of RAM and, in most cases, make only about 3GB to 3.5GB of it usable; many 32-bit applications are limited further, often to 2GB of address space. In those cases, installing more RAM than the OS and application can address offers little return on performance. There are a few exceptions, such as utilities that can use memory above that limit to create RAM drives or caches, but once again, the software has to support those options to make the investment worthwhile.
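The hard ceiling behind these limits is simple arithmetic: a 32-bit address can name only 2^32 distinct bytes (4GiB), and the amount available to software is lower still once hardware reserves part of that range. The sketch below shows that calculation; the reserved_gib parameter is a hypothetical allowance for address space claimed by devices such as graphics memory, and the real figure varies by system. It also ignores extensions such as PAE that some 32-bit operating systems use to reach more physical memory.

```python
GIB = 2 ** 30  # bytes in one gibibyte


def address_ceiling_gib(pointer_bits: int) -> float:
    """Largest memory, in GiB, that a flat address of this width can reach."""
    return 2 ** pointer_bits / GIB


def addressable_ram_gib(installed_gib: float, pointer_bits: int,
                        reserved_gib: float = 0.0) -> float:
    """RAM software can actually reach (ignores PAE-style extensions).

    reserved_gib is a hypothetical allowance for address space taken by
    hardware such as graphics memory; the real figure varies by system.
    """
    return min(installed_gib, address_ceiling_gib(pointer_bits) - reserved_gib)


if __name__ == "__main__":
    print(address_ceiling_gib(32))            # 4.0
    print(addressable_ram_gib(16, 32, 0.75))  # 3.25
    print(addressable_ram_gib(16, 64))        # 16
```

In other words, on a 32-bit system with an assumed 0.75GiB reserved, 16GiB of installed RAM behaves like 3.25GiB, while a 64-bit system can use all of it.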


If neither the software nor a 32-bit OS is a limiting factor, the rule becomes “the more RAM, the better.” Still, there are limitations to consider, and they center on system design and diminishing returns.

When adding RAM, the most important thing to look at is how the OS and the simulation application will use that memory. The questions become “How much memory can the simulation consume?” and “How will the OS allocate that memory?” Ideally, the simulation software will use as much RAM as it is given and use it efficiently. The best source of that information is the software vendor.
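Before asking the vendor, a back-of-envelope estimate can frame “How much memory can the simulation consume?”: cells in the model, times values stored per cell, times bytes per value. The sketch below is a hypothetical helper, not any vendor's sizing method; the overhead_factor parameter is an assumed multiplier for solver data beyond the stored fields (mesh connectivity, matrices, buffers) and has to come from the vendor or from measurement.

```python
GIB = 2 ** 30  # bytes per gibibyte


def estimate_field_memory_gib(cells: int, values_per_cell: int,
                              bytes_per_value: int = 8,
                              overhead_factor: float = 1.0) -> float:
    """Back-of-envelope RAM (GiB) to hold per-cell solution fields.

    bytes_per_value defaults to 8 (one double-precision float).
    overhead_factor is a hypothetical multiplier for everything else a solver
    keeps in memory; 1.0 means the stored fields only.
    """
    raw_bytes = cells * values_per_cell * bytes_per_value
    return raw_bytes * overhead_factor / GIB


if __name__ == "__main__":
    # Hypothetical model: 50 million cells, 10 stored values per cell.
    print(f"{estimate_field_memory_gib(50_000_000, 10):.2f} GiB for the fields alone")
```

For that hypothetical model, the fields alone come to roughly 3.7GiB before any solver overhead is counted.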

Another consideration is RAM speed: some workstations support several types of RAM with different speed ratings. For example, a system may support DDR3-1600 ECC dual in-line memory modules (DIMMs) but have shipped with slower DDR3-1333 memory, leaving room for an upgrade to faster RAM.

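The speed at which RAM actually runs is dictated by the motherboard and its memory controller, so simply plugging in faster RAM does not guarantee better performance. Modules rated faster than the platform supports will run at the platform’s lower speed; the supporting hardware must be able to use the faster rating.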
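The potential gain from faster modules can be estimated from their rating. A DDR3 rating such as DDR3-1600 is a transfer rate (1,600 million transfers per second), and each memory channel is 64 bits (8 bytes) wide, so theoretical peak bandwidth per channel is the rate times 8 bytes. The sketch below is that arithmetic only; sustained bandwidth in real workloads is lower, and the gain appears only if the memory controller actually runs the modules at the faster rate.

```python
BUS_WIDTH_BYTES = 8  # a standard DDR3 memory channel is 64 bits wide


def peak_bandwidth_mb_s(transfer_rate_mt_s: float, channels: int = 1) -> float:
    """Theoretical peak bandwidth in MB/s (1 MB = 10**6 bytes)."""
    return transfer_rate_mt_s * BUS_WIDTH_BYTES * channels


if __name__ == "__main__":
    slow = peak_bandwidth_mb_s(1333)
    fast = peak_bandwidth_mb_s(1600)
    print(f"DDR3-1333: {slow:,.0f} MB/s per channel")
    print(f"DDR3-1600: {fast:,.0f} MB/s per channel")
    print(f"Theoretical gain: {fast / slow - 1:.0%}")
```

Using the nominal ratings, this prints 10,664 and 12,800 MB/s per channel, a theoretical gain of about 20%; workstations with several populated channels multiply both figures.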

Improve Disk Drive Performance with SSDs

Most workstations are designed to balance capacity against performance, at least when it comes to disk storage. Because engineering files and workloads can be massive, workstations are normally equipped with large-capacity hard drives, which by design often trade speed for capacity compared with smaller, faster-spinning drives.

Recent improvements in drive technology, namely solid-state drives (SSDs), have all but eliminated the size-versus-performance trade-off. SSDs are available in large capacities and offer many benefits over traditional spindle-based hard drives.

SSDs can eliminate the bottleneck created by traditional platter-based drives and significantly improve overall system performance. High-performance workstations, especially those used for CAD, simulation or graphic design, are normally equipped with the highest-performance CPU that makes sense for the budget.

Nevertheless, disk I/O performance can be just as important in daily use, since it directly affects how long a job takes to complete. For most workstation users, time is literally money, so finishing the current job and moving on to the next one is essential.

Traditionally, performance workstations have used small computer system interface (SCSI) drives with very high rotational speeds (15,000 rpm) to reduce disk I/O latency. Combining the best-performing drives in a redundant array of independent disks (RAID) 1 mirror or, for more performance, a RAID 0 stripe creates an effective, albeit pricey, solution to the performance challenges inherent in traditional mechanical drives.

SSDs are not hampered by mechanical latency, so rotational latency is not a meaningful benchmark for comparing SSDs with traditional drives. Performance is better judged in input/output operations per second (IOPS), and SSDs far outperform mechanical drives in that category.
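The latency argument can be made concrete. For a spinning drive, each random I/O waits on average for a head seek plus half a platter revolution, so a rough ceiling on random IOPS is 1,000 divided by that service time in milliseconds. The sketch below ignores transfer time, caching and command queuing, and the 3.5 ms average seek time is an assumed figure for illustration, not the specification of any particular drive. An SSD has neither a seek term nor a rotational term, which is why IOPS rather than rotational latency is the useful comparison.

```python
def avg_rotational_latency_ms(rpm: int) -> float:
    """Average wait for the target sector: half of one platter revolution."""
    ms_per_revolution = 60_000 / rpm
    return ms_per_revolution / 2


def random_iops_estimate(rpm: int, avg_seek_ms: float) -> float:
    """Rough random-I/O rate for one mechanical drive.

    Ignores transfer time, caching and command queuing.
    """
    service_time_ms = avg_seek_ms + avg_rotational_latency_ms(rpm)
    return 1000 / service_time_ms


if __name__ == "__main__":
    print(avg_rotational_latency_ms(15_000))  # 2.0 ms
    print(avg_rotational_latency_ms(7_200))   # about 4.17 ms
    # The 3.5 ms seek time is an assumed figure, not a drive specification.
    print(f"{random_iops_estimate(15_000, 3.5):.0f} IOPS")
```

Even a 15,000 rpm drive spends about 2 ms per random request just waiting for the platter, which caps it at a couple of hundred random IOPS under these assumptions.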

That performance advantage grows even further when SSDs are combined in a RAID array. RAID 0 or RAID 1 is the norm for workstation use, though in some cases RAID 5 or other configurations may be warranted.

Adding SSDs to a RAID array for extra capacity does not meaningfully increase latency. If anything, performance improves as more drives are added: striping (and, for reads, mirroring) spreads I/O across drives, and with SSDs those gains are not held back by the mechanical latency that limits physical drives.
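Striping is easy to picture as an address mapping. In RAID 0, the array cuts data into fixed-size stripe units and deals them out round-robin across the drives, so a large sequential transfer keeps every drive busy at once; in RAID 1, every write lands on each mirror. The sketch below models only that block placement (no parity, caching or controller behavior), and the function names and the four-drive, two-blocks-per-unit layout are hypothetical.

```python
from typing import List, Tuple


def raid0_locate(logical_block: int, num_disks: int,
                 stripe_blocks: int) -> Tuple[int, int]:
    """Map a logical block to (disk index, block offset on that disk) in RAID 0.

    Data is cut into stripe units of `stripe_blocks` blocks, dealt
    round-robin across the disks.
    """
    stripe_unit, offset_in_unit = divmod(logical_block, stripe_blocks)
    disk = stripe_unit % num_disks          # which drive holds this unit
    row = stripe_unit // num_disks          # how many full stripes precede it
    return disk, row * stripe_blocks + offset_in_unit


def raid1_targets(logical_block: int, num_disks: int) -> List[Tuple[int, int]]:
    """In RAID 1, a write goes to the same block on every mirror."""
    return [(disk, logical_block) for disk in range(num_disks)]


if __name__ == "__main__":
    # Hypothetical layout: 4 drives, 2 blocks per stripe unit.
    for block in range(10):
        print(block, raid0_locate(block, num_disks=4, stripe_blocks=2))
    print(raid1_targets(7, num_disks=2))
```

Consecutive stripe units land on drives 0, 1, 2 and 3 in turn before wrapping back to drive 0, which is where the throughput gain of striping comes from.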

As SSDs continue to fall in price, the technology makes sense for those looking to maximize simulation performance, where large disk writes and reads are the norm.

Graphics Cards

Nothing affects the visual performance of a simulation more than the graphics card. While a lot of number crunching and data movement goes on behind the scenes during a simulation, it is the visual representation that leaves the lasting impression on the observer. Whether the simulation shows flow, perspective or stress points matters less here; what counts is how quickly, smoothly and accurately the simulated event is represented.

Workstation graphics cards aren’t exactly cheap and represent a significant investment when upgrading a workstation. Understanding the value of that investment is more complex, however, because it is entirely possible to overinvest in graphics card technology.

The trick is to correlate needed performance against overall graphics card capabilities, which can be determined with the help of the simulation software vendor. Most simulation software vendors offer recommendations on graphics hardware, and actually do a pretty good job of correlating simulation performance to a given hardware platform.

That said, with some experimentation and in-depth understanding of graphics cards or GPUs, users may be able to pick better performing products than what is suggested by the vendor. It all comes down to what to look for in a graphics card.

Once again, the simulation software dictates the best choice. For example, software that depends on 3D capabilities or other advanced imaging features may dictate the type of card to choose. Fortunately, there are many on the market.

Simply put, a GPU processes and renders the graphics your computer displays. Because it is built for parallel processing, it is typically more efficient than a CPU on software designed to take advantage of it. The GPUs best optimized for professional graphics-intensive applications, such as design visualization and analysis, are found in workstation-caliber AMD FirePro and NVIDIA Quadro graphics cards.

Professional 2D cards can handle some 3D processing but are not optimized for regular 3D applications, so they generally aren’t well suited for engineering. For professional-level simulation work, a Quadro or FirePro 3D add-in card is probably a must. Each of these product lines includes roughly half a dozen models spanning four categories: entry-level, mid-range, high-end and ultra-high-end. (Desktop Engineering will review a number of graphics cards in an upcoming issue.)

There are always exceptions, but most buyers will want to match the performance and capabilities of the GPU with the rest of the system—that is, an entry-caliber card for an entry-caliber workstation. Achieving good balance, where each component hits a performance level that is supported by the rest of the system, is the best way to maximize ROI for your workstation purchase and optimize your productivity.

Figure: Graphics cards can boost the speed of many types of simulation.

One thing to remember is that GPUs are not solely for graphics; there is a growing trend to use them for general-purpose computing as well. General-purpose computing on GPUs (GPGPU) is still evolving, but many of the applications that show the most promise are the ones of most interest to engineers and other workstation users: computational fluid dynamics (CFD) and finite element analysis (FEA). Simulation software developers are porting code to harness GPUs for faster results. As you might expect, CPU manufacturers are not prepared to relinquish ground to GPGPUs. Intel, for instance, has released its Many Integrated Core (Intel MIC) architecture, which combines many cores on a single chip for highly parallel processing using common programming tools and methods.

Frank Ohlhorst is chief analyst and freelance writer at Ohlhorst.net. Send e-mail about this article to [email protected].
