Preconfigured HPC for Simulation

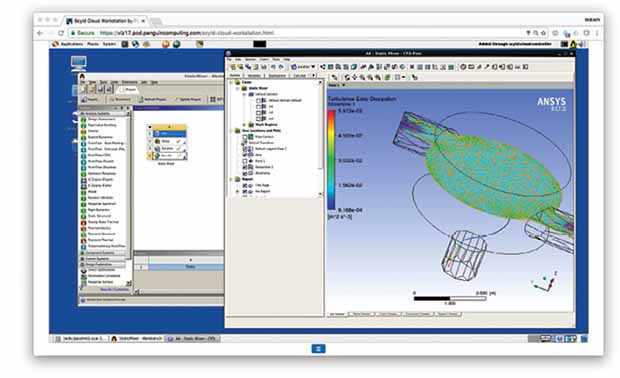

POD, or Penguin Computing on Demand, allows you to run simulation on Penguin Computing’s on-demand infrastructure. Image courtesy of ANSYS.

April 4, 2017

Cloud-hosted technologies are generally easier to use and deploy. That, at any rate, is what consumers expect. The precedents set by browser-based ERP (enterprise resource planning), PLM (product lifecycle management) and CRM (customer relationship management) offerings certainly reinforce this notion. But that expectation can give the wrong idea about do-it-yourself HPC (high-performance computing) for simulation.

“With AWS (Amazon Web Services), you’ll have to create a cluster that’s right for the job you want to perform. There are some tools for managing queues and the environment. But they can’t accommodate some interactive jobs, or people who want to use their own schedulers, which is different from what AWS provides,” says Kevin Kelly, sales director, Penguin Computing, Penguin on Demand (POD).

“It’s not just cloud-based HPC the customers are looking for,” says Wim Slagter, director, HPC & cloud marketing at ANSYS. “What they want is simple, intuitive job submission procedures, as well as compute and graphics nodes that they can scale up or down easily. If they have to develop the simulation environment themselves, they might just walk away. Doing that with job schedulers and storage setup on a public cloud server can easily take months.”

“Configuring simulation or deep learning applications in the cloud is very complex,” says Tyler Smith, director of strategic partnerships at Rescale. “Optimizing an application on Azure, Google or AWS can take months. You need expertise in both HPC and the simulation use case. Many businesses don’t have that expertise in-house.”

Preconfigured HPC

Providers like Penguin Computing, Rescale and other HPC vendors hope to capture the simulation market by bundling backend hardware with frontend software, a combo designed to make HPC-powered simulation easier. By preconfiguring the hardware infrastructure to fit the clients’ simulation workflow and providing online tools, they eliminate the IT burden that would have otherwise fallen on the user.

“Our heritage is in building optimized, large HPC clusters. That’s the genesis of Penguin Computing,” says Kelly. “When we talk to customers about the application they want to run on HPC, often we find out it’s an application we already have, ready to run on POD [as a preloaded, preconfigured option]. With AWS or [Microsoft] Azure, you’ll have to put together an appropriate system for your application.”

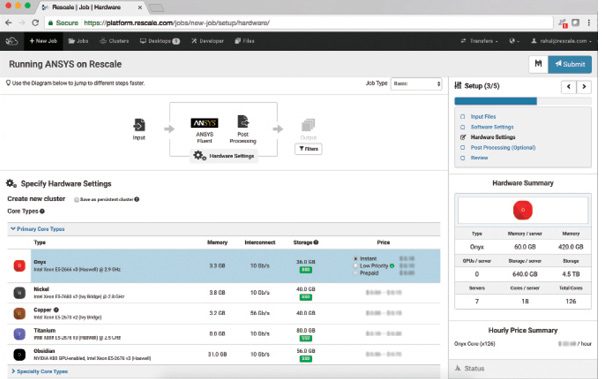

The cluster-configuration interface of Rescale’s on-demand simulation infrastructure. Image courtesy of Rescale.

“Rescale’s offerings are extremely turnkey,” says Smith. “We’re not a consulting company. But with enterprise sales, we do act as advisors.” Rescale doesn’t own or operate its own data centers. The company provides on-demand hardware infrastructure in partnership with cloud infrastructure providers like AWS and Azure.

“Virtualized environments are not appropriate for many HPC workloads,” notes Kelly. “A bare metal environment [like POD] runs optimally for many HPC applications. Besides, we meter in three-second increments. Some of the providers meter by the hour.”

If a simulation job takes five hours and three seconds, for example, a vendor who meters by the hour will bill you for six hours of processing time. Under Penguin Computing’s three-second metering, as Kelly explains, the bill covers exactly five hours and three seconds.
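The arithmetic behind this comparison can be sketched in a few lines of Python. The function name `billed_seconds` and its parameters are illustrative assumptions, not part of any vendor’s billing system; the sketch simply rounds a runtime up to the next whole metering increment.

```python
def billed_seconds(runtime_seconds: int, increment_seconds: int) -> int:
    """Round a runtime up to the next whole metering increment.

    Hypothetical helper for illustration only; real invoices may
    include minimum charges or other terms not modeled here.
    """
    if runtime_seconds < 0 or increment_seconds <= 0:
        raise ValueError("runtime must be >= 0 and increment must be > 0")
    # Integer ceiling division: number of increments needed to cover the runtime.
    increments = -(-runtime_seconds // increment_seconds)
    return increments * increment_seconds


# The job from the example: five hours and three seconds.
runtime = 5 * 3600 + 3  # 18003 seconds

hourly = billed_seconds(runtime, 3600)    # metered by the hour
three_second = billed_seconds(runtime, 3)  # metered in three-second increments

print(hourly / 3600)   # 6.0 hours billed
print(three_second)    # 18003 seconds billed, i.e. 5 h 0 min 3 s
```

In this case, hourly metering bills 3,597 seconds that the job never used. The gap shrinks as a proportion of the bill for longer jobs, but it is at its worst for many short runs.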

Middleman for Licensing

Specialized HPC vendors also perform another function that general-purpose cloud providers like AWS and Google don’t: sorting out software licensing needs. HPC licensing for simulation is different from single-user or single-machine licensing. It allows the software to run in parallel on dozens, hundreds or even thousands of cores for faster processing. Figuring out the right licensing scheme, or the most economical option for a particular HPC job, can be a stumbling block.

“We have negotiated contracts and proactively figure out go-to market strategies with software vendors,” says Smith. “We’re pushing ISVs [independent software vendors] to implement on-demand licensing. We work closely with companies that haven’t [developed on-demand licensing]. At the end of the day, Rescale doesn’t resell software licenses. But we can facilitate [license acquisition].”

Penguin Computing’s Kelly says the company enables customers to leverage cloud licenses from their software vendors, or can implement workflows with secure access to on-premises license servers. “Many of our customers have great relationships with their ISVs and want to keep that direct relationship,” he says.

Without a licensing mechanism that allows multiple instances of the software to run simultaneously on hundreds of cores, engineers can’t perform what is called design of experiments (DOE), a type of study that evaluates a sweep of design options to identify the best one. Leading ISVs have begun to offer various forms of token-based or usage-based licensing, but the variety of billing and calculation methods can be difficult for novices and ordinary users to decipher. A simpler pay-as-you-go license that makes it easy to predict the cost of an HPC-powered simulation job would go a long way toward encouraging more DOE analyses.
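To see why DOE depends on running many solver instances at once, consider a minimal Python sketch of a full-factorial sweep. The factor names, their values and the 16-cores-per-run figure are hypothetical, chosen only for illustration; real DOE studies often use more economical sampling schemes than a full factorial.

```python
from itertools import product


def full_factorial(factors: dict) -> list:
    """Return one dict per design point, covering every combination of factor levels."""
    names = list(factors)
    levels = (factors[name] for name in names)
    return [dict(zip(names, combo)) for combo in product(*levels)]


def cores_needed(runs: list, cores_per_run: int) -> int:
    """Cores required if every design point is solved at the same time."""
    return len(runs) * cores_per_run


# Hypothetical design variables for a bracket study.
factors = {
    "thickness_mm": [1.0, 1.5, 2.0],
    "material": ["steel", "aluminum"],
    "load_n": [500, 1000],
}

runs = full_factorial(factors)
print(len(runs))               # 12 design points (3 x 2 x 2)
print(cores_needed(runs, 16))  # 192 cores to run them all concurrently
```

Even this small sweep calls for a dozen simultaneous solver instances, which is where single-user or single-machine licensing stops being workable.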

Cloud for Lease with Unlimited Software Use

In 2014, Altair, the company best known for its OptiStruct solver, launched HyperWorks Unlimited Virtual Appliance, a cloud-hosted simulation platform built on AWS infrastructure. The predecessor to the AWS-based version was Altair’s HyperWorks Unlimited Physical Appliance, a turnkey appliance that functions as an onsite HPC cluster for simulation.

“One size doesn’t fit all,” says Rob Walsh, VP of business development for cloud solutions, Altair. “We offer HyperWorks Unlimited Physical Appliance for people using it for [the] long term. It’s a great value for them. But for customers who need a much more dynamic setup, we offer HyperWorks Unlimited Virtual Appliance.”

One unique feature of Altair’s offering is the software bundled with HyperWorks Unlimited. Whether you get the AWS-based virtual appliance or the bare-metal physical appliance, the platform comes with “unlimited use of the HyperWorks computer-aided engineering (CAE) suite with PBS Professional, a leading HPC workload manager,” the company writes.

“If you get the cloud cheap, but you need to pay a lot more for ISV software licenses to run your job, that still inhibits what you can do with on-demand HPC,” Walsh says. “So the value we add [with unlimited software use] is significant.”

HyperWorks Unlimited is not sold, but is instead leased, and is therefore described as “a fully managed HPC appliance,” Walsh says. “We lease out HyperWorks Unlimited hardware on a yearly term. Either the solver or the hardware itself alone would have cost you the same amount, so it’s like getting the other for free.”

HPC-ISV Partnerships

Most simulation software vendors do not own and operate their own data centers—a prerequisite to support on-demand HPC. Many also lack the HPC expertise required to put together application-optimized clusters on the fly. Therefore, a partnership with an on-demand HPC vendor is the most practical approach.

Leading simulation software makers Siemens PLM Software, Dassault Systèmes and ANSYS, for instance, have a relationship with Rescale that enables users to run their software on Rescale’s infrastructure.

“We are ultimately a simulation software provider. That’s what we focus on. We’re good at that,” says Slagter. “Cloud-hosting partners are experts at remote hosting and cloud infrastructure—setting it up, building it and maintaining it. For the short term, ANSYS has no plan to become [an on-demand HPC provider].”

In mid-2015, ANSYS launched The ANSYS Enterprise Cloud, powered by the ANSYS Cloud Gateway and AWS. “The global backbone of public cloud providers like AWS and Azure with cloud data centers around the globe has great appeal to many of our customers,” explains Slagter. “That’s why we’ve developed an Enterprise Cloud solution that is engineered to enable end-to-end simulation to be performed entirely in the public cloud. While our solution provides a complete virtual simulation data center, HPC resources are still at the heart of the system. We have partnered with Cycle Computing, a company that specializes in enabling HPC workloads on public cloud infrastructure, and it is their CycleCloud software that powers the auto-scaling clusters that deliver HPC on demand.”

Hybrid for the Near Future

A startup launching today may have the option to delegate all or most of its IT needs to the cloud. But many midsize and large enterprises that began life in the pre-cloud era didn’t have that luxury. So the majority of them now own, operate and maintain private clusters. Forsaking the hardware they already own for the convenience of the cloud is still a difficult proposition.

“The average lifespan of a cluster is three to five years,” notes Smith. “If you have spent hundreds of thousands of dollars on a cluster, you’re not going to abandon them. You’ll figure out a way to use them.” That is the reason many industry insiders are counting on the hybrid model—onsite clusters augmented with on-demand resources—to persist for the near future.

“Rescale sees opportunity in the hybrid model for the near term,” says Smith. “Currently, many businesses are using on-premise hardware, but that is shifting. The migration can be pretty challenging. We can augment your on-premise resource and make the migration seamless.”
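As a rough illustration of the hybrid model, the Python sketch below fills an onsite cluster first and sends whatever does not fit to on-demand resources. The function name, the greedy first-come policy and the core counts are all assumptions made for illustration; they do not describe how Rescale or any other vendor actually places jobs.

```python
def place_jobs(job_cores: list, onprem_free_cores: int) -> list:
    """Assign each job, in submission order, to 'on-prem' if it fits, else 'on-demand'.

    A deliberately simple greedy policy: no queuing, no preemption,
    and no accounting for data transfer or licensing costs.
    """
    placements = []
    free = onprem_free_cores
    for cores in job_cores:
        if cores <= free:
            placements.append("on-prem")
            free -= cores  # the job occupies onsite cores
        else:
            placements.append("on-demand")  # overflow goes to the on-demand provider
    return placements


# Four jobs, sized in cores, against an onsite cluster with 200 free cores.
print(place_jobs([64, 128, 32, 256], 200))
# ['on-prem', 'on-prem', 'on-demand', 'on-demand']
```

The onsite hardware stays fully used, which protects the investment already made in it, while peak demand spills over to capacity that is paid for only when needed.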

About the Author

Kenneth Wong is Digital Engineering’s resident blogger and senior editor. Email him at [email protected] or share your thoughts on this article at digitaleng.news/facebook.