Recent Progress in CAE Cloud Computing


December 20, 2016

Commentary by Wolfgang Gentzsch and Burak Yenier

The 2016 Compendium of engineering cloud case studies can be downloaded here.

Fifty computer-aided engineering (CAE) cloud experiments per year and 20 engineering simulation case studies in one compendium each year since 2013: that’s the harvest of four years of UberCloud CAE cloud experiments. The objectives of the UberCloud Experiment are 1) creating a community of CAE-in-the-cloud supporters, 2) better understanding cloud computing roadblocks, and 3) identifying solutions to resolve those roadblocks.

With 195 examples of CAE on the cloud, it’s possible to measure the progress of advanced simulation in the cloud over the last four years. Of the 50 cloud experiments in the first year, 26 failed, and the average duration of an experiment was three months. Four years later, not one of the 50 most recent cloud experiments failed, and the average duration of an experiment is now just three days. That includes preparing and accessing the engineering application software in the cloud, running the simulation jobs, evaluating the data via remote visualization, transferring the data back on premise and writing the case study. Selected case studies are then published in an annual Compendium.

Resolving Cloud Roadblocks

One reason for this progress is that most of the cloud roadblocks from four years ago have disappeared, one after the other.

Security was the No. 1 roadblock in 2012, when speculation about cloud datacenter security largely took the place of reasoned argument. Today, security is largely a psychological concern. Security experts widely agree that cloud datacenters are at least as secure as any other well-guarded datacenter on Earth.

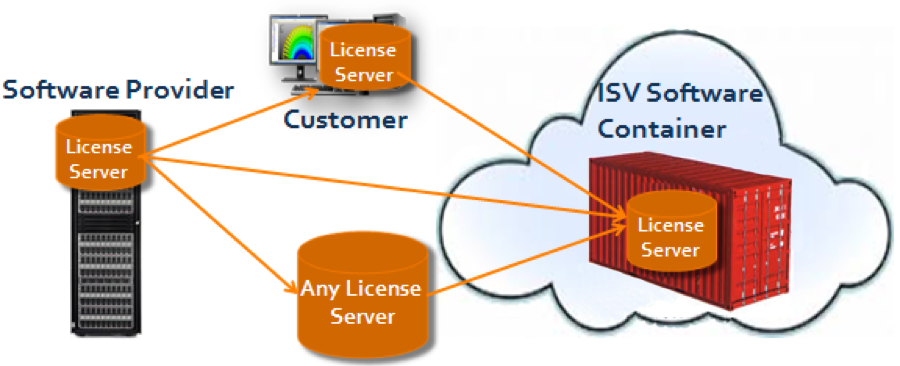

Next was software licensing. For many years, software vendors resisted more flexible and short-term licensing models because they were concerned that cloud business models would disrupt their proven annual perpetual licensing. Only when they understood that a correctly implemented cloud model could be complementary to their traditional licensing model were they ready to start experimenting. Today, most forward-looking software providers have (or are currently developing) their own cloud licensing model.

Resolving cloud licensing: wherever the software license resides it can be transferred to the cloud.

Another major cloud roadblock a few years ago was the belief that, unlike enterprise software, engineering application software was not well suited for the cloud; it had to be ‘cloud-enabled’ first. In fact, the solution came from the bottom up, via hardware. More and more cloud providers added scalable high performance computing (HPC) to their server mix, with the fastest CPUs, InfiniBand interconnects and GPU (graphics processing unit) acceleration.

The cost of cloud was also among the major roadblocks in 2012. Apples-to-oranges comparisons caused some confusion in the market, such as comparing $10 Amazon AWS instances, which are well suited to typical CAE applications at small- and medium-size businesses, with million-dollar supercomputers at national HPC centers. Those distorted comparisons could have damaged the reputation of early cloud providers, especially in the SMB market. But now there is clarity again because it’s easy to get cost information from any cloud provider, and it is well known that on-premise hardware comes with a high markup when total cost of ownership is considered.

Finally, in the early days of cloud computing, users complained that they got lost in the complexity of accessing and using cloud resources, and that once they were using one cloud they could not easily move to another when needed. With the advent of software container technology for CAE and other applications, this complexity disappeared. Software containers bundle the OS, libraries and tools, as well as application codes and even engineering and scientific workflows. Today it is easy to access your application and data through your browser in any cloud, the same way you access your desktop workstation.

Taking all this into account, a healthy mix of in-house and remote cloud computing can often be recommended, with cloud complementing on-premise resources and adding benefits such as seamless and interactive access and use, flexibility, business agility, resource reliability, dynamic up-and-down resource scaling according to need, pay-per-use, and ever-growing, never-aging computing resources.

This commentary is the opinion of Wolfgang Gentzsch, president and co-founder of The UberCloud; and Burak Yenier, CEO and co-founder of UberCloud. For more information visit theubercloud.com.

