The HPC Revolution

Top tech companies push for HPC-driven engineering.

Latest News

November 1, 2010

By Kenneth Wong

Some might consider what Microsoft is proposing at its technical computing portal as playing God in pixels. Others might view it as technology imitating life. Modeling the World, launched in May, showcases Microsoft’s initiative to enable scientists, engineers, and researchers to digitally reproduce many of the complex bio-eco-mechanical systems that affect our lives—weather patterns, traffic flows, disease spread, to name but a few—so they can be used for predictions. What might trigger the next financial collapse? Where will the next deadly cyclone make landfall? What’s the best launch time for NASA’s next space mission?

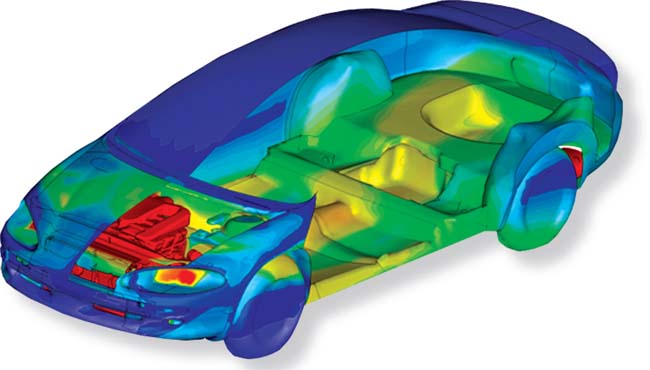

ANSYS adds GPU support, allowing you to run ANSYS Mechanical on GPU-accelerated HPC clusters.

In his personal note posted to the site, Bob Muglia, president of Microsoft Server and Tools Business, writes, “Our goal is to unleash the power of pervasive, accurate, real-time modeling to help people and organizations achieve their objectives and realize their potential … One day soon, complicated tasks like building a sophisticated computer model that would typically take a team of advanced software programmers months to build and days to run, will be accomplished in a single afternoon by a scientist, engineer or analyst working at the PC on their desktop.”

It’s not a coincidence that the site went live in May, a few months before the unveiling of Microsoft HPC Server 2008 R2, the company’s software for supercomputing. Modeling the world, the company believes, will require:

- pushing technical computing to the cloud.

- simplifying parallel development.

- new technical computing tools and applications.

HPC in the Cloud

For most designers and engineers, modeling the world may be confined to rendering, simulation, and analysis. The latest generation of workstations equipped with multicore CPUs and GPUs can easily churn out photorealistic automotive models and architectural scenes produced in high-end 3D modeling software within a few seconds or minutes. In the near future, though, you may be able to tap remote HPC systems through a browser to bring that process close to real time.

At the GPU Technology Conference 2010, NVIDIA cofounder, president, and CEO Jen-Hsun Huang was joined by Ken Pimentel, director of visual communication solutions, media and entertainment, Autodesk; and Michael Kaplan, VP of strategic development, mental images.

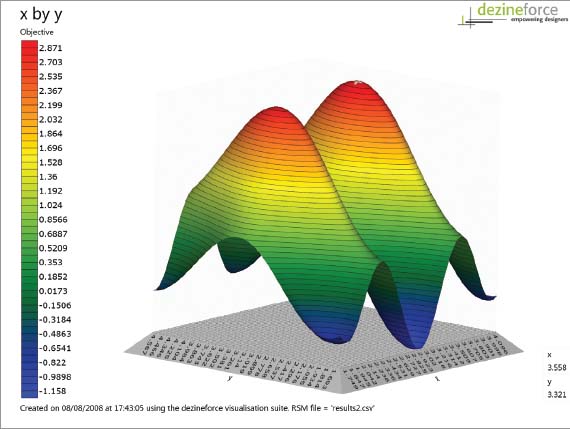

Dezineforce delivers computing resources on demand, giving subscribers remote access to HPC systems for running popular analysis and simulation programs.

Pimentel and Kaplan were there to break the news about an online rendering option, slated to become available to 3ds Max users in the near future (no definitive date yet). Powered by the iray rendering engine from mental images (a wholly owned subsidiary of GPU leader NVIDIA), the new feature lets you create photorealistic renderings from a browser window, tapping into the computing horsepower of a GPU cluster hosted offsite.

Since you’ll be relying on the remote HPC cluster to produce the rendering, you may choose to use a standard laptop to give client presentations, provided you have uploaded your scene data to a remote server accessible via Internet protocols. Should your client require a change (for example, switching from one wall texture to another), you could make the change on the spot and update the rendering at nearly real-time speed. This is not confined to still images. If you move around the scene, the changes in perspective update at the same speed.

What Would You Do if You Had HPC?

Whereas single-core computing usually confines you to simulating one design scenario at a time, the multicore/many-core computing paradigm may let you run several variations of the same scenario simultaneously. You could, for example, simulate airflow patterns around a racecar’s hood designed with several different curvatures, all at the same time.

For small businesses that may not be ready to invest in HPC hardware, cloud-hosted HPC offers a viable alternative, but it is much easier to use when fully incorporated into the design, rendering, or analysis software itself. In computer-aided manufacturing (CAM) and tooling, software developers like CoreTech (maker of Moldex3D) and HSMWorks (maker of HSMWorks, a SolidWorks CAM plug-in) have built HPC support into the software interface itself.

Autodesk has a number of technology previews currently hosted at Autodesk Labs, a portal where the company showcases technologies still in development. Project Centaur, a web-hosted design optimization technology, lets you specify materials, constraints (for example, holes A and B are connected to the rest of the assembly via pin joints), load conditions, and variable parameters, then find the best design option through computation. Similarly, Project Neon lets you upload an AutoCAD file with camera angles and saved views, then use remote computing resources to render the scene.

Since technologies in Autodesk Labs are not yet part of commercial products, it’s unclear how the company plans to license these features or charge for access to them. But they serve as working prototypes of how you will be able to take advantage of remote HPC in the future.

“[Cloud-hosted GPUs] are all running exactly the same iray software that comes with 3ds Max,” says Kaplan. “We can guarantee that the image that you get from [cloud-hosted iray renderer] is exactly the same, pixel for pixel, as what you would get from 3ds Max.”

For those who routinely perform computational fluid dynamics (CFD), finite element analysis, and other engineering simulations, a similar web-hosted option is available from Dezineforce, a UK-headquartered company. “Dezineforce provides HPC-based engineering simulation solutions as pre-integrated, engineer-ready products,” the company explains.

Dezineforce gives you access to analysis software from well-known vendors, including ANSYS, MSC Software, Oasys, and Dassault Systemes. You can buy monthly subscription packages, essentially paying for the number of CPU core hours used on Dezineforce’s HPC platform. Prices start from £5/$7.50 per core hour, excluding the analysis software license cost. This alleviates the cost of purchasing and maintaining a proprietary HPC system.
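As a rough illustration of how per-core-hour billing adds up, the arithmetic can be sketched as below. The $7.50 rate is the starting price quoted above. The job size (48 cores for 6 hours) and the function name `core_hour_cost` are hypothetical, and software license fees are left out, as they are in the quoted price.

```python
def core_hour_cost(cores: int, hours: float, rate_per_core_hour: float = 7.50) -> float:
    """Estimate the hardware cost of a job billed per core hour.

    Assumes a flat rate with no volume discounts; software license
    fees are not included.
    """
    if cores < 0 or hours < 0:
        raise ValueError("cores and hours must be non-negative")
    return cores * hours * rate_per_core_hour


# Hypothetical job: 48 cores running for 6 hours at the quoted starting rate.
cost = core_hour_cost(48, 6)
print(f"${cost:,.2f}")  # prints $2,160.00
```

Actual subscription packages may bundle hours differently, so treat the result as a lower-bound estimate rather than a quote.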

Modeled on SaaS (software as a service), the newer HaaS (hardware as a service) model is catching on among other engineering software makers. Altair, for instance, complements its PBS Works software suite with on-demand HPC services. If your in-house computing resources are inadequate for your needs, you can remotely tap into Altair’s HPC resources, then pay for your usage per hour, per node.

According to Microsoft, the company will soon release an update to Windows HPC Server that allows customers to provision and manage HPC nodes in its Windows Azure cloud platform from within on-premises server clusters, in order to utilize computing power on-demand.

HPC in a Box

On-demand HPC or cloud-hosted HPC is a viable option for those who need it periodically. For those who must perform intense computation tasks regularly, HPC in a box may be a better option. Dezineforce, the same company that offers web-hosted analysis solutions, also sells HPC in a box.

Measuring roughly 28x30x47 in., Dezineforce’s HPC Simulation Appliance is powered by Intel Xeon X5670 processors and is configurable with 48 to 384 GB of memory; 0.5 to 10 TB of storage; and 24, 48, 96, or 192 cores. The appliance is designed for running HPC-based simulations with software from ANSYS, CD-Adapco, Dassault Systemes, MSC Software, and Ricardo, among others.

For years, the GPU was relegated to the realm of digital content creation, game development, and filmmaking. But in the last couple of years, NVIDIA has moved into biomedical imaging, financial analysis, and engineering simulation, areas typically considered HPC territory. The releases of several new GPU-based HPC systems (IBM BladeCenter, T-Platforms TB2, and Cray XE6), all timed to coincide with the GPU Technology Conference, showed that NVIDIA’s Tesla cards are making inroads in HPC.

“The combination of Cray’s new Gemini system interconnect featured in the Cray XE6 system paired with NVIDIA GPUs will give Cray XE6 customers a powerful combination of scalability and production-quality GPU-based HPC in a single system,” announced Cray.

NVIDIA’s foray into HPC is partly made possible by the launch of CUDA, the company’s programming environment for parallel processing on GPUs. Much in the same way you can take advantage of multicore CPUs for parallel processing, you can now break down a large computational operation into smaller chunks, executable on many GPU cores. This opens doors to GPU-driven HPC applications, ranging from financial market analysis and fluid dynamics to ray-traced rendering.
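The idea of breaking one large operation into independent chunks can be sketched in a few lines. This is not CUDA code: it is a minimal Python analogy in which a standard-library thread pool stands in for the many cores, and the workload (a sum of squares) and all function names are hypothetical. In CPython, threads will not actually speed up pure-Python arithmetic, so the sketch shows only the split, map, and combine pattern.

```python
from concurrent.futures import ThreadPoolExecutor


def chunk(data, n_chunks):
    """Split data into n_chunks contiguous slices of nearly equal size."""
    size, extra = divmod(len(data), n_chunks)
    chunks, start = [], 0
    for i in range(n_chunks):
        end = start + size + (1 if i < extra else 0)
        chunks.append(data[start:end])
        start = end
    return chunks


def sum_of_squares(values):
    """The work done independently on a single chunk."""
    return sum(v * v for v in values)


def parallel_sum_of_squares(data, workers=4):
    """Map the per-chunk work across workers, then combine the partial results."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(sum_of_squares, chunk(data, workers)))
    return sum(partials)


data = list(range(1_000))
# The chunked result matches the single-pass result.
print(parallel_sum_of_squares(data) == sum_of_squares(data))  # prints True
```

The pattern works here because each chunk can be processed without knowing anything about the others. Operations with dependencies between chunks need more careful decomposition.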

A Hybrid Processor

As the competition between CPU and GPU heats up, AMD, which provides both types of processors, is planning a hybrid model: an APU (accelerated processing unit) that combines a CPU and a GPU on a single die.

“What we don’t see so much is an entire application running on one architecture or the other [on CPU or GPU],” explains Patricia Harrell, AMD’s director of HPC. “An HPC application most likely will make use of both multi-core CPUs and GPUs.”

AMD plans to release Fusion-brand APUs initially into the desktop and laptop market before migrating them into the server market. At present, the APU is still in development. (The product has a dedicated home page on AMD’s site, but it greets you with, “Coming soon!”) The company currently offers its Opteron CPUs and FirePro GPUs to original equipment makers (OEMs) that want to build HPC systems using AMD processors.

Core-Based Licensing Falls Apart with GPU

The emergence of GPU-driven HPC systems in the market may also persuade high-end simulation and analysis software developers to re-examine their licensing model, traditionally tied to the number of cores.

In late 2010, ANSYS plans to add GPU-powered acceleration to its product line, beginning with ANSYS Mechanical R13. “Performance benchmarks demonstrate that using the latest NVIDIA Tesla GPUs in conjunction with a quad-core processor can cut overall turnaround time in half on typical workloads, when compared to running solely on the quad-core processor. GPUs contain hundreds of cores and high potential for computational throughput, which can be leveraged to deliver significant speedups,” according to a statement from ANSYS.

A single GPU contains hundreds of computing cores, whereas a single machine currently has between 2 and 32 CPU cores, which makes it impractical to license GPU acceleration on a per-core basis. “The number of cores is so much higher on a GPU than on a traditional processor, and the performance per core is so different from traditional processors, that it calls for a different approach on the GPU (e.g., the core-based licensing makes no sense on a GPU),” says Barbara Hutchings, ANSYS’ director of strategic partnership. “To support our new capability, we will be making the GPU available if you have purchased our HPC Pack license. That is, the customer can buy a single license that enables both traditional processor cores and the GPU. This allows customers to choose the hardware configuration that makes sense for their application, without licensing being a barrier to the use of GPU acceleration.”

Altair likewise offers its PBS Works software suite for both CPU and GPU. Robert Walsh, Altair’s director of business development for PBS GridWorks, says, “Today we don’t even charge you for GPU management. So, for example, you have a machine with a quad-core CPU and a 128-core GPU, you’re only paying for using the licenses of PBS Works you run on the CPU.”

Some software users feel handicapped by the traditional per-core licensing model, which limits them to running the analysis on a fixed number of cores even though they may have invested in hardware with a far greater number of cores. “Many simulation vendors are now offering highly scalable licensing,” notes ANSYS’ Hutchings, pointing out that software makers are adapting.

Today’s Netbook is Yesterday’s HPC

By 1970s’ standards, an average desktop or notebook computer available from Best Buy or Fry’s today would be a supercomputer. The computing titan of the time, Cray 1, was installed at Los Alamos National Laboratory in 1976 for $8.8 million. It boasted a speed of 80 MHz, executing 160 million floating-point operations per second (160 megaflops) in 8 MB memory. By contrast, today’s HP Mini 110 (a netbook) in the most basic configuration is powered by a 1.66 GHz Intel Atom processor, with 1 GB memory. The price is roughly $275.

In general, the more computing cores are involved, the faster the analysis can be completed. But the speed gain is not always equal to the increased core count, thus adding another layer of complexity to hardware purchasing decisions. (For example, increasing HPC hardware setup from 8 to 16 cores does not necessarily cut the computing time in half. Results vary from application to application.)
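One common way to reason about this diminishing return is Amdahl’s law, which the discussion above does not name and which is brought in here only as an illustrative model. It assumes a fixed fraction of the job can run in parallel while the rest stays serial. The 90% parallel fraction below is an assumed figure, not a benchmark of any particular solver.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Theoretical speedup over one core under Amdahl's law.

    parallel_fraction is the share of the runtime that can be spread
    across cores; the remainder is assumed to run serially.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


p = 0.90  # assumed: 90% of the job parallelizes
s8 = amdahl_speedup(p, 8)
s16 = amdahl_speedup(p, 16)
print(f"8 cores: {s8:.2f}x, 16 cores: {s16:.2f}x, ratio: {s16 / s8:.2f}")
# prints 8 cores: 4.71x, 16 cores: 6.40x, ratio: 1.36
```

Under these assumptions, doubling from 8 to 16 cores shortens the run by only about a quarter (a factor of 1.36 rather than 2). Real applications also pay communication and memory costs that this simple model ignores.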

Data Deluge

With nearly every consumer and company creating content and sharing it through public forums and social media, the democratization of technology seems to have produced an unforeseen side effect: what The Economist calls “The Data Deluge” (Feb. 27-March 5, 2010). Citing the article during his “Pushing through the Inflection Point with Technical Computing” keynote address at the High Performance Computing Conference on Sept. 20, 2010, Bill Hilf, Microsoft’s general manager of technical computing, said: “We’re outpacing our ability to store data. We’re actually creating more bytes than we have the capacity to store. The challenge really is, what do you keep, what do you throw away? Many analysts predict that over the next five years, we’ll produce more data than we have ever produced in the history of humankind.”

To analyze, understand, and extract precious nuggets of wisdom from the sea of data, Microsoft expects many will turn to parallel processing and HPC. “How do you make developing parallel applications and debugging them easier? How do you make it graphically visible so you can understand the performance of the application?” asked Hilf rhetorically. He hopes developers will turn to Microsoft Visual Studio, an integrated software development platform, to identify and take advantage of parallelization opportunities.

HPC is beyond Microsoft’s traditional territory, operating systems. To navigate these uncharted waters, the company needs to bring hardware developers onboard. A Microsoft press statement explained, “To bolster Visual Studio 2010’s parallel development capabilities, Microsoft works closely with Intel and NVIDIA development suites to better debug, analyze, and optimize parallel code—Visual Studio provides parallel debugging and profiling tools.

“Both NVIDIA Parallel Nsight 1.5 and Intel Parallel Studio 2011 make Visual Studio an integrated development environment for parallelism. NVIDIA provides source code for debugging GPU applications along with tracing and profiling for both CPU and GPU, all on a single correlated timeline. Intel Parallel Studio adds several tools to improve parallel correctness checking, race condition detection, and parallel code analysis and tuning.”

Kenneth Wong has been a regular contributor to the CAD industry press since 2000, first as an editor, later as a columnist and freelance writer for various publications. During his nine-year tenure, he has closely followed the migration from 2D to 3D, the growth of PLM (product lifecycle management), and the impact of globalization on manufacturing. He is Desktop Engineering’s resident blogger. He’s afraid of PowerPoint presentations that contain more than 20 slides. Email him at Kennethwongsf [at] earthlink.net or follow him on Twitter [at] KennethwongSF.

About the Author

Kenneth Wong is Digital Engineering’s resident blogger and senior editor. Share your thoughts on this article at digitaleng.news/facebook.
