Latest News

July 21, 2014

The growing popularity of 3D printing among hobbyists and professional designers suggests a comparable rise in the use of reality-capture devices: hardware that lets you scan and capture the shape and geometry of physical objects. With 3D printers, Microsoft is betting that consumer models will pave the way for costlier, bigger professional models. (For more, read “Microsoft Adding Plug-and-Play 3D Printing to Windows OS,” May 7, 2014.) There’s good reason to make a similar assumption about 3D scanners.

Priced at $399, the Cubify Sense seems ready to capture not just geometry but also the attention of early adopters and curious tech users. Measuring roughly 7 x 5 x 1 inches, the 3D scanner is smaller and lighter than a typical hardcover book. The device has no independent power source; it operates through a USB connection to a tablet or computer. That computer is also required for downloading the Sense software, which activates and drives the device. Since whatever you want to scan may not be close to your desktop, a laptop or tablet you can carry around is the best option for operating the scanner.

The scanner works by emitting laser beams to detect the object’s surfaces and features. Because the scanner is handheld, capturing an object from all angles means gently moving around the target, scanning it from top to bottom, then from one side to the other. The most compute-intensive part of the operation, it turns out, is when the software assembles the point-cloud data captured from different angles into a single model of the target. If you have a professional workstation, you may encounter no glitches. Those with consumer-level machines, however, may run into hiccups, resulting in the error message “Lost Track of Object.”
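The Sense software’s own alignment method isn’t described here, so what follows is only a hypothetical illustration of the general idea behind assembling views: finding the rigid rotation and translation that best map points seen from one scanner position onto the same points seen from another. It is simplified to 2D and assumes the point correspondences are already known (real pipelines, such as those based on iterative closest point, must also estimate them). The names `align_2d` and `transform` are invented for this sketch.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]


def transform(points: List[Point], theta: float, tx: float, ty: float) -> List[Point]:
    """Rotate each point by theta (radians), then translate by (tx, ty)."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in points]


def align_2d(src: List[Point], dst: List[Point]) -> Tuple[float, float, float]:
    """Least-squares rigid alignment: return (theta, tx, ty) minimizing
    sum |R(theta) * src[i] + t - dst[i]|^2.

    Assumption: src[i] and dst[i] are the same physical point as seen
    from two scanner poses (known correspondences)."""
    if len(src) != len(dst) or len(src) < 2:
        raise ValueError("need at least two matched point pairs")
    n = len(src)
    # Centroid of each view
    sx = sum(p[0] for p in src) / n
    sy = sum(p[1] for p in src) / n
    dx = sum(p[0] for p in dst) / n
    dy = sum(p[1] for p in dst) / n
    # Accumulate cross (sine) and dot (cosine) terms of centered coordinates
    sin_term = 0.0
    cos_term = 0.0
    for (x, y), (u, v) in zip(src, dst):
        x, y, u, v = x - sx, y - sy, u - dx, v - dy
        sin_term += x * v - y * u
        cos_term += x * u + y * v
    # The optimal rotation angle maximizes the correlation between the views
    theta = math.atan2(sin_term, cos_term)
    c, s = math.cos(theta), math.sin(theta)
    # Translation moves the rotated source centroid onto the target centroid
    tx = dx - (c * sx - s * sy)
    ty = dy - (s * sx + c * sy)
    return theta, tx, ty
```

A full 3D version solves the same least-squares problem with 3x3 rotation matrices (commonly via a singular value decomposition) and repeats it as new frames arrive.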

Once the scanner loses track of the object, you can try to realign it by repositioning the scanner. In my tests, however, I found it was not easy to realign once the scanner had lost track of the target; it was much simpler to restart the scan altogether. Since whatever the scanner picks up is displayed on the tablet or computer screen, I quickly learned to position the laptop screen facing me so I could monitor progress while scanning.

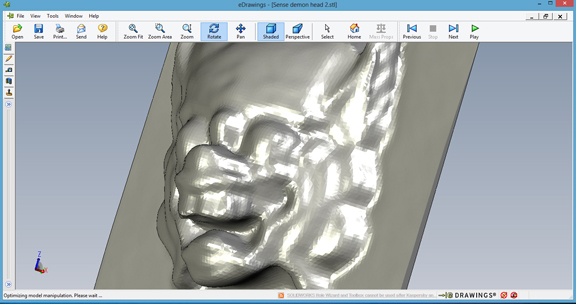

Because of imprecise hand movements, the scan data will inevitably have a few missing slices and gaps. The software’s Solidify function can effectively patch up those flaws to produce a complete polygon model, ready to be saved for editing or 3D printing. The resulting 3D model can be saved as OBJ or STL, both easily readable in standard CAD programs.
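The Sense software handles export on its own. As a rough, hypothetical illustration of what those two formats contain, the sketch below writes a tiny triangle mesh both as ASCII STL (each facet with its normal and its three vertices) and as OBJ (a shared vertex list plus 1-based face indices). The mesh, the helper names `to_ascii_stl` and `to_obj`, and the number formatting are assumptions for illustration only.

```python
import math
from typing import List, Tuple

Vec = Tuple[float, float, float]
Tri = Tuple[int, int, int]  # indices into the vertex list


def _normal(a: Vec, b: Vec, c: Vec) -> Vec:
    """Unit normal by the right-hand rule (counter-clockwise order faces outward)."""
    ux, uy, uz = b[0] - a[0], b[1] - a[1], b[2] - a[2]
    vx, vy, vz = c[0] - a[0], c[1] - a[1], c[2] - a[2]
    nx, ny, nz = uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx
    length = math.sqrt(nx * nx + ny * ny + nz * nz) or 1.0  # guard degenerate facets
    return nx / length, ny / length, nz / length


def to_ascii_stl(name: str, verts: List[Vec], tris: List[Tri]) -> str:
    """ASCII STL: every facet repeats its own three vertices."""
    lines = [f"solid {name}"]
    for i, j, k in tris:
        a, b, c = verts[i], verts[j], verts[k]
        n = _normal(a, b, c)
        lines.append(f"  facet normal {n[0]:e} {n[1]:e} {n[2]:e}")
        lines.append("    outer loop")
        for x, y, z in (a, b, c):
            lines.append(f"      vertex {x:e} {y:e} {z:e}")
        lines.append("    endloop")
        lines.append("  endfacet")
    lines.append(f"endsolid {name}")
    return "\n".join(lines) + "\n"


def to_obj(verts: List[Vec], tris: List[Tri]) -> str:
    """OBJ: vertices listed once, faces refer to them by 1-based index."""
    lines = [f"v {x} {y} {z}" for x, y, z in verts]
    lines += [f"f {i + 1} {j + 1} {k + 1}" for i, j, k in tris]
    return "\n".join(lines) + "\n"


# Usage: a tetrahedron with outward-facing triangles
tet_verts = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
tet_tris = [(0, 2, 1), (0, 1, 3), (0, 3, 2), (1, 2, 3)]
stl_text = to_ascii_stl("tet", tet_verts, tet_tris)
obj_text = to_obj(tet_verts, tet_tris)
```

The contrast shows a key structural difference: STL repeats each vertex for every facet that uses it, while OBJ lists each vertex once and refers to it by index. Binary STL, which many tools also accept, is not shown.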

The Sense scanner is lightweight and easy to use. Future versions could be improved by designing the device to operate independently, letting the user take it outdoors without carrying a tablet or computer. But that would require redesigning the scanner with a battery, memory card, and built-in display, additions that might push the cost beyond what enthusiasts and hobbyists would willingly pay.

In the reality-capture market, scanners like Sense compete with two types of technologies. The first is photogrammetry software such as Autodesk 123D Catch, which lets you upload a collection of digital photos of the same object, taken from various angles, to construct a 3D model. One challenge with the photo-based approach is that the target object needs well-defined profiles and colors. Shiny, reflective objects (like my shaved head, as I discovered) can be problematic for the software. The handheld Sense, on the other hand, has its own sensitivity: it works best when scanning objects placed against plain backgrounds. With “noisy” backgrounds, the software must work harder to isolate the target object’s profile and colors.

The other competition for Sense may be a few years away. Major hardware makers may begin integrating depth sensors into their laptops and desktops. In other words, there’s good reason to believe the computers and tablets you purchase two years from now may all come with depth cameras capable of capturing your surroundings in 3D. If that becomes the norm, the market for plug-and-play scanners may shrink.

Watch my video report on Sense below:


About the Author

Kenneth Wong is Digital Engineering’s resident blogger and senior editor. Email him at [email protected] or share your thoughts on this article at digitaleng.news/facebook.
