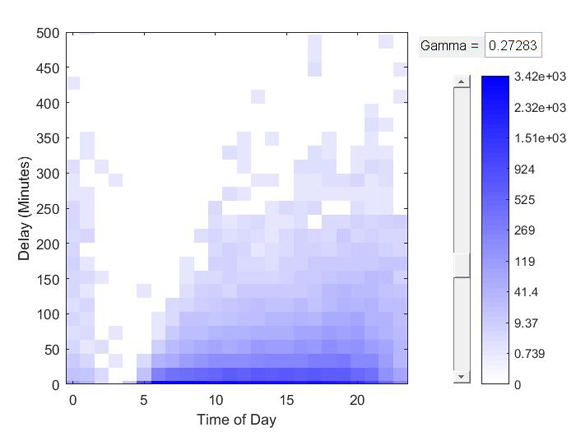

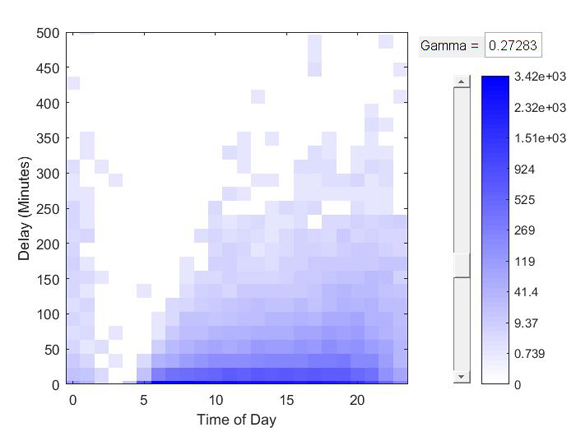

MathWorks releases MATLAB R2016b with support for tall arrays, or tall data, in many of its functions. (Image courtesy of MathWorks)

Latest News

September 26, 2016

What makes the data tall? Dave Oswill, MathWorks’ product marketing manager, said, “Think about sensor data collected from vehicles. Each piece of data has a time stamp. That’s a good example of what we call tall data, or tall array.”

For MathWorks, a tall array is typically columnar data with many rows, mathematical or statistical in nature, and too big to fit into computer memory. The company anticipates this type of data will be one of the biggest headaches in Big Data. Consequently, it decided to bolster the tall data processing functions in the latest release of MATLAB, Release 2016b (R2016b).

The latest version includes easier ways to access the data, data munging functions (to organize and prepare the data for automated analysis), and machine learning tools (to sift through the data and find insights using sophisticated algorithms).

In the announcement, MathWorks writes, “Tall arrays now provide a way to work naturally with out-of-memory data using familiar MATLAB functions and syntax, removing the need to learn big data programming. Engineers and scientists can use tall arrays with hundreds of math, statistics, and machine learning algorithms. Code can run on Hadoop clusters or be integrated directly into Spark applications.”

Oswill observed, “Because tall arrays are becoming so important that we see organizations moving them into SQL databases, NoSQL databases, and the likes of Hadoop distributed file systems. The data is usually too big to load into a machine’s memory, so we give you a way to work with that wherever they may be located.”

Paul Pilotte, MathWorks’ technical marketing manager, said, “Often, if you’re trying to analyze failures, like something that happened to the engine of an off-highway vehicle, you need to compare lots of sensor data from the field representing normal engine operations, from different models, with different engines. You might start doing some exploratory work with a smaller sample set that you can load easily. But if you want to confirm your theory or build some predictive models [to foretell pending failures], you’d need to look at, say, years of data. That could be tens of hundreds of GBs.”

Without the ability to work with large data sets, analysts would need to strip the data down to the most essential segments so it can fit into the processing machine’s memory. MATLAB R2016b is expected to facilitate working directly with large data sets instead, providing a means to rely on a larger sample pool for statistical analysis and deductions.

MathWorks has done the development work needed to handle tall data in smaller segments, process them, then aggregate the results. Pilotte explained, “When working with large data sets, it’s important to make sure you’re looping through the data the fewest number of times. Otherwise, the data access and looping operations could consume a lot of time and computation. MATLAB uses data queuing and paging, smart ways of accessing different data segments, and delayed processing to be much more efficient. We hide them so the users don’t see these.”
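The segment-process-aggregate idea Pilotte describes can be illustrated with a short, language-neutral sketch. The Python below is not MATLAB code and does not represent how MathWorks implements tall arrays; it only shows, under simplifying assumptions, how a summary can be computed in a single pass over data read one chunk at a time. The sample data, the `engine_temp` column, and the function names are all hypothetical.

```python
import csv
import io
from typing import Iterable, Iterator, List, Tuple

# Hypothetical stand-in for a time-stamped sensor log too large to load at once.
SAMPLE = "timestamp,engine_temp\n0,90.0\n1,92.5\n2,91.0\n3,95.5\n4,93.0\n"


def read_in_chunks(rows: Iterable[List[str]], chunk_size: int) -> Iterator[List[float]]:
    """Yield lists of at most chunk_size readings, so only one chunk is held in memory."""
    chunk: List[float] = []
    for row in rows:
        chunk.append(float(row[1]))  # column 1 holds the (hypothetical) sensor reading
        if len(chunk) == chunk_size:
            yield chunk
            chunk = []
    if chunk:  # emit the final, possibly shorter, chunk
        yield chunk


def summarize(chunks: Iterable[List[float]]) -> Tuple[int, float, float]:
    """Make one pass over the chunks, combining per-chunk partial results.

    Returns (row count, mean, maximum).
    """
    count, total, peak = 0, 0.0, float("-inf")
    for chunk in chunks:
        count += len(chunk)            # partial count
        total += sum(chunk)            # partial sum
        peak = max(peak, max(chunk))   # partial maximum
    if count == 0:
        raise ValueError("no data rows to summarize")
    return count, total / count, peak


def demo() -> Tuple[int, float, float]:
    reader = csv.reader(io.StringIO(SAMPLE))
    next(reader)  # skip the header row
    return summarize(read_in_chunks(reader, chunk_size=2))


if __name__ == "__main__":
    print(demo())
```

Computing the count, sum, and maximum together means the data is read only once, which reflects the point about looping through the data as few times as possible.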

Some machine learning tasks, such as analyzing raw image files, have been shown to benefit significantly from the parallel processing architecture of GPUs. MATLAB supports GPU acceleration through its Parallel Computing Toolbox.

About the Author

Kenneth Wong is Digital Engineering’s resident blogger and senior editor. Email him at [email protected] or share your thoughts on this article at digitaleng.news/facebook.
