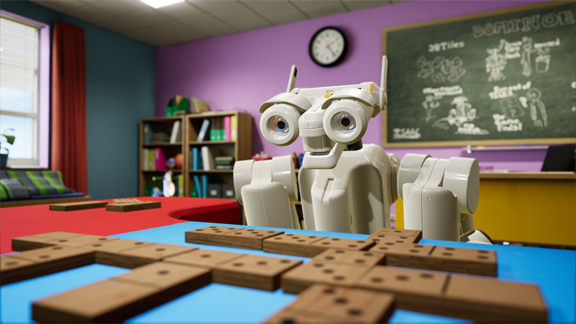

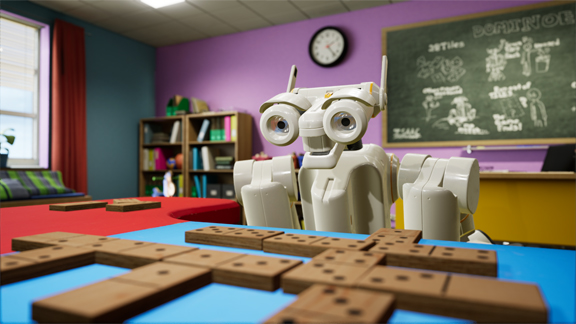

Virtual robot Isaac was trained using machine learning to play Dominoes (image courtesy of NVIDIA).

Latest News

August 2, 2017

If your primary computing device is a thin-and-light notebook, it’s highly unlikely your machine comes with a professional GPU. In such devices, stringent power and size constraints often leave no room for a workstation-class GPU. The graphics boost from a CPU with integrated graphics or a mobile GPU may be adequate for entry-level CAD work and content creation, but it falls short for compute-intensive rendering, simulation, and animation workflows. That is the gap GPU maker NVIDIA has decided to fill.

External Quadro GPU

At SIGGRAPH 2017, NVIDIA launches external Quadro GPUs, offered through a select group of partners (image courtesy of NVIDIA).

NVIDIA has just launched external Quadro GPU (eGPU) solutions. Much like an external media player or storage drive, the external Quadro GPU connects to a computing device via a Thunderbolt port. The bundled solutions, each pairing an eGPU chassis with a GPU, will initially come from Magma, Sonnet, AKiTiO, and Bizon, vendors that already offer external GPU solutions.

“For those who have invested in thin and light notebooks, this is an easy way to get the power of NVIDIA’s most capable professional GPU. In doing so, you instantly supercharge your prosumer or consumer device to work with larger, more complex 3D models, run programs with interactive ray-tracing, run simulation, even create VR content,” said Sandeep Gupte, director of professional visualization.

Prices will likely range from $300 to $700, depending on the chassis and GPU combination selected, Gupte estimated. “We want to get this solution to the market as quickly as possible. Magma, Sonnet, AKiTiO, and Bizon already have eGPU (external GPU) chassis that they’re offering. So it makes sense for us to partner with them,” he added.

Though a Thunderbolt port is the basic requirement, other factors may determine whether a machine can take advantage of the NVIDIA eGPU solution. NVIDIA and its partners plan to publish a list of requirements to help consumers find the appropriate eGPU for their existing machines.

Announced at SIGGRAPH 2017, the launch marks the first time NVIDIA Quadro has been made available as an eGPU. On the show floor, NVIDIA demonstrated lightweight notebook PCs using the eGPU to run SOLIDWORKS Visualize, a CAD-integrated rendering environment built on NVIDIA Iray.

Waiting to Touch Something in the Holodeck

In May, at its annual GPU Technology Conference (GTC), NVIDIA revealed its VR-powered design collaboration system, dubbed the Holodeck. At SIGGRAPH, NVIDIA demonstrated the Holodeck’s potential by bringing its virtual robot Isaac into the VR environment. On the show floor, the real Isaac (that is, the physical robot, not the digital twin) entertained attendees by playing Dominoes with them.

The robot’s Dominoes-playing skills are the result of virtual training, in which machine learning was applied to teach virtual Isaac the rules and objectives of the game.

“This is the intersection of AI, VR, and robotics,” said Gupte.

The advantage of virtual robot training is that it speeds up machine learning. Suppose it takes the physical robot Isaac a minute to pick up a ball. If it takes 6,000 tries to train Isaac to recognize and pick up the ball automatically, the training session will take at least 100 hours. With virtual Isaac, you can add computing power to run many instances of Isaac in parallel, reducing those 100 hours of training to just a few.
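
The arithmetic behind this example is easy to check. At one minute per attempt, every 60 attempts cost an hour of robot time, so 100 hours corresponds to 6,000 attempts. The minimal Python sketch below makes several assumptions: every attempt takes the same time, each simulated instance runs at real-time speed, and the work splits evenly. `training_hours` is a hypothetical helper, and the figure of 50 instances is illustrative, not an NVIDIA number.

```python
import math

def training_hours(attempts: int, minutes_per_attempt: float, instances: int = 1) -> float:
    """Wall-clock hours to finish `attempts` trials split evenly across
    `instances` copies of the robot running in parallel.

    Idealized: every attempt takes the same time and instances never
    wait on one another."""
    if instances < 1:
        raise ValueError("need at least one instance")
    # Each instance handles its share; the busiest instance sets the finish time.
    attempts_per_instance = math.ceil(attempts / instances)
    return attempts_per_instance * minutes_per_attempt / 60.0

# One physical robot, one minute per attempt.
print(training_hours(600, 1.0))       # 10.0
print(training_hours(6000, 1.0))      # 100.0
# Hypothetical: 50 simulated copies of Isaac working in parallel.
print(training_hours(6000, 1.0, 50))  # 2.0
```

If the simulation also runs faster than real time, the savings grow beyond what the instance count alone suggests.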

At GTC, NVIDIA suggested that the Holodeck would feature tactile feedback, among other things.

“The Holodeck is a sibling to the NVIDIA VR Funhouse [a mod kit for game makers],” said David Weinstein, director of professional VR. “They both run on the same underlying technology, which incorporates GameWorks, VRWorks and DesignWorks SDKs. VR Funhouse can generate force feedback using the NVIDIA PhysX engine [part of GameWorks]. So the same function is available for implementing tactile feedback in the Holodeck.”

NVIDIA certainly plans to develop applications that run on the Holodeck, but some applications may come from third-party developers and NVIDIA partners, according to Weinstein. The Holodeck itself can calculate and issue feedback (for example, when a user’s avatar collides with a digital solid). But the app developer also needs to incorporate tactile-capable devices (for example, haptic gloves or body suits) to let users feel such forces.
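
To make that division of labor concrete, the minimal Python sketch below shows one kind of calculation a VR engine could perform when an avatar’s fingertip touches a digital solid. It uses a penalty (spring) model, in which the push-back force grows with how far the fingertip has sunk into the surface. This is a generic, commonly used haptic-rendering technique, not the Holodeck’s or PhysX’s implementation. The function name, stiffness value, and probe geometry are hypothetical. Delivering the resulting force through a glove or suit is the device-specific part left to the app developer.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]

def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def contact_force(probe_center: Vec3, probe_radius: float,
                  plane_point: Vec3, plane_normal: Vec3,
                  stiffness: float) -> Vec3:
    """Penalty (spring) force pushing a spherical probe, such as an avatar's
    fingertip, out of a solid whose surface is the given plane.
    plane_normal points from the solid toward free space."""
    length = math.sqrt(dot(plane_normal, plane_normal))
    n = (plane_normal[0] / length, plane_normal[1] / length, plane_normal[2] / length)
    # Signed distance from the probe center to the surface.
    rel = (probe_center[0] - plane_point[0],
           probe_center[1] - plane_point[1],
           probe_center[2] - plane_point[2])
    penetration = probe_radius - dot(rel, n)
    if penetration <= 0.0:
        return (0.0, 0.0, 0.0)  # not touching: nothing to feel
    # Hooke's law: the deeper the probe sinks in, the harder the surface pushes back.
    magnitude = stiffness * penetration
    return (magnitude * n[0], magnitude * n[1], magnitude * n[2])

# Hypothetical values: fingertip of 1 cm radius, floor at z = 0, stiffness 500 N/m.
force = contact_force((0.0, 0.0, 0.005), 0.01, (0.0, 0.0, 0.0), (0.0, 0.0, 1.0), 500.0)
print(force)  # roughly (0.0, 0.0, 2.5): about 2.5 N straight up
# A haptic glove driver (device-specific, not shown) would render this force.
```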

The Holodeck was open to SIGGRAPH attendees who wanted to try it, though the version shown at SIGGRAPH did not offer haptic feedback.

NVIDIA Research shows the use of AI-driven technology to process noise in rendering (image courtesy of NVIDIA).

AI-Accelerated Rendering

In the last several years, NVIDIA has devoted significant energy and R&D resources to AI-based workflows, which the company expects to be a growth area for GPU computing. It has already launched a few products targeting this market. The DGX Station, billed as “a personal supercomputer for leading-edge AI development,” is already on the market for AI pioneers who need server-class compute power in a small form factor.

This year at SIGGRAPH, NVIDIA demonstrated the use of AI to speed up rendering by applying predictive algorithms to accelerate noise reduction. Ray-traced rendering is compute-intensive because it must accurately calculate and depict how light reflects through a scene. As a result, whenever a user repositions a scene, a character, or a model, the calculation has to start over. Until it catches up, the image is full of “noise,” meaning clusters of unresolved pixels.
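
Ray tracers typically estimate each pixel by averaging random light samples (Monte Carlo integration). Because of that, the error in a freshly restarted render shrinks only with the square root of the sample count, so quadrupling the samples roughly halves the noise. The toy Python sketch below illustrates this effect. The one-dimensional "pixel" and its brightness function are made up for demonstration and do not reflect how Iray or any particular renderer models a scene.

```python
import math
import random

def true_brightness(u: float) -> float:
    """Hypothetical light arriving across a pixel's footprint, u in [0, 1):
    30% of the pixel sees a bright light, the rest sees a dim wall."""
    return 1.0 if u < 0.3 else 0.2

EXACT = 0.3 * 1.0 + 0.7 * 0.2  # the fully converged pixel value (0.44)

def render_pixel(samples: int, rng: random.Random) -> float:
    # Monte Carlo estimate: average the light at random points in the pixel.
    return sum(true_brightness(rng.random()) for _ in range(samples)) / samples

def rms_error(samples: int, pixels: int = 2000, seed: int = 1) -> float:
    """Root-mean-square error over many independently rendered pixels."""
    rng = random.Random(seed)
    sq = [(render_pixel(samples, rng) - EXACT) ** 2 for _ in range(pixels)]
    return math.sqrt(sum(sq) / pixels)

for spp in (1, 4, 16, 64, 256):
    print(f"{spp:4d} samples/pixel -> RMS error {rms_error(spp):.4f}")
```

Each fourfold increase in samples per pixel should print an error roughly half the previous one. The fast, fixed-budget alternative is to predict the converged image from a noisy one, which is the problem a denoiser tackles.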

NVIDIA’s AI-driven denoising algorithm is trained on a large set of images so that it can predict what a noisy, partially rendered image will look like once fully resolved. It is the work of NVIDIA Research, the division that studies groundbreaking GPU computing technologies.
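
NVIDIA’s denoiser is a deep neural network, far beyond the scope of a short example. The general idea behind supervised training of this kind can still be sketched in miniature. You show the model pairs of noisy and clean images, then fit its parameters so that its output on the noisy input matches the clean version. The Python toy below swaps in a one-parameter linear filter on a 1D signal in place of a neural network. The signal, noise level, and helper names are all hypothetical.

```python
import math
import random

def box_blur(signal):
    """3-tap moving average; edge samples reuse their nearest neighbor."""
    n = len(signal)
    return [(signal[max(i - 1, 0)] + signal[i] + signal[min(i + 1, n - 1)]) / 3.0
            for i in range(n)]

def fit_blend(pairs):
    """Least-squares fit of a single weight `a` for the model
    output = x + a * (blur(x) - x), trained on (noisy, clean) pairs."""
    num = den = 0.0
    for noisy, clean in pairs:
        for x, b, c in zip(noisy, box_blur(noisy), clean):
            num += (b - x) * (c - x)
            den += (b - x) ** 2
    return num / den  # closed-form minimizer of the squared error

def denoise(noisy, a):
    return [x + a * (b - x) for x, b in zip(noisy, box_blur(noisy))]

def mse(u, v):
    return sum((p - q) ** 2 for p, q in zip(u, v)) / len(u)

def make_pair(rng, length=200):
    # Hypothetical data: a smooth "converged" row of pixels plus Gaussian noise
    # standing in for a render with too few samples.
    clean = [0.5 + 0.4 * math.sin(i / 15.0) for i in range(length)]
    noisy = [c + rng.gauss(0.0, 0.1) for c in clean]
    return noisy, clean

rng = random.Random(0)
train = [make_pair(rng) for _ in range(20)]
a = fit_blend(train)
test_noisy, test_clean = make_pair(rng)
print(f"learned weight a = {a:.2f}")
print(f"MSE before: {mse(test_noisy, test_clean):.5f}, "
      f"after: {mse(denoise(test_noisy, a), test_clean):.5f}")
```

The weight is learned from examples rather than hand-tuned, so the same fitting step would adapt to a different noise level. A neural denoiser applies the same principle with a far more expressive model.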

SIGGRAPH, once a show devoted to computer graphics, is itself in a state of transition. This year, discussions on the show floor and in the exhibit booths were dominated by machine learning, AI, and inventive uses of robotics. NVIDIA’s new focus reflects the same shift, an outcome of graphics hardware and software’s growing penetration into scientific research.

About the Author

Kenneth Wong is Digital Engineering’s resident blogger and senior editor. Email him at [email protected] or share your thoughts on this article at digitaleng.news/facebook.
