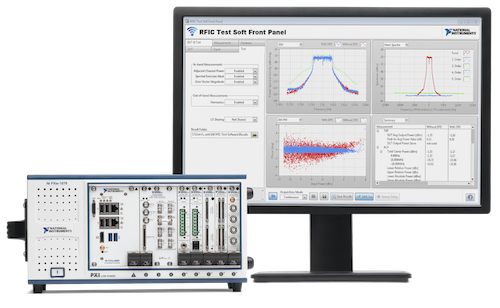

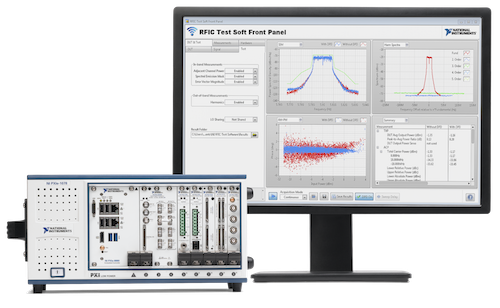

Organizations are transitioning from rack-and-stack box instruments and closed-architecture automated test equipment (ATE) systems to smarter test systems. Image Courtesy of National Instruments

January 19, 2017

Along with its impact on everything from design to maintenance, the Internet of Things (IoT) promises to turn the relatively staid area of test and measurement on its head as companies retool their strategies for the era of smart products.

In the 10th anniversary edition of its Automated Test Outlook, National Instruments outlines what it sees as the biggest trends affecting test and measurement going forward. One of the most significant changes is rethinking test strategies to keep pace with the growing number of software- and sensor-rich products, and taking a modular approach so that teams are always working with the latest protocols and technologies, according to Adam Foster, NI's senior product marketing manager for test systems.

“You need to have a smarter approach to testing if you want to test a smart product and a lot of times, people view that as two separate things,” he explains. “People have to be thinking about the test system in the same way as a smart connected device: It has to be built to be flexible, it needs to stick around for a while, and it can’t sacrifice functionality.”


What makes a test system smart? NI says the key ingredients are flexibility, software-defined I/O modules, and the computational horsepower to process signals both locally and in the cloud. With that in mind, below are some of the key trends influencing testing in 2017 and beyond.

1. Reconfigurable instrumentation.

According to NI, software-defined instrumentation built on a modular architecture is the best way to achieve a high level of reconfigurability, which is critical for meeting the challenges of today's IoT test applications. "This isn't just changing API calls, but the ability to unlock FPGAs and write the same graphical code to build and test systems," Foster explains. FPGAs are reprogrammable silicon chips that provide prebuilt logic blocks and programmable routing resources, which engineers can reconfigure to implement custom hardware functionality. Today, new tools that convert graphical block diagrams or C code into hardware circuitry are putting that capability within reach of more test engineers.
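The core idea, that one set of acquisition hardware can become a different instrument purely through software, can be illustrated with a small Python sketch. Everything here is hypothetical and is not NI's FPGA or LabVIEW tooling: `acquire` fakes a digitizer, and the two "personalities" (an RMS voltmeter and a zero-crossing frequency counter) are simple illustrative algorithms chosen only to show the reconfiguration pattern.

```python
import math
from typing import Callable, Dict, List

Samples = List[float]


def acquire(freq_hz: float, amplitude: float, sample_rate: float, n: int) -> Samples:
    """Hypothetical digitizer stand-in: returns n samples of a sine wave."""
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate) for i in range(n)]


def measure_rms(samples: Samples, sample_rate: float) -> float:
    """Voltmeter personality: root-mean-square value of the record."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def measure_frequency(samples: Samples, sample_rate: float) -> float:
    """Counter personality: frequency from the mean spacing of rising zero crossings."""
    rising = [i for i in range(1, len(samples)) if samples[i - 1] < 0.0 <= samples[i]]
    if len(rising) < 2:
        return float("nan")
    mean_period = (rising[-1] - rising[0]) / (len(rising) - 1)  # in samples
    return sample_rate / mean_period


class SoftwareDefinedInstrument:
    """Same acquisition front end; the measurement function is selected in software."""

    PERSONALITIES: Dict[str, Callable[[Samples, float], float]] = {
        "rms_voltmeter": measure_rms,
        "frequency_counter": measure_frequency,
    }

    def __init__(self, sample_rate: float):
        self.sample_rate = sample_rate
        self.personality = "rms_voltmeter"

    def configure(self, personality: str) -> None:
        """Reconfigure the instrument without touching the (simulated) hardware."""
        if personality not in self.PERSONALITIES:
            raise ValueError(f"unknown personality: {personality}")
        self.personality = personality

    def measure(self, samples: Samples) -> float:
        return self.PERSONALITIES[self.personality](samples, self.sample_rate)


if __name__ == "__main__":
    inst = SoftwareDefinedInstrument(sample_rate=10_000.0)
    record = acquire(freq_hz=50.0, amplitude=2.0, sample_rate=10_000.0, n=10_000)
    print(inst.measure(record))   # approximately 1.414 (2 / sqrt(2))
    inst.configure("frequency_counter")
    print(inst.measure(record))   # approximately 50.0
```

The same recorded samples yield a voltage reading or a frequency reading depending only on which software function is active; in a real system that function might be compiled to an FPGA rather than run on a host CPU.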

2. Managed test systems.

As more data is collected, there is a greater need to manage it, ensuring it is stored in the right format and made available to the right test assets. According to the report, a managed test system lowers integration risk and allows issues to be resolved efficiently; it streamlines test station deployments by ensuring fast, repeatable operation; and it lowers total cost of ownership by enabling problems to be monitored and diagnosed proactively.

3. Increasing reliance on hardware-in-the-loop (HIL) testing.

Thorough testing of embedded software across an exhaustive range of real-world scenarios is now standard operating procedure in many highly regulated industries, including medical devices, aerospace and defense, and automotive. To shorten time to market and lower test costs, companies are turning to hardware-in-the-loop testing as a viable way to move test time out of the field and into the lab. The method uses models to simulate real-world environments, providing closed-loop feedback to the controller being tested.
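To make the closed-loop idea concrete, here is a minimal Python sketch; it is not NI's HIL technology. It assumes a hypothetical first-order thermal model as the simulated environment and a PI controller as a stand-in for the device under test; all names, gains and pass/fail limits are illustrative. On an actual HIL rig the model runs on real-time hardware and exchanges signals with the physical controller through I/O modules, so this pure-software loop is strictly a software-in-the-loop illustration of the same structure.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ThermalPlantModel:
    """Hypothetical model of the environment: dT/dt = (-(T - ambient) + gain * u) / tau."""
    temp_c: float = 20.0     # simulated temperature, degC
    ambient_c: float = 20.0  # ambient temperature, degC
    gain_c: float = 50.0     # steady-state rise at full actuator command (u = 1.0), degC
    tau_s: float = 10.0      # thermal time constant, s

    def step(self, u: float, dt: float) -> float:
        """Advance the model by dt seconds (forward Euler); return the simulated sensor reading."""
        dtemp = (-(self.temp_c - self.ambient_c) + self.gain_c * u) / self.tau_s
        self.temp_c += dtemp * dt
        return self.temp_c


class ControllerUnderTest:
    """Stand-in for the embedded controller; on a real HIL rig this logic runs on the device."""

    def __init__(self, setpoint_c: float, kp: float = 0.03, ki: float = 0.005):
        self.setpoint_c, self.kp, self.ki = setpoint_c, kp, ki
        self.integral = 0.0

    def update(self, measured_c: float, dt: float) -> float:
        """PI control law; returns an actuator command clamped to the range 0..1."""
        error = self.setpoint_c - measured_c
        self.integral += error * dt
        u = self.kp * error + self.ki * self.integral
        return min(max(u, 0.0), 1.0)


def run_closed_loop(setpoint_c: float, duration_s: float, dt: float = 0.01) -> List[float]:
    """Close the loop: model output feeds the controller, controller output drives the model."""
    plant = ThermalPlantModel()
    controller = ControllerUnderTest(setpoint_c)
    reading = plant.temp_c
    trace = []
    for _ in range(round(duration_s / dt)):
        command = controller.update(reading, dt)  # controller sees the simulated sensor
        reading = plant.step(command, dt)         # model responds to the controller's command
        trace.append(reading)
    return trace


if __name__ == "__main__":
    trace = run_closed_loop(setpoint_c=45.0, duration_s=120.0)
    # Illustrative pass/fail criteria a test engineer might apply to the recorded response.
    overshoot = max(trace) - 45.0
    settled = abs(trace[-1] - 45.0) < 0.1
    print(f"peak overshoot: {overshoot:.2f} degC, settled: {settled}")
```

Because the environment is a model, the same test can be rerun with different ambient temperatures, time constants or fault conditions without building any physical fixtures, which is the source of the time and cost savings described above.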

The idea of convergence, in which devices combine a range of technologies, is driving much of the complexity that calls for new testing methods. "We're getting to the point where there are no longer disparate things to test, but there are thousands of sensors, lidar, radar and cameras, and you have to test everything at the same time," says Foster. "We see software-defined testing, modular systems and managed test systems as the solution to testing converged systems and smart devices."



About the Author

Beth Stackpole is a contributing editor to Digital Engineering.
